
A HARROWING EXPERIENCE

http://youtu.be/2m2ywYlok5E

(PDF link)

– 14 LED 7-Segment Display

– 3V Coin Cell Battery

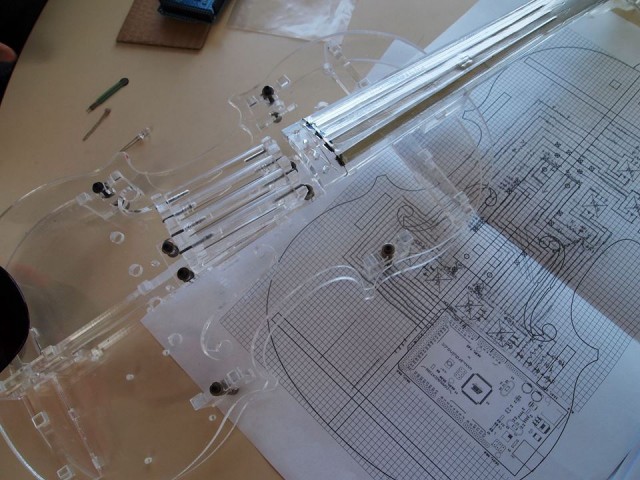

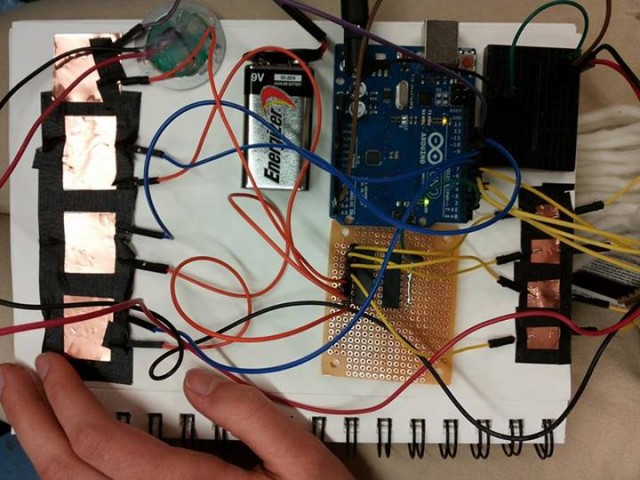

Initially, I wanted to create a fairly traditional violin in which only the strings were electronic. During the project discussion, however, it was pointed out to me that this had already been done, several times over. I was advised to make an instrument which was truly my own, and I have now done so:

Here are some electronic violins I considered using as a starting point.

Unfortunately, CMU students are overachievers and someone got here first. They didn’t have LED lights in their acrylic violin, but it was too close to emulate.

Here was my first mockup. (You know how most people do drafts in their sketchbook? I couldn’t close mine after this one.)

This is my project to date. Thanks!

Soliciting participants on Facebook – my original scheme for the final project

I planned to print screenshots out and frame them like so

As is often the case in art, my project to capture the things that make us smile turned out to have been implemented a year before by Brooklyn artist Kyle McDonald. The embarrassing part of this is that I – unknowingly – used Kyle’s library to make my project.

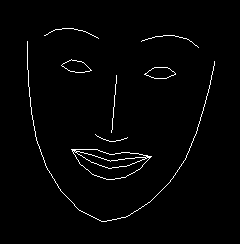

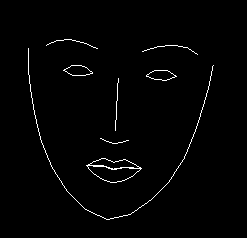

In any case, this initial attempt/failure emboldened me to try something more nuanced with faces. I wanted to consider a continuum of expressions as opposed to a binary smile-on smile-off.

Face Seismograph

Face Seismograph is a tool for recording and graphing states of excitement over time. It was written in openFrameworks using Kyle McDonald’s ofxFaceTracker addon.

Excited?

Excited!

The seismograph measures excitement by tracking the degree to which one smiles or moves their eyebrows from a resting state.
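One way to read that measurement, as a minimal sketch and not the project's actual code: take a mouth-width and an eyebrow-height reading per frame, compare each with a resting value, and blend the two deviations into one score. The names `FaceSample` and `excitement`, the normalization limits, and the equal weighting are all assumptions made for illustration; in the real tool the readings would presumably come from ofxFaceTracker.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Hypothetical per-frame measurements; in practice a face tracker supplies them.
struct FaceSample {
    float mouthWidth;     // wider than the resting value suggests a smile
    float eyebrowHeight;  // movement in either direction counts
};

// Returns a score in [0, 1]: how far the face is from its resting state.
// maxSmile and maxBrow are assumed upper bounds used to normalize each feature.
float excitement(const FaceSample& rest, const FaceSample& now,
                 float maxSmile, float maxBrow) {
    assert(maxSmile > 0.0f && maxBrow > 0.0f);
    float smile = std::max(0.0f, now.mouthWidth - rest.mouthWidth) / maxSmile;
    float brow = std::fabs(now.eyebrowHeight - rest.eyebrowHeight) / maxBrow;
    // Equal weighting of the two features is an arbitrary choice for this sketch.
    return 0.5f * std::min(smile, 1.0f) + 0.5f * std::min(brow, 1.0f);
}
```

Logging this score once per frame would give the kind of trace a seismograph-style graph needs.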

One limitation of this approach is that in practice, internal states of excitement or arousal may not have corresponding facial expressions.

Genuinely excited

Depressed

I staged a casual conversation between myself and a friend. While we chatted about life, two instances of Face Seismograph approximated and recorded the intensity of our excitement. Viewing the history of our facial expressions, I began to notice surprising rhythms of expression.

To present this conversation, I play each recording on a separate iMac. The two recordings are synchronized via OSC. A viewer can scrub through the video on both computers simultaneously.

In a future iteration of this project, I’d like to highlight the comparison of excitement signatures with greater clarity. Also, I need to label my axes.

The Interactive Development Environment: bringing the creative process to the art of coding.

TIDE is an attempt to integrate the celebrated traditions of the artistic process and the often sterile environment of programming. The dialogue of creative processes exists in the process of coding as frustration: debugging, fixing, and fidgeting over details until the programmer and program are unified. TIDE attempts to turn this frustration into the exhilaration of dialogue between artist and art.

The environment uses a Kinect to find and track gestures that correspond to values. Processing code is written with these values and saved in a .txt file. The resulting code creates a simple shape on screen. I want to expand this project to recognize more gestures and patterns, allowing much more complicated systems to be implemented. Ironically, I found that implementing and testing this project generated the very frustration and sterility I was trying to eradicate with the intuitive, free-flowing motions I could get with the Kinect.
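The step from gesture values to saved Processing code might look roughly like the sketch below. This is only a guess at the shape of that step, not TIDE's source: `GestureValues`, `makeProcessingSketch`, `saveSketch`, and the choice of a rectangle and a 640 by 480 canvas are hypothetical.

```cpp
#include <cassert>
#include <fstream>
#include <sstream>
#include <string>

// Hypothetical values derived from tracked gestures (for example, hand positions).
struct GestureValues {
    int x, y, width, height;
};

// Builds the text of a small Processing sketch that draws one rectangle.
std::string makeProcessingSketch(const GestureValues& g) {
    assert(g.width > 0 && g.height > 0);
    std::ostringstream out;
    out << "void setup() {\n"
        << "  size(640, 480);\n"
        << "}\n\n"
        << "void draw() {\n"
        << "  rect(" << g.x << ", " << g.y << ", "
        << g.width << ", " << g.height << ");\n"
        << "}\n";
    return out.str();
}

// Saves the generated code to a text file; returns false if writing fails.
bool saveSketch(const std::string& code, const std::string& path) {
    std::ofstream file(path);
    if (!file) return false;
    file << code;
    return static_cast<bool>(file);
}
```

Recognizing more gestures would then mostly mean adding fields to the struct and more lines to the generated text.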

Ever since I was a kid, I’ve been fascinated by the idea of our stuffed animals and toys coming to life, much like the toys in Toy Story. With that vision in mind, and after discussing my idea with a few classmates, I decided to bring some of Toy Story to life. My inspiration came from revisiting old children’s movies in which all the objects and creatures were personified.

“Little Wonders” explores the idea of objects moving and interacting when we aren’t there to see the interactions exchanged. I loved the idea that we existed in a world with a more fantastical side that we may never be able to fully unveil.

Some of the technical challenges included creating a realistic interpretation of the “creatures’” movements. I wanted the movements to be subtle enough that people would second-guess themselves, but smooth enough that they wouldn’t appear as robotic as the servos. I added an easing effect to smooth the changes in movement. Another challenge was connecting the power cord to the Teensy while keeping it hidden. In the end, I placed the shelf slightly between the two doors so that the cord could slip behind the shelf and between the doors inconspicuously.
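The easing effect can be illustrated on its own, as a sketch under a few assumptions. On each update the position moves a fixed fraction of the remaining distance toward its destination, so it starts fast and slows as it arrives. The listing that follows stores the smoothed positions as `int`, which drops the fractional part on every update; this sketch keeps them as `float` instead, which is my assumption and not the author's code. `easeToward` and `stepsToSettle` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>

// Moves `current` a fixed fraction of the way toward `target`.
// `easing` is the fraction covered per update (the listing uses 0.03 to 0.09).
float easeToward(float current, float target, float easing) {
    assert(easing > 0.0f && easing <= 1.0f);
    return current * (1.0f - easing) + target * easing;
}

// Counts the updates needed to get within `tolerance` degrees of the target.
// Gives up after 10000 updates so the loop always terminates.
int stepsToSettle(float start, float target, float easing, float tolerance) {
    assert(tolerance > 0.0f);
    int steps = 0;
    float position = start;
    while (std::fabs(target - position) > tolerance && steps < 10000) {
        position = easeToward(position, target, easing);
        ++steps;
    }
    return steps;
}
```

A smaller easing value gives a slower, heavier-looking motion, which may be why the jaguar gets the smallest value of the three in the listing.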

#include <Metro.h>

#include <Servo.h>

int duration = 3500;

// metros

Metro mouse1 = Metro (duration);

Metro mouse2 = Metro (duration);

Metro jaguar = Metro (duration);

//servos

Servo sMouse1;

Servo sMouse2;

Servo sJaguar;

// destinations

int dm1_0;

int dm2_0;

int dj0;

// destinations to be smoothed

int dm1_0_sh;

int dm2_0_sh;

int dj0_sh;

float easing[] = {

0.09,0.08,0.03};

const int motionPin = 0;

int noMotion;

int mouse1Motion = 0;

int motionLevel;

void setup(){

Serial.begin(9600);

// pin 5 missing

sMouse1.attach(0);

sMouse2.attach(1);

sJaguar.attach(2);

// initial destinations

dm1_0 = 0;

dm2_0 = 90;

dj0 = 135;

// initial destinations to be smoothed

dm1_0_sh = 0;

dm2_0_sh = 90;

dj0_sh = 135;

noMotion = 0;

}

void loop(){

motionLevel = analogRead(motionPin);

motionLevel = map(motionLevel,0,656, 0,100);

motionLevel = constrain(motionLevel, 0,100);

//motionLevel = 0;

if (motionLevel < 20 ){

noMotion ++;

if (noMotion >= 100){

if (mouse1.check() == 1){

dm1_0 = random(0,180);

mouse1.interval(random(200,800));

mouse1Motion ++;

}

dm1_0_sh = dm1_0_sh *(1.0 - easing[0]) + dm1_0 * easing[0];

sMouse1.write(dm1_0_sh);

if (noMotion >= 120){

if(mouse1Motion >= 10){

if (mouse2.check() == 1){

dm2_0 = random(0,180);

mouse2.interval(random(300,800));

}

dm2_0_sh = dm2_0_sh * (1.0 - easing[1]) + dm2_0 * easing[1];

sMouse2.write(dm2_0_sh);

}

}

if (noMotion >= 150){

if (mouse1Motion >= 14 ){

if (jaguar.check() == 1){

dj0 = random(90,180);

jaguar.interval(random(500,2000));

}

dj0_sh = dj0_sh * (1.0 - easing[2]) + dj0 * easing[2];

sJaguar.write(dj0_sh);

}

}

}

}

else{

noMotion = 0;

}

//Serial.print("motion: ");

Serial.println(motionLevel);

//Serial.println(dj0_sh);

delay(25);

}

Some images from the presentation:

//Revolving Games by Michelle Ma

#include <SD.h>

#include <Wire.h>

#include "RTClib.h"

#include "Adafruit_LEDBackpack.h"

#include "Adafruit_GFX.h"

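// header names below are assumed; the original include targets were lost

#include <Adafruit_Sensor.h>

#include <Adafruit_ADXL345.h>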

Adafruit_7segment matrix1 = Adafruit_7segment();

Adafruit_7segment matrix2 = Adafruit_7segment();

Adafruit_ADXL345 accel = Adafruit_ADXL345(12345);

RTC_DS1307 RTC; // Real Time Clock

const int chipSelect = 10; //for data logging

const int distancePin = 0; //A0 for IR sensor

const int threshold = 100; //collect data when someone near

const float radius = 0.65; //radius of door in meters

const float pi = 3.1415926;

File logfile;

int highScore;

void setup() {

Serial.begin(9600);

SD.begin(chipSelect);

createFile();

accel.begin();

matrix1.begin(0x70);

matrix2.begin(0x71);

if (!RTC.isrunning()) {

RTC.adjust(DateTime(__DATE__, __TIME__));

}

accel.setRange(ADXL345_RANGE_16_G);

highScore = 0;

}

void createFile() {

char filename[] = "LOGGER00.CSV";

for (uint8_t i=0; i<100; i++) {

filename[6] = i/10 + '0';

filename[7] = i%10 + '0';

if (!SD.exists(filename)) {

logfile = SD.open(filename, FILE_WRITE);

break;

}

}

if (!logfile) {

Serial.print("Couldn't create file");

Serial.println();

while(true);

}

Serial.print("Logging to: ");

Serial.println(filename);

Wire.begin();

if (!RTC.begin()) {

Serial.println("RTC error");

}

logfile.println("TimeStamp,IR Distance,Accel (m/s^2),RPM");

}

void loop() {

DateTime now = RTC.now();

sensors_event_t event;

accel.getEvent(&event);

float distance = analogRead(distancePin);

float acceleration = event.acceleration.z;

float rpm;

if (distance > threshold) {

rpm = computeRpm();

} else {

rpm = 0;

}

if (rpm > highScore) {

highScore = rpm;

}

logData(now, distance, acceleration, rpm);

serialData(now, distance, acceleration, rpm);

writeMatrices(int(rpm), int(highScore));

Serial.println("Saved...");

logfile.flush();

}

float computeRpm() {

float velocity = computeVelocity();

float result = abs(60.0*velocity)/(2.0*pi*radius);

return result;

}

float computeVelocity() {

float acceleration;

float sum = 0;

int samples = 100;

int dt = 10; //millis

for (int i=0; i