Tyvan – LookingOutwards-04

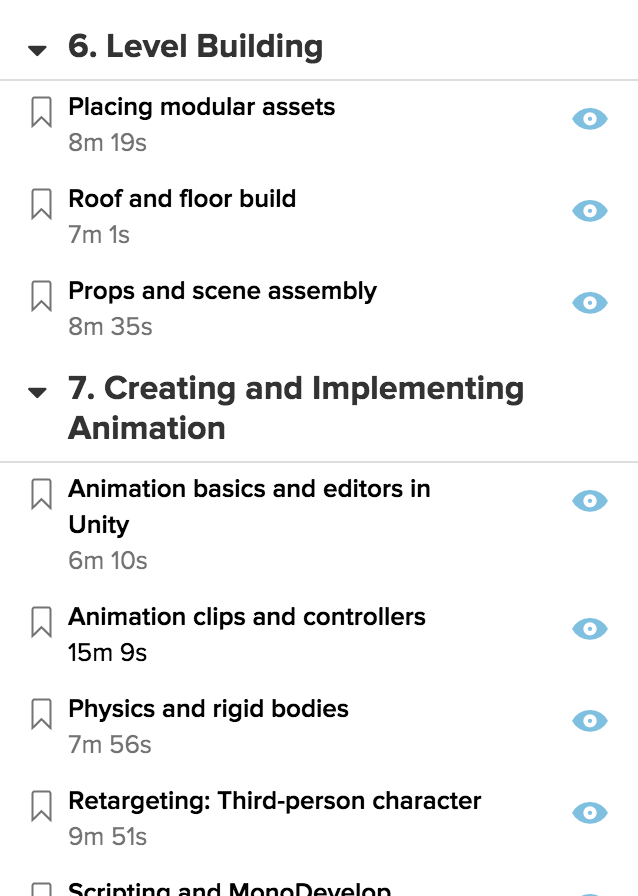

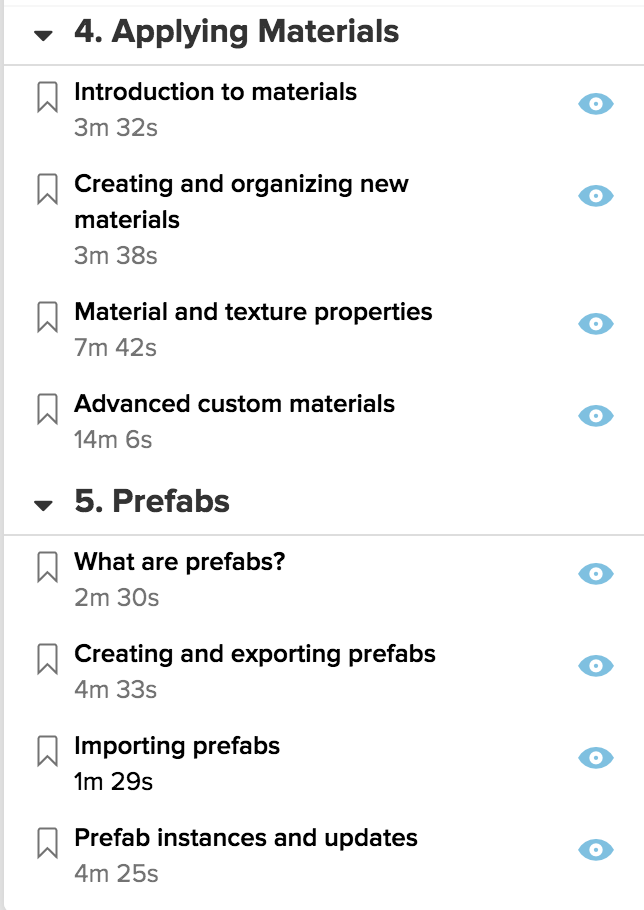

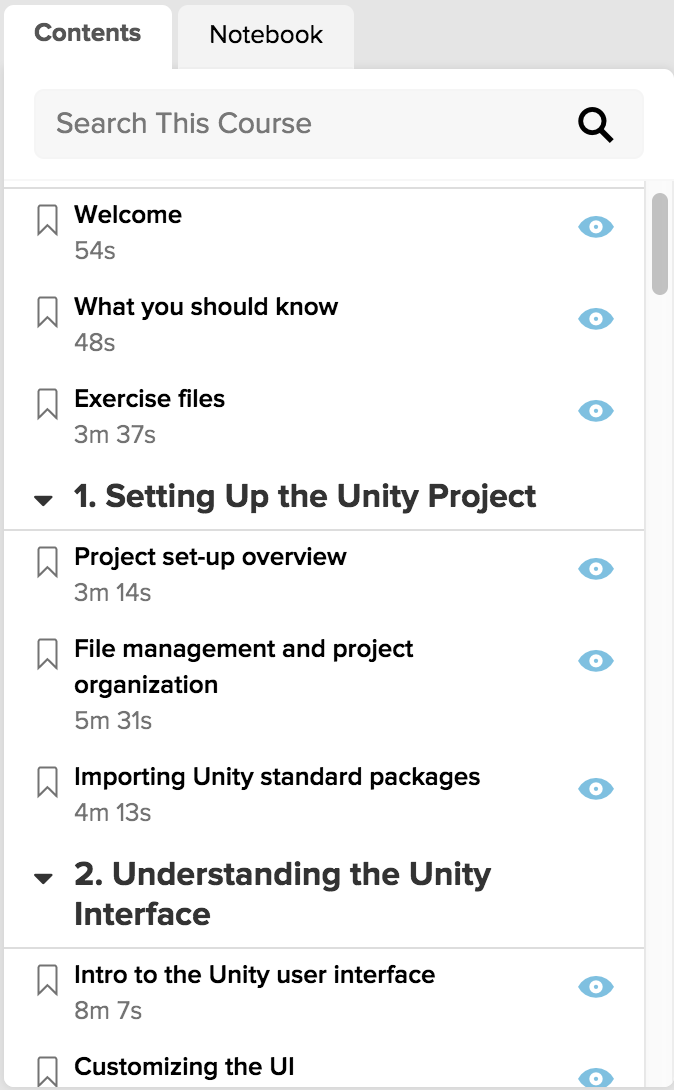

This Looking Outwards is dedicated to the artist, scientist, and technologist Philipp Drieger. The three images above are interpretive information translations rendered from his code. Each video exhibits tension between two- and three-dimensional representations of information.

Propagation of Error foreshadows the multi-dimensional experience to come. Priming the viewer with highly graphic, orthogonal imagery adds a 2d lens to the introduction of a third dimension: interaction. As multiple 2d patterns are added to the central shape, they interfere with one another, layering on more information. I enjoy Drieger’s decision to include a non-spatial dimension before revealing the central shape’s form, because it ties complexity to interaction rather than to space itself. As the third spatial dimension is revealed, so is color, Dorothy!

I discovered his first project while climbing through YouTube, watching some music visualizer demos made in Processing. Drieger’s video stood out from the others because he treated timing intervals and dramatic movement as core traits of music.

2.073.600 Pixels in Space (the pixel count of one 1920 × 1080 frame) is a 2.5d extraction of brightness values. This is the same method of dimensional interpolation I used for Mocap (particularly at 1:30). The camera's movement through the reliefs heightens the tension between the boundaries of 2d, 2.5d, and 3d space.
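As a rough illustration of what a "2.5d extraction of brightness values" can mean, here is a minimal sketch that turns each pixel of an image into a point whose depth is proportional to its brightness. This is not Drieger's code; the Rec. 709 luma weights, the `max_depth` parameter, and the function names are assumptions chosen for the example.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (r, g, b), each channel 0-255


def luminance(p: Pixel) -> float:
    """Perceived brightness of a pixel, using Rec. 709 luma weights (an assumption)."""
    r, g, b = p
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def brightness_to_relief(
    image: List[List[Pixel]], max_depth: float = 100.0
) -> List[Tuple[int, int, float]]:
    """Hypothetical helper: map every pixel (x, y) to a 3d point (x, y, z).

    The image stays a flat grid in x and y; only z is driven by brightness,
    which is why the result reads as 2.5d rather than fully 3d.
    """
    points = []
    for y, row in enumerate(image):
        for x, p in enumerate(row):
            z = luminance(p) / 255.0 * max_depth  # black -> 0, white -> max_depth
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    tiny = [[(0, 0, 0), (255, 255, 255)],
            [(128, 128, 128), (255, 0, 0)]]
    for point in brightness_to_relief(tiny):
        print(point)
```

Run on a full HD frame, this would produce one point per pixel, 1920 × 1080 = 2,073,600 in total. A renderer (Processing, for instance) could then fly a camera through those points.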

He’s also a sales engineer who makes music.