As mentioned in my earlier sketch, my initial goal for the third project was to create an interactive performance combining several tools: Ableton Live, the Kinect, and Max/MSP. Since I was not familiar with any of them, the project became more of a learning experience, and I ended up creating a ‘demo’ rather than a ‘poem’.

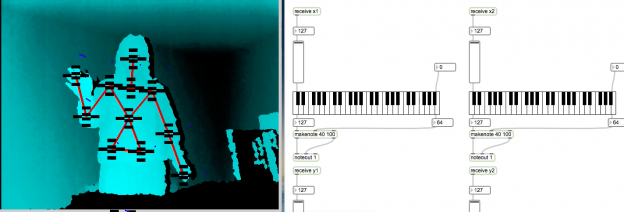

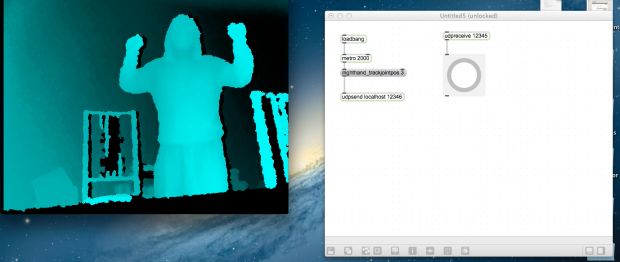

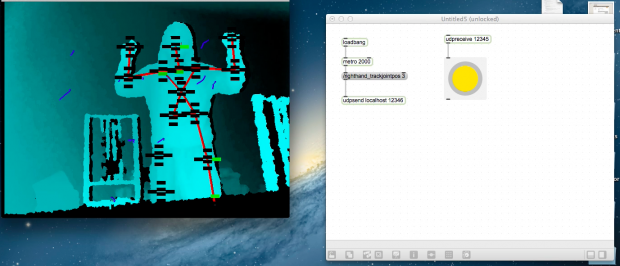

For this project, I used Synapse, an open-source application that sends input data from the Kinect to Ableton Live, Quartz Composer, and Max/MSP. I initially wanted to send the input data directly to Ableton Live, but along the way I became curious about Max/MSP. I thought it would be a useful skill to acquire for my final project, a tangible interactive art piece. In the end, I used Synapse to track the x and y positions of my right and left hands and send them to a custom Max patch that plays four different keyboards.
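The mapping from hand positions to the four keyboards lived in the Max patch, so the sketch below is only an illustration of one way such a mapping could work, written in Python rather than Max. It assumes hand coordinates have already been normalized to the range 0 to 1, and it assumes a hypothetical layout: the tracking area is split into four quadrants (one keyboard each), and horizontal position inside a quadrant picks one of twelve notes. The keyboard names, note range, and quadrant layout are all made up for the example.

```python
from dataclasses import dataclass

# Hypothetical layout: four quadrants, one keyboard per quadrant.
KEYBOARDS = ["keys_1", "keys_2", "keys_3", "keys_4"]  # hypothetical names
NOTES_PER_KEYBOARD = 12  # one octave per keyboard (assumption)
BASE_NOTE = 48           # MIDI note number for C3 (assumption)


@dataclass
class HandPosition:
    x: float  # assumed normalized: 0.0 = left edge, 1.0 = right edge
    y: float  # assumed normalized: 0.0 = top edge, 1.0 = bottom edge


def clamp(v: float, lo: float = 0.0, hi: float = 1.0) -> float:
    """Keep a coordinate inside the tracking area."""
    return max(lo, min(hi, v))


def hand_to_note(hand: HandPosition) -> tuple[str, int]:
    """Map a normalized hand position to (keyboard name, MIDI note)."""
    x, y = clamp(hand.x), clamp(hand.y)
    col = min(int(x * 2), 1)  # 0 = left half, 1 = right half
    row = min(int(y * 2), 1)  # 0 = top half, 1 = bottom half
    keyboard = row * 2 + col  # quadrant index 0..3
    # Horizontal position within the quadrant, rescaled to 0..1.
    local_x = x * 2 - col
    step = min(int(local_x * NOTES_PER_KEYBOARD), NOTES_PER_KEYBOARD - 1)
    return KEYBOARDS[keyboard], BASE_NOTE + step
```

Each hand can be passed through `hand_to_note` independently, so the left and right hands can sound notes on different keyboards at the same time. Clamping keeps a hand that drifts outside the tracked area on the nearest edge instead of producing an out-of-range note.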

It took me quite some time to figure out the basics of Max/MSP, and I wish I had made something more sophisticated with the tools I learned. However, by completing this project, I have become more familiar with these programs and with how to send data from one to another via OSC (Open Sound Control).
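To make the OSC step a little more concrete, here is a minimal standard-library Python sketch of how a single OSC message is laid out on the wire: a null-terminated address padded to a multiple of 4 bytes, a type-tag string (`,ff` for two floats) padded the same way, then each float as 4 big-endian bytes. The address `/hand/right` and port `7400` are hypothetical choices for illustration, not the messages Synapse actually sends.

```python
import socket
import struct


def _pad(b: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC requires."""
    b += b"\x00"
    return b + b"\x00" * (-len(b) % 4)


def encode_osc(address: str, *floats: float) -> bytes:
    """Build an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", v) for v in floats)
    return _pad(address.encode("ascii")) + _pad(type_tags.encode("ascii")) + payload


def _read_string(data: bytes, offset: int) -> tuple[str, int]:
    """Read a padded OSC string; return it and the offset just past its padding."""
    end = data.index(b"\x00", offset)
    s = data[offset:end].decode("ascii")
    length_with_null = end + 1 - offset
    return s, offset + length_with_null + (-length_with_null % 4)


def decode_osc(data: bytes) -> tuple[str, list[float]]:
    """Parse an OSC message that carries only float arguments."""
    address, offset = _read_string(data, 0)
    type_tags, offset = _read_string(data, offset)
    values = []
    for tag in type_tags[1:]:  # skip the leading ','
        if tag != "f":
            raise ValueError(f"unsupported OSC type tag: {tag}")
        (v,) = struct.unpack_from(">f", data, offset)
        values.append(v)
        offset += 4
    return address, values


def send_osc(message: bytes, host: str = "127.0.0.1", port: int = 7400) -> None:
    """Send one OSC message over UDP (the host and port are hypothetical)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(message, (host, port))
```

For example, `send_osc(encode_osc("/hand/right", 0.25, 0.75))` would send a right-hand position as two floats. On the Max side, a `udpreceive` object listening on the same port should be able to pick up such messages, though the port and address here are assumptions.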

https://vimeo.com/63060450