Code: https://github.com/Oddity007/3DAutomataPainting

As someone who does a lot of 3D modeling, I've always been frustrated with the tools available for texturing geometry. The traditional approach is to unwrap the 3D mesh into a flat 2D layout and paint on that, so that the pixels painted in 2D can be projected back onto the 3D surface. In more modern systems, a user can paint directly on the 3D mesh and the system automatically handles the 2D projection. This works well for the most part, but it starts to break down when one tries to create physically realistic scenes. Physically plausible scenes require extreme attention to detail in how different materials and surfaces relate to each other in 3D space, and painting every such detail by hand is far too time-consuming.

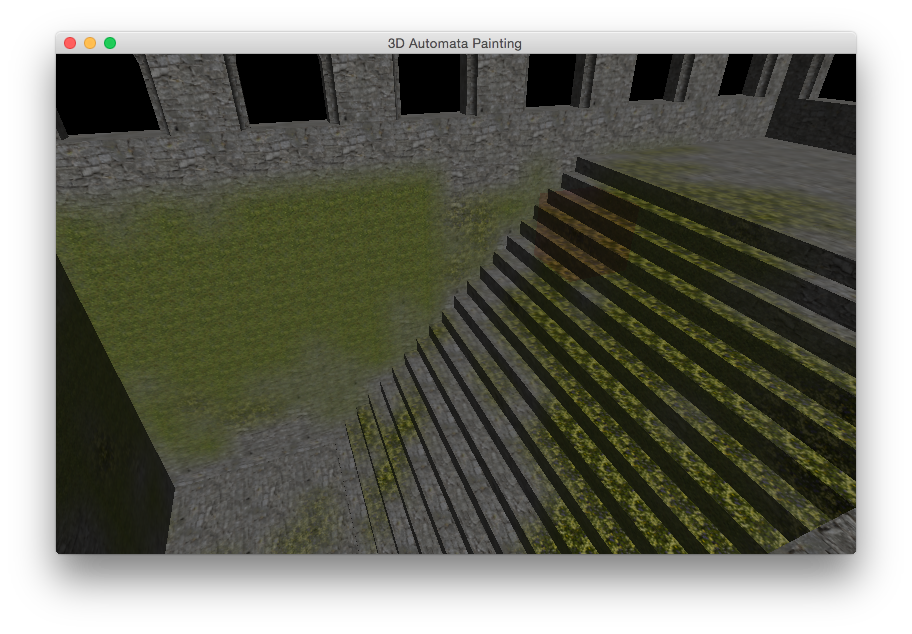

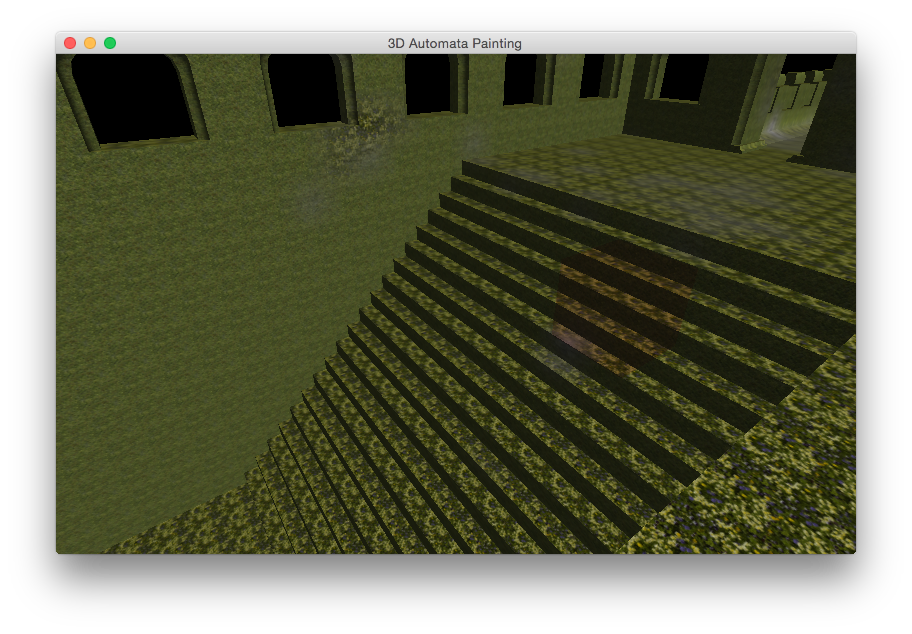

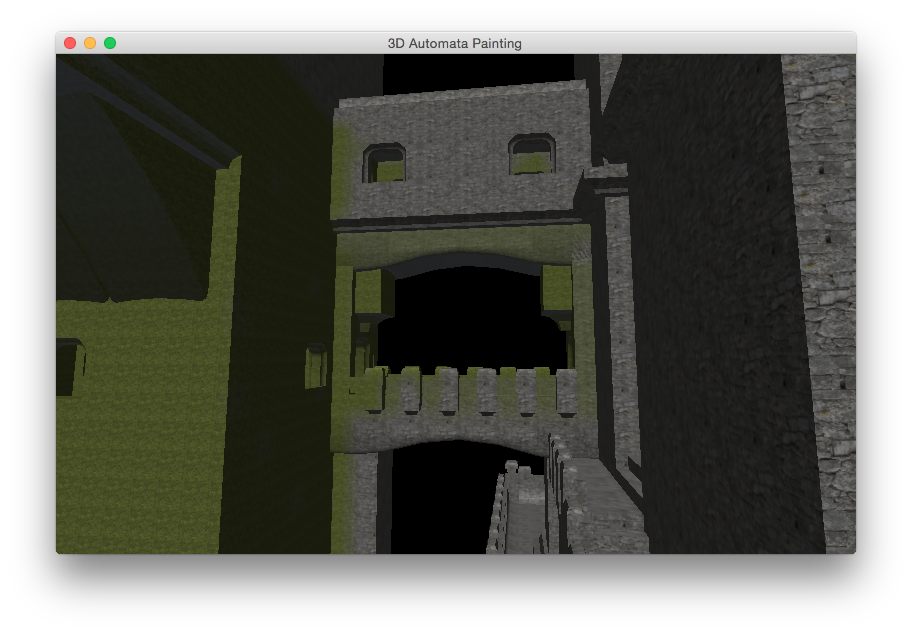

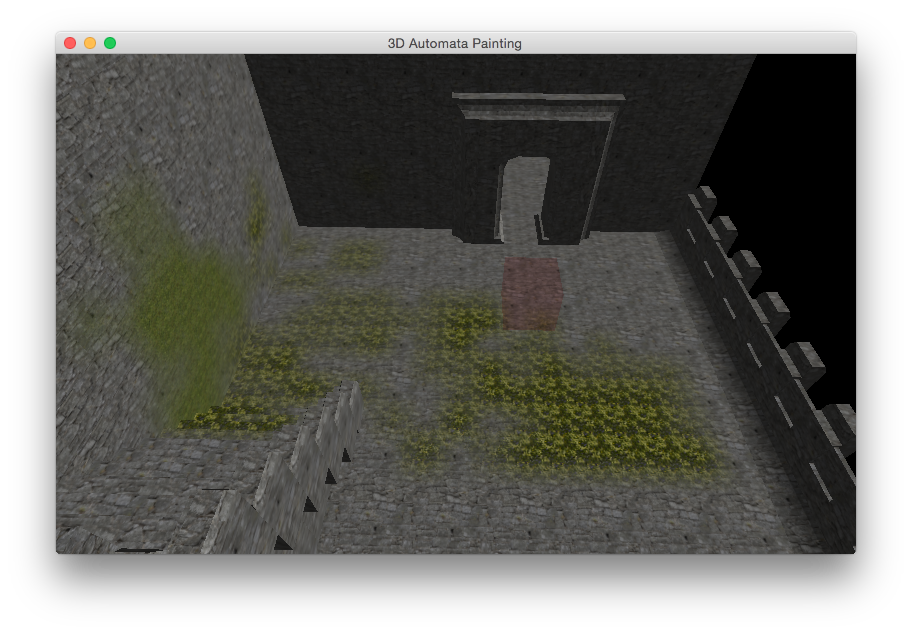

The goal of my drawing software was to provide a proof of concept for cellular-automata-assisted 3D painting. The idea is that an artist can program the physical rules they want the painting to follow, and the program evaluates those rules while the artist draws on the geometry.
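To make the idea of artist-programmed rules more concrete, here is a minimal sketch of what such a rule might look like. The `Material` values, the `Rule` signature, the `Grid` type and the moss-on-wet-stone rule are hypothetical illustrations, not the project's actual code; the sketch assumes each voxel holds one discrete material and that all voxels are updated synchronously from their six face neighbours.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <vector>

// Hypothetical material IDs for illustration only.
enum class Material : std::uint8_t { Empty, Stone, Water, Moss };

// A rule maps a cell's current material and its 6 face neighbours to its next material.
using Rule = std::function<Material(Material self, const std::array<Material, 6>& neighbours)>;

// Example artist-authored rule (assumed): stone touching water turns into moss.
Material mossRule(Material self, const std::array<Material, 6>& n) {
    if (self != Material::Stone) return self;
    for (Material m : n)
        if (m == Material::Water) return Material::Moss;
    return self;
}

struct Grid {
    int size;
    std::vector<Material> cells;
    explicit Grid(int s) : size(s), cells(static_cast<std::size_t>(s) * s * s, Material::Empty) {}

    // Out-of-range reads return Empty so rules see a well-defined boundary.
    Material at(int x, int y, int z) const {
        if (x < 0 || y < 0 || z < 0 || x >= size || y >= size || z >= size) return Material::Empty;
        return cells[(static_cast<std::size_t>(z) * size + y) * size + x];
    }
    void set(int x, int y, int z, Material m) {
        cells[(static_cast<std::size_t>(z) * size + y) * size + x] = m;
    }
};

// One synchronous step: every cell reads the old grid and writes into a copy.
Grid step(const Grid& g, const Rule& rule) {
    Grid next = g;
    for (int z = 0; z < g.size; ++z)
        for (int y = 0; y < g.size; ++y)
            for (int x = 0; x < g.size; ++x) {
                std::array<Material, 6> n = {
                    g.at(x - 1, y, z), g.at(x + 1, y, z),
                    g.at(x, y - 1, z), g.at(x, y + 1, z),
                    g.at(x, y, z - 1), g.at(x, y, z + 1)};
                next.set(x, y, z, rule(g.at(x, y, z), n));
            }
    return next;
}
```

Reading from the old grid and writing into a copy keeps the update order-independent, which is the usual convention for cellular automata; an in-place update would let a change ripple across the whole grid in a single step.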

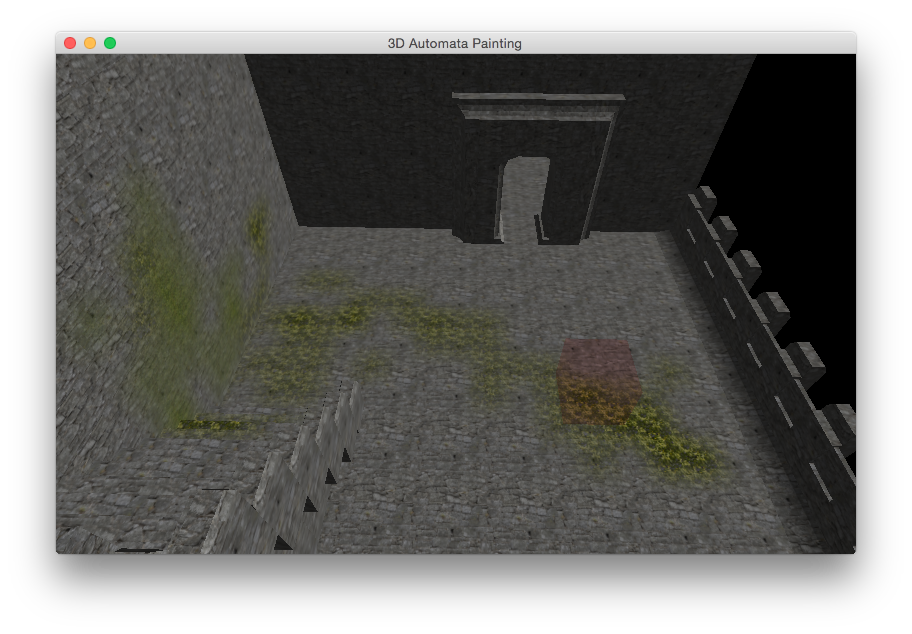

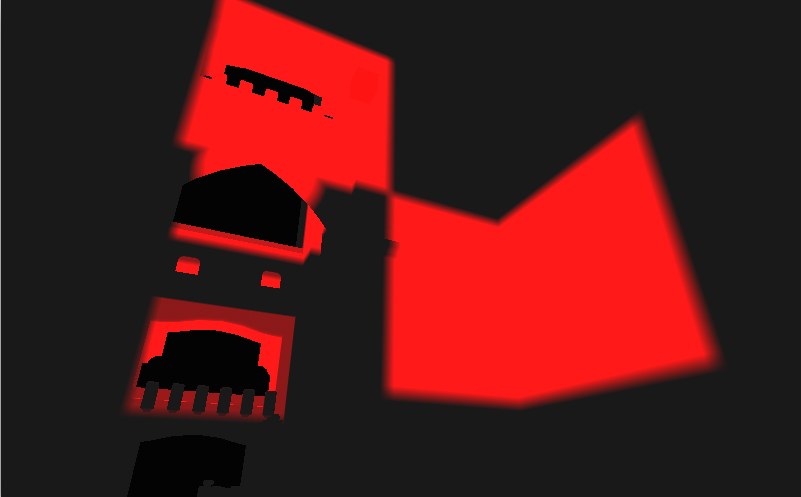

This software was written in C++ using OpenGL. The program evaluates the cellular automaton in a set of linked 16×16×16 chunks that are created as the user clicks. Here is a screenshot of a view I used for debugging the chunk system during development, in which every chunk that has been touched is filled entirely with red.
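A chunk system along these lines could be sketched roughly as follows. This is an illustration under assumptions rather than the project's implementation: it stores chunks in a hash map keyed by chunk coordinates (instead of, for example, explicit neighbour pointers between linked chunks), and the names `ChunkedWorld`, `floorDiv` and `floorMod` are hypothetical. Writing a cell creates its chunk on demand, while reading from untouched space returns empty without allocating anything.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <memory>
#include <unordered_map>

constexpr int kChunkSize = 16;  // matches the 16x16x16 chunks described above

struct ChunkCoord {
    int x, y, z;
    bool operator==(const ChunkCoord& o) const { return x == o.x && y == o.y && z == o.z; }
};

struct ChunkCoordHash {
    std::size_t operator()(const ChunkCoord& c) const {
        // Simple hash mix; adequate for a sketch.
        std::size_t h = std::hash<int>()(c.x);
        h = h * 31 + std::hash<int>()(c.y);
        h = h * 31 + std::hash<int>()(c.z);
        return h;
    }
};

struct Chunk {
    std::array<std::uint8_t, kChunkSize * kChunkSize * kChunkSize> cells{};  // 0 = empty
};

// Floor division/modulo so that world x = -1 maps to chunk -1, local index 15.
inline int floorDiv(int a, int b) { return (a >= 0) ? a / b : -((-a + b - 1) / b); }
inline int floorMod(int a, int b) { return a - floorDiv(a, b) * b; }

class ChunkedWorld {
public:
    // Reads any world cell; cells in chunks that don't exist yet read as 0.
    std::uint8_t get(int x, int y, int z) const {
        auto it = chunks_.find(chunkOf(x, y, z));
        if (it == chunks_.end()) return 0;
        return it->second->cells[localIndex(x, y, z)];
    }

    // Writing a cell (e.g. when the user clicks) creates its chunk on demand.
    void set(int x, int y, int z, std::uint8_t value) {
        auto& chunk = chunks_[chunkOf(x, y, z)];
        if (!chunk) chunk = std::make_unique<Chunk>();
        chunk->cells[localIndex(x, y, z)] = value;
    }

    std::size_t chunkCount() const { return chunks_.size(); }

private:
    static ChunkCoord chunkOf(int x, int y, int z) {
        return {floorDiv(x, kChunkSize), floorDiv(y, kChunkSize), floorDiv(z, kChunkSize)};
    }
    static std::size_t localIndex(int x, int y, int z) {
        int lx = floorMod(x, kChunkSize), ly = floorMod(y, kChunkSize), lz = floorMod(z, kChunkSize);
        return static_cast<std::size_t>((lz * kChunkSize + ly) * kChunkSize + lx);
    }
    std::unordered_map<ChunkCoord, std::unique_ptr<Chunk>, ChunkCoordHash> chunks_;
};
```

Because `get` resolves any world coordinate, an automaton step can read the neighbour of a cell at local x = 15 without special-casing chunk borders. The cost is a hash lookup per access, which a real implementation would probably avoid by caching pointers to neighbouring chunks.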

Due to time constraints, the cellular automaton currently only controls the blending of various materials on the surface of a 3D mesh, and the way these blended textures are rendered is far from the best method available.
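As a rough illustration of what controlling material blending could mean, the sketch below assumes each surface cell carries one non-negative weight per material and blends the materials' base colours by their normalised weights. `MaterialWeights`, `blendMaterials` and the three-material setup are hypothetical. In an OpenGL renderer this weighted average would more naturally run in a fragment shader, but it is written in plain C++ here so that it is self-contained.

```cpp
#include <array>
#include <cstddef>

constexpr std::size_t kMaterialCount = 3;  // hypothetical number of materials

struct Color { float r, g, b; };

// Per-cell state assumed for this sketch: one weight per material,
// as the automaton might leave them after a step.
using MaterialWeights = std::array<float, kMaterialCount>;

// Normalises the weights and returns the weighted average of the material colours.
// Falls back to the first material's colour if all weights are zero.
Color blendMaterials(const MaterialWeights& w, const std::array<Color, kMaterialCount>& colors) {
    float total = 0.0f;
    for (float x : w) total += x;
    if (total <= 0.0f) return colors[0];

    Color out{0.0f, 0.0f, 0.0f};
    for (std::size_t i = 0; i < kMaterialCount; ++i) {
        float t = w[i] / total;  // this material's share of the blend
        out.r += t * colors[i].r;
        out.g += t * colors[i].g;
        out.b += t * colors[i].b;
    }
    return out;
}
```

A plain linear blend like this tends to look smeared where materials meet; more sophisticated approaches (for example, blending driven by height or other per-material detail) generally give crisper transitions.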