UnityEssentials

LookingOutwards06

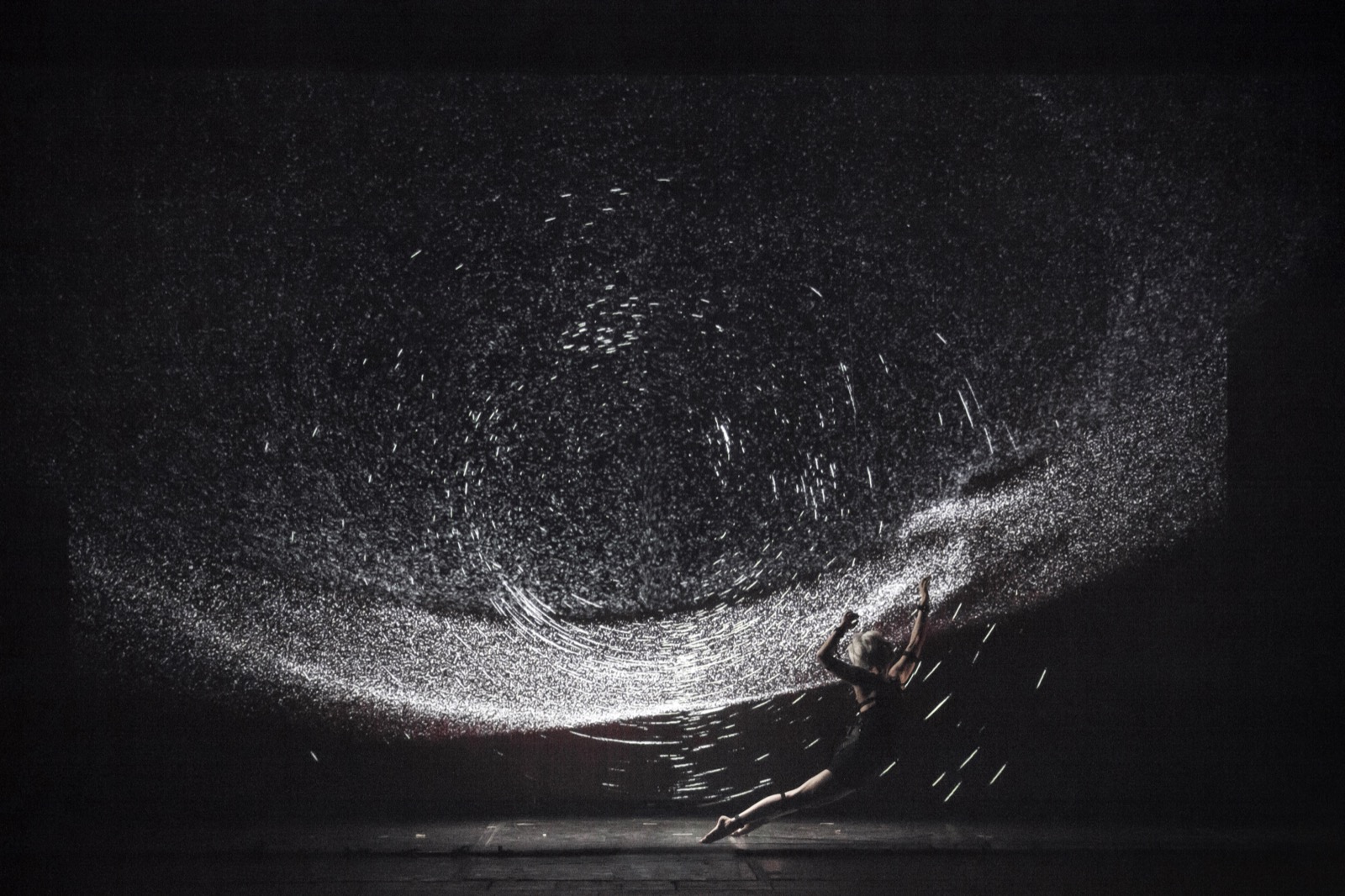

I like this work a lot because it’s already very expressive as a dance piece, but the new media enhancement adds so much to it that I could feel a sense of the sublime. When I thought about why I felt what I felt, I found a couple of elements that I can definitely learn from if I want to achieve something similar, even using just the movement of the human body. 1) A dark background (as its name suggests): here I think it represents endless time and space, and darkness in general conveys something mysterious and vast in scale. 2) Repetitive small elements (light dots or rays) that move in some obscure pattern; viewed against the dark background, they remind the viewer of the contrast between the grand scale of the background and the tiny lights. 3) Extending or scaling up the source information (body movement), such as extending the movements of the arms and legs with light rays. Doing so is like granting some divine power to the body in motion.

I really should’ve looked at this before doing my mocap project…

conye – Unity2

Some reminders for myself:

- Start function => void Start()

- Update function, called once every frame => void Update()

- Debug.Log

- Do-while loops, do {} while (), check the exit condition at the end, so the body always runs at least once

- A foreach loop is written “foreach (type item in arr)”

- string is lowercase

- If a variable is declared public, other scripts can edit it, and it shows up in the Inspector!

- Precedence when a variable is set in several places: Start/Update > Inspector > initial value in the class declaration

- Private is the default access modifier

- Access another class =>

- Awake is called before Start

- FixedUpdate for physics -> called at a regular, fixed interval

- Time between Update calls varies

- Get the time since the last frame with Time.deltaTime

- Vector3.Cross(VectorA, VectorB)

- To get a component of the GameObject my script is attached to: Type myThing = GetComponent<Type>();

- We can use the “enabled” property to toggle components on and off

- Use SetActive() to toggle whole GameObjects on and off

- https://unity3d.com/learn/tutorials/topics/scripting/activating-gameobjects?playlist=17117

- Multiplying by Time.deltaTime moves it in meters per second instead of meters per frame

- Vector3.forward and Vector3.up are shortcuts for (0, 0, 1) and (0, 1, 0)

- Input.GetKey(KeyCode.UpArrow); there’s also Input.GetButton => these return true or false depending on whether the key or button is pressed

- Input.GetAxis => returns a value in [-1, 1]

- Can detect mouse clicks on GUI elements or colliders => OnMouseDown()

- Rigidbody.AddForce()

- Destroy removes GameObjects or components, including ones the script isn’t attached to, and can take an optional time delay

- GetComponent is expensive

- subclass example =>

- Use Instantiate to duplicate prefabs

- helpful link for ref => https://unity3d.com/learn/tutorials/topics/scripting/instantiate?playlist=17117

- Invoke and InvokeRepeating(methodName, delay, repeatRate)
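Unity scripts are C#, where the usual pattern is `transform.Translate(Vector3.forward * speed * Time.deltaTime)` inside `Update()`. To see why the Time.deltaTime multiplication matters, here is a language-neutral simulation in JavaScript; `simulateMovement()` is a hypothetical helper, and a fixed frame rate stands in for Unity's variable one.

```javascript
// Hypothetical helper: moves an object forward for `seconds` of game time at a fixed frame rate.
// deltaTime stands in for Unity's Time.deltaTime (seconds since the previous frame).
function simulateMovement(fps, seconds, speed, useDeltaTime) {
  const deltaTime = 1 / fps;
  const frames = Math.round(fps * seconds);
  let position = 0;
  for (let frame = 0; frame < frames; frame++) {
    // Without deltaTime: `speed` units per frame. With it: `speed` units per second.
    position += useDeltaTime ? speed * deltaTime : speed;
  }
  return position;
}

console.log(simulateMovement(30, 1, 2, false)); // 60
console.log(simulateMovement(60, 1, 2, false)); // 120
console.log(simulateMovement(30, 1, 2, true));  // about 2
console.log(simulateMovement(60, 1, 2, true));  // about 2
```

Without deltaTime the distance doubles when the frame rate doubles; with it, both frame rates cover about 2 units in one second.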

Screenshots (one for each of the 28 lessons in the Beginner Scripting series)

tesh – LookingOutwards07

MIT Media Lab – Fluid Interfaces Group

Reality Editor is a web-based tool from MIT that uses AR interfaces and Logic Crafting to connect devices over the web and help control the physical world. These interfaces act both as inputs to the Logic Crafting system and as output displays for numerical values, search results, and other forms of data.

Something interesting about how the Reality Editor system uses AR is that it tries to minimize the amount of AR visual space that is dedicated to inputs and actually helps bring physical input devices into the digital world as a form of Augmented Reality.

We have naturally and intuitively used our hands to interact with the physical world around us for hundreds of years, and the Reality Editor system is trying to give back some of the physicality that has been somewhat lost amid our current overabundance of ambient tech devices and flashy, eye-catching screens and signage. The AR is mainly used only to help the user see which physical objects are connected and how they work together.

Reality Editor works using Open Hybrid and is actually available on the iOS App Store to mess with right now!

conye – UnityEssentials

Notes:

- Unity crashed every time I clicked on the main camera and made me want to remove myself and my wheezing computer from the world

- Something I learned from Unity crashing every 3 seconds: changes to the assets will remain even if you didn’t save

- Updated Unity to the newest version and everything is good again

- Remember to add colliders to everything so that things don’t start falling away; I wasted a lot of time thinking it was a glitch 🙁

- Note to adjust the quality settings

- Set lights to Mixed or Baked to optimize, if possible

- Don’t forget to turn on shadows or it looks weird

- Set non-dynamic (non-moving) objects to Static

- I chose colors I thought were more fUn than his, but his result was much better looking, so in the future I should probably decide on a ~ mood ~ and make a color scheme before making assets, because it’s a huge hassle to make a lot of changes at the end.

Hotkeys

- Hold V to vertex-snap

- Cmd D to duplicate

- Hold the right mouse button + WASD and Q/E to move around the Scene view

chapter 1

chapter 2

chapter 3

chapter 4

chapter 5

chapter 6

chapter 7

chapter 8

chapter 9

chapter 10

chapter 11

chapter 12

chapter 13

chapter 14!! it moves!! so exciting

chapter 15

farcar – Unity2

Unity Beginner Scripting Lessons

Lesson 05

Playing with global variables

Lesson 06

Do While loop

Lesson 08

Awake() and Start()

Lesson 09

Update() and FixedUpdate() runtimes

Lesson 11

Enabling lights by spacebar

Lesson 12

Activating objects by spacebar

Lesson 13

Moving objects and rotating them using arrow keys

Lesson 14

Having the camera track a moving object

Lesson 15

Destroying game objects on keyboard inputs

Lesson 19

Wall flies away on mouse down

Lesson 21

Moving objects linearly using Time.deltaTime

Lesson 23

I don’t know what went wrong…

Lesson 24

Did it again, this time using instances

Lesson 26

InvokeRepeating new objects every 2 seconds at random positions

Jackalope-UnityEssentials

Part 1

Part 2

Part 3

Part 4

Part 5

Part 6

Part 7

Part 8

Part 9

Part 10

Part 11

Part 12

Part 13

Part 14

Part 15

creatyde-LookingOutwards07

Aspect

By Draw & Code

Here is the video.

And below, a GIF of a neat moment:

Aspect is an app that allows users to point their phones at black-and-white drawings and make them move. Whereas many other AR apps focus on changing reality, Aspect lets content that is already fantastical, the content of whimsical drawings, become even more enchanting by allowing viewers to interact with it more fully.

When I look at this work, I wonder what the artists (who, I presume, drew the illustrations) imagined when they added animation to their pieces. From a more technical standpoint, how was the animation matched to the image? Could animations be generated from existing static images or photos (for example, seeing a man on a bike, the app would automatically know that the man should be riding the bike)? If so, many more static works could become interactive.

creatyde-Unity2

creatyde-UnityEssentials

Tutorial 1.1

Tutorial 1.2

Tutorial 1.3

Tutorial 2.1

Tutorial 2.2

Tutorial 2.3

Tutorial 2.4

Tutorial 3.1

Tutorial 3.2

Tutorial 3.3

Tutorial 3.4

Tutorial 3.5

Tutorial 4.1

Tutorial 4.2

Tutorial 4.3

Tutorial 4.4

Tutorial 5.1

Tutorial 5.2

Tutorial 5.3

Tutorial 5.4

Tutorial 6.1

Tutorial 6.2

Tutorial 6.3

Tutorial 7.1

Tutorial 7.2

Tutorial 7.3

Tutorial 7.4

Tutorial 7.5

Tutorial 8.1

Tutorial 8.2

Tutorial 8.3

Tutorial 9.1

Tutorial 9.2

Tutorial 9.3

Tutorial 9.4

Tutorial 10.1

Tutorial 10.2

Tutorial 10.3

Tutorial 10.4

Tutorial 11.1

Tutorial 11.2

Tutorial 11.3

Tutorial 11.4

Tutorial 11.5

Tutorial 12.1

Tutorial 12.2

Tutorial 12.3

Tutorial 12.4

Tutorial 13.1

Tutorial 13.2

Tutorial 13.3

Tutorial 13.4

Tutorial 14.1

Tutorial 14.2

Tutorial 14.3

Tutorial 14.4

Tutorial 15.1

Tutorial 15.2

Tutorial 15.3

phiaq – LookingOutwards07

Bouquet – Synesthetic olfactory device to detect color through fragrance

Bouquet is a device that releases a fragrance associated with a color as it passes over images of different colors. The device is an extension of the olfactory system and delivers a version of augmented reality. The cone-shaped device has an optical sensor built into its tip that recognizes different colors using an Arduino. Inside the bottom of the cone is a disc driven by a stepper motor that rotates pads with scents, such as a strawberry-soaked cotton pad. ECAL’s Bachelor Media and Interaction Design students created color posters that people can experience the device with. I enjoy this project because smell is uncommon in art projects, and having an olfactory component with digital inputs is pretty delightful. I also think it doesn’t necessarily have to be digital: the paper itself could emit fragrances physically.

dechoes – UnityEssentials

Essentials Training

Part 1

Part 2

Part 3

Part 4

Part 5

Part 6

Part 7

Part 8

Part 9

Part 10

Part 11

Part 12

Part 13

Part 14

Part 15

dechoes – LookingOutwards06

Mirror Fugue

I was interested in doing a Looking Outwards on this project because I had a very similar idea a couple of months back. I have always found self-playing pianos to be unintentionally creepy, which could be a big asset if spun the right way.

In MirrorFugue, the artists created an installation in which recorded videos of pianists playing are projected back onto the glossy surface of the instrument as it self-plays the same piece. The fingers look like they are moving the keys, and the pianists’ expressions add an emotional quality to the piece that would not be there without the image.

Although this piece was not actually interactive, I think it could have been. They could have used a real-time video feed from a different location, or rigged a pre-made 3D model to different piano pieces, etc. I think the piece is effective in the context of memory analysis and emotional study, but it may lack complexity.

Here is a link to the MIT Tangible Media webpage.

ookey-LookingOutwards06

Ei Wada often uses obsolete tech to create his art pieces, which frequently involve sound. For his piece Braun Tube Jazz Band, he takes advantage of the electromagnetic properties of CRT TVs, turning his body into an antenna so that he can play the screens like drums with his hands. I found that this allowed him to use his body and gesture in a way that directly affected the sound. I appreciate that it wasn’t just software approximating his body but, rather, a direct result of his movements. Ei Wada also often uses his art to bring attention to e-waste, which I really respect. I find e-waste a really interesting topic in modern art, one that new media artists can both contribute to and fight against.

Ei Wada has also collaborated with Daito Manabe, the artist Zbeok featured.

farcar – LookingOutwards07

“A Moment In Time” is Southern California’s first augmented reality art show, held nearly 6 years ago. Viewers hold their devices up to the artwork to see an extended view of it. While traditional art is bounded by the size of its canvas, augmented reality lets the canvas be extended by adding an extra dimension, time, to the piece.

The gallery works by placing images around the environment that act like QR codes. Using the Roark Studio App, viewers scan the images with their devices to see the associated film sequence projected onto the original image. Because the images act like QR codes, anyone anywhere can load the augmented reality as long as they have a printout of the image and the app installed. The developers hope this approach makes their artwork more viewable.

“A Moment In Time” is significant to the artistic environment of augmented reality by merging contemporary galleries with evolving technologies to demonstrate where art galleries, and art itself, may be going.

tyvan – Mocap

While learning about professional motion capture rigs during our lecture, I noted the high-level technique: reflective trackers are placed on the body so that an IR camera setup can track them in space. Software finds the high-value (bright) IR areas of the image, converts those small areas into track points, aligns spatially similar dots across cameras, and turns the result into a 3D model. I wanted to experiment with the first and final steps of that process, converting value and light directly to 3D. The software for this project is edited from the “MeshTweening” example in Processing.
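The "find bright areas and turn them into track points" step can be illustrated with a toy JavaScript sketch: threshold a grayscale image and take the centroid of each connected bright region. This is a simplification under stated assumptions (a hypothetical `findMarkerCentroids()` helper, one fixed threshold, 4-connected regions), not how any particular commercial mocap system works internally.

```javascript
// Toy marker detection: image is a 2D array of brightness values (rows of numbers).
// Returns one {x, y} centroid per 4-connected region brighter than `threshold`.
function findMarkerCentroids(image, threshold) {
  const h = image.length;
  const w = h > 0 ? image[0].length : 0;
  const seen = image.map((row) => row.map(() => false));
  const centroids = [];
  for (let y = 0; y < h; y++) {
    for (let x = 0; x < w; x++) {
      if (seen[y][x] || image[y][x] <= threshold) continue;
      // Flood-fill this blob with an explicit stack, summing its pixel coordinates.
      const stack = [[x, y]];
      seen[y][x] = true;
      let sumX = 0, sumY = 0, count = 0;
      while (stack.length > 0) {
        const [cx, cy] = stack.pop();
        sumX += cx; sumY += cy; count++;
        for (const [nx, ny] of [[cx + 1, cy], [cx - 1, cy], [cx, cy + 1], [cx, cy - 1]]) {
          if (nx >= 0 && nx < w && ny >= 0 && ny < h && !seen[ny][nx] && image[ny][nx] > threshold) {
            seen[ny][nx] = true;
            stack.push([nx, ny]);
          }
        }
      }
      centroids.push({ x: sumX / count, y: sumY / count }); // one track point per blob
    }
  }
  return centroids;
}
```

A 2x2 bright patch and a single bright pixel come back as two track points, one at the center of the patch.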

Process:

1. Instead of the project being solely about form and shadow, explicit markers were screen printed onto the model with shiny black ink, then photographed.

2. The backgrounds of the images were removed by painting pure green (a green value of 255) over those areas.

3. The Processing app was edited from the “MeshTweening” example to create a desirable outcome. Placement on the Z-axis was defined by the value of the represented pixel.

Although the code is interactive, and at some points animated, the final piece is static imagery.
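The green-screen and value-to-depth steps in items 2 and 3 can be sketched for a single column of pixels. This JavaScript version is a simplification of the Processing code: the hypothetical `columnToSegments()` helper returns depth samples instead of building PShape quads, but it applies the same rules (grayscale average as Z, and a pixel with green exactly 255 breaks the column into a new segment, like the `endShape()`/`beginShape()` pair).

```javascript
// column: array of [r, g, b] pixels from top to bottom; cellSize: spacing in pixels (d = 5 in the sketch).
// Returns arrays of {y, z} samples; each array is one unbroken vertical strip.
function columnToSegments(column, cellSize) {
  const segments = [];
  let current = [];
  column.forEach(([r, g, b], row) => {
    if (g === 255) {
      // Keyed-out background. Note this also drops pure white, whose green is 255 too.
      if (current.length > 0) segments.push(current);
      current = [];
    } else {
      const value = (r + g + b) / 3; // grayscale average becomes the Z offset
      current.push({ y: row * cellSize, z: value });
    }
  });
  if (current.length > 0) segments.push(current);
  return segments;
}
```

Because the filter only checks the green channel, bright white highlights on the model are removed along with the green background, which may be worth keeping in mind when photographing shiny ink.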

```processing
// Use of custom vertex attributes.
// Inspired by
// http://pyopengl.sourceforge.net/context/tutorials/shader_4.html

// Sets up Shader, Shape, and Values for Z-axis
PShader sh;
PShape grid[] = new PShape[600];
PImage img;
float imgValues[];

void setup() {
  size(800, 533, P3D);
  // OpenGL Shader tools
  sh = loadShader("frag.glsl", "vert.glsl");
  shader(sh);
  // Image to render
  img = loadImage("________");
  img.loadPixels();
  int d = 5; // Line density unit
  for (int x = 0; x < img.width; x += d) {
    int i = x/d;
    grid[i] = createShape();
    // 'beginShape' here allows vertical lines, vs. a continuous surface
    grid[i].beginShape();
    grid[i].noStroke();
    for (int y = 0; y < img.height; y += d) {
      // Convert to grayscale
      color colo = img.pixels[y * img.width + x];
      fill(colo);
      float colorR = red(colo);
      float colorG = green(colo);
      float colorB = blue(colo);
      float value = (colorR + colorG + colorB)/3;
      // Green filter for input image: start a new strip where the background was painted out
      if (colorG == 255) {
        grid[i].endShape();
        grid[i].beginShape();
      } else {
        // Draw image in 3D space as squares in columns; "tweened" holds the Z-displaced position
        grid[i].fill(255);
        grid[i].attribPosition("tweened", x, y, value);
        grid[i].vertex(x, y, 0);
        grid[i].fill(255);
        grid[i].attribPosition("tweened", x + d, y, value);
        grid[i].vertex(x + d, y, 0);
        grid[i].fill(255);
        grid[i].attribPosition("tweened", x + d, y + d, value);
        grid[i].vertex(x + d, y + d, 0);
        grid[i].fill(0);
        grid[i].attribPosition("tweened", x, y + d, value);
        grid[i].vertex(x, y + d, 0);
      }
    }
    grid[i].endShape();
  }
}

void draw() {
  background(255);
  int size = img.width/5;
  // Allows for adjustment of perspective and composition
  pushMatrix();
  translate(width/2, 0, 0);
  rotateX(mouseY*.005);
  rotateY(mouseX*.005);
  translate(0-width/2, 0, 0);
  sh.set("tween", map(300, 0, width, 0, 1));
  // Draw Shapes
  for (int i = 0; i < size; i++) {
    shape(grid[i]);
  }
  popMatrix();
}
```

ookey-Mocap

For my piece, I knew I wanted to make an “instrument” controlled by a hand. Rather than typical sounds, I wanted to create glitchy noises inspired by Ryoji Ikeda or this piece by Tomoki Kanda. I have really weirdly shaped, “glitchy” fingers, so I found it fitting. Creating interesting glitch sounds was actually what I had to experiment with the most. In the end, I like the sounds that are produced. However, I wish it were clearer how the hand triggers them, or that the sound were more responsive to the actions the hand takes. The element that I think came through most clearly is how the height of the hand affects the tone through filtering. The triggering of notes is less clear and has more errors. I originally wanted to do something with machine learning for Max, and I might pursue this further in the future.
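The height-to-filter mapping is the part that reads most clearly, so here is one possible way to build such a mapping. This is a hypothetical JavaScript sketch, not the actual Max patch: the `heightToCutoff()` name, the 200 to 8000 Hz range, and the exponential curve are all assumptions.

```javascript
// Hypothetical mapping from normalized hand height (0 = lowest, 1 = highest)
// to a low-pass filter cutoff in Hz. Exponential, so equal hand movements
// multiply the cutoff by equal ratios instead of adding equal numbers of Hz.
function heightToCutoff(height, minHz = 200, maxHz = 8000) {
  const h = Math.min(1, Math.max(0, height)); // clamp noisy tracking values
  return minHz * Math.pow(maxHz / minHz, h);
}
```

At half height this gives the geometric mean of the range (about 1265 Hz), rather than the linear midpoint of 4100 Hz, which tends to make a sweep sound more even.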

gif of code running:

sketch:

code under the read-more (it’s not the most readable, so you can also download the patch here)


dechoes – Mocap

Veggie Party

For this project, I really wanted to use OpenPose, a brand-new, off-the-shelf piece of software developed at CMU’s School of Computer Science. Unlike RGBD recordings, OpenPose can extract skeletons from already-existing 2D videos, and it saves all of the body coordinates to a JSON file.
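The layout of the pose file can be read off the `SceneSequence` constructor in the code further down: an array whose first element holds `numScenes`, `poseModel`, and one object per scene keyed `"0"`, `"1"`, and so on, each holding `numPoses` and poses keyed the same way. The sketch below parses that layout in JavaScript. It is inferred from the Processing code (the file it loads is `dance2.json`), not from OpenPose's documentation, and `loadScenes()` is a hypothetical name.

```javascript
// Hypothetical loader mirroring the SceneSequence constructor.
function loadScenes(baseJSON) {
  const base = baseJSON[0];
  const scenes = [];
  for (let s = 0; s < base.numScenes; s++) {
    const scene = base[String(s)]; // scenes are keyed "0", "1", ...
    const poses = [];
    for (let p = 0; p < scene.numPoses; p++) {
      poses.push(scene[String(p)]); // each pose maps joint names to [x, y]
    }
    scenes.push(poses);
  }
  return { poseModel: base.poseModel, scenes };
}

// Tiny hand-made example in that layout (not real OpenPose output)
const example = [{
  numScenes: 2, poseModel: "COCO", imageWidth: 1280, imageHeight: 720,
  "0": { numPoses: 1, "0": { nose: [640, 200], neck: [640, 260] } },
  "1": { numPoses: 0 },
}];
console.log(loadScenes(example).scenes.length); // 2
```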

I started this project with some very large scale ideas, which I quickly realized were not achievable in a couple days. I finally settled on the idea of a Veggie Party, where each character was made of a different vegetable, mapped to its limbs.

In the final outcome, there was no specific reason to use OpenPose, since the movement is quite mundane. I had originally wanted to use an existing video of people peeling or cutting vegetables (which would have generated a never-ending cycle of veggies peeling and eating other veggies), but I could not find that specific source material. Additionally, the OpenPose data is still extremely flawed, but I decided to just roll with it and embrace the experimental glitch.
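Mapping a vegetable onto a limb comes down to one scale and one rotation per pair of joints, the same math as the limb-drawing functions (such as `drawCarrotOnLimb()`) in the code below. Here it is isolated in JavaScript; `limbTransform()` is a hypothetical name. Like `connectJoints()`, it skips limbs where any coordinate is 0, which is how the code treats joints the pose data failed to detect.

```javascript
// Transform that stretches a vertical sprite (its long axis pointing down +y)
// from joint (x0, y0) to joint (x1, y1). Returns null if a joint is missing.
function limbTransform(x0, y0, x1, y1, spriteHeight) {
  if (x0 === 0 || y0 === 0 || x1 === 0 || y1 === 0) return null;
  const limbLength = Math.hypot(x1 - x0, y1 - y0);
  return {
    translate: [x0, y0],
    scale: limbLength / spriteHeight, // sprite height -> limb length
    // atan2 gives the limb's angle; subtract a quarter turn because the sprite points along +y
    rotation: Math.atan2(y1 - y0, x1 - x0) - Math.PI / 2,
  };
}
```

A limb pointing straight down needs no rotation, and a 100-pixel limb covered by a 50-pixel-tall sprite needs a scale of 2.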

I can’t say I am particularly psyched about this outcome; however, I am excited to have been exposed to MoCap technology and am already thinking about larger-scale projects I will be able to produce in the next few weeks.

Video:

Preliminary Notes:

Background tries:

Code:

```processing
PImage carrot;
PImage asparagus;
PImage corn;
PImage kale;
PImage aubergine;
PImage broccoli;
PImage meat;

class SceneSequence {
  int sceneIndex = 0;
  int numScenes;
  int imageWidth, imageHeight;
  String poseModel;
  JSONObject[][] poses;

  public SceneSequence(JSONArray baseJSON) {
    JSONObject baseObj = baseJSON.getJSONObject(0);
    numScenes = baseObj.getInt("numScenes");
    poses = new JSONObject[numScenes][];
    imageWidth = baseObj.getInt("imageWidth");
    imageHeight = baseObj.getInt("imageHeight");
    poseModel = baseObj.getString("poseModel");
    // Scenes and poses are stored as objects keyed "0", "1", ...
    for (int sceneIndex = 0; sceneIndex < numScenes; sceneIndex++) {
      JSONObject scene = baseObj.getJSONObject("" + sceneIndex);
      int numPoses = scene.getInt("numPoses");
      poses[sceneIndex] = new JSONObject[numPoses];
      for (int poseIndex = 0; poseIndex < numPoses; poseIndex++) {
        poses[sceneIndex][poseIndex] = scene.getJSONObject("" + poseIndex);
      }
    }
  }

  // Draws every pose in the current scene, then advances (and loops) the sequence
  public void drawFrame() {
    JSONObject[] scene = poses[sceneIndex];
    for (int i = 0; i < scene.length; i++) {
      JSONObject pose = scene[i];
      println(poseModel);
      if (poseModel.equals("COCO")) drawCOCOPose(pose);
      else drawMPIPose(pose);
    }
    sceneIndex++;
    if (sceneIndex >= numScenes) sceneIndex = 0;
  }
}

void connectJoints(JSONObject pose, String joint0, String joint1) {
  JSONArray pt0 = pose.getJSONArray(joint0);
  JSONArray pt1 = pose.getJSONArray(joint1);
  float x0 = pt0.getFloat(0);
  float y0 = pt0.getFloat(1);
  float x1 = pt1.getFloat(0);
  float y1 = pt1.getFloat(1);
  // Offset copies of the limb for the four other vegetable dancers
  float x2 = x0 + 200, y2 = y0 - 70, x3 = x1 + 200, y3 = y1 - 70;
  float x4 = x0 - 200, y4 = y0 - 70, x5 = x1 - 200, y5 = y1 - 70;
  float x6 = x0 - 350, y6 = y0 - 120, x7 = x1 - 350, y7 = y1 - 120;
  float x8 = x0 + 350, y8 = y0 - 120, x9 = x1 + 350, y9 = y1 - 120;
  // Skip limbs with a missing joint (a coordinate of 0)
  if ((x0 != 0) && (y0 != 0) && (x1 != 0) && (y1 != 0)) {
    // line(x0, y0, x1, y1);
    drawCarrotOnLimb(x0, y0, x1, y1);
    drawAsparagusOnLimb(x2, y2, x3, y3);
    drawAubergineOnLimb(x4, y4, x5, y5);
    drawBroccoliOnLimb(x6, y6, x7, y7);
    drawCornOnLimb(x8, y8, x9, y9);
  }
}

void drawMPIPose(JSONObject pose) {
  connectJoints(pose, "head", "neck");
  connectJoints(pose, "neck", "left_shoulder");
  connectJoints(pose, "neck", "right_shoulder");
  connectJoints(pose, "neck", "left_hip");
  connectJoints(pose, "neck", "right_hip");
  connectJoints(pose, "left_shoulder", "left_elbow");
  connectJoints(pose, "left_elbow", "left_hand");
  connectJoints(pose, "right_shoulder", "right_elbow");
  connectJoints(pose, "right_elbow", "right_hand");
  connectJoints(pose, "left_hip", "left_knee");
  connectJoints(pose, "left_knee", "left_foot");
  connectJoints(pose, "right_hip", "right_knee");
  connectJoints(pose, "right_knee", "right_foot");
}

void drawCOCOPose(JSONObject pose) {
  connectJoints(pose, "left_eye", "nose");
  connectJoints(pose, "right_eye", "nose");
  connectJoints(pose, "left_ear", "left_eye");
  connectJoints(pose, "right_ear", "right_eye");
  connectJoints(pose, "nose", "neck");
  connectJoints(pose, "neck", "left_shoulder");
  connectJoints(pose, "left_shoulder", "left_elbow");
  connectJoints(pose, "left_elbow", "left_wrist");
  connectJoints(pose, "neck", "right_shoulder");
  connectJoints(pose, "right_shoulder", "right_elbow");
  connectJoints(pose, "right_elbow", "right_wrist");
  connectJoints(pose, "neck", "left_hip");
  connectJoints(pose, "left_hip", "left_knee");
  connectJoints(pose, "left_knee", "left_foot");
  connectJoints(pose, "neck", "right_hip");
  connectJoints(pose, "right_hip", "right_knee");
  connectJoints(pose, "right_knee", "right_foot");
}

JSONArray baseJSON;
SceneSequence sequence;

void setup() {
  size(1280, 740);
  stroke(255);
  baseJSON = loadJSONArray("dance2.json");
  sequence = new SceneSequence(baseJSON);
  carrot = loadImage("data/Carrot.png");
  asparagus = loadImage("data/Asparagus.png");
  corn = loadImage("data/Corn.png");
  kale = loadImage("data/Kale.png");
  aubergine = loadImage("data/Aubergine.png");
  broccoli = loadImage("data/Broccoli.png");
  meat = loadImage("data/meat.jpg");
}

void draw() {
  background(0);
  //drawBackground();
  //spotlight();
  sequence.drawFrame();
  fill(255);
  //text(frameCount, mouseX, mouseY);
}

// Stretches and rotates a vertical sprite so that it spans from (x0, y0) to (x1, y1)
void drawImageOnLimb(PImage sprite, float x0, float y0, float x1, float y1) {
  float cw = sprite.width;
  float ch = sprite.height;
  float ratio = dist(x0, y0, x1, y1) / ch;  // sprite height -> limb length
  float orientation = atan2(y1 - y0, x1 - x0);
  pushMatrix();
  translate(x0, y0);
  scale(ratio, ratio);
  rotate(orientation - HALF_PI);  // the sprite's long axis points down +y
  translate(0-cw/2, 0);           // center the sprite on the limb
  image(sprite, 0, 0);
  popMatrix();
}

void drawCarrotOnLimb(float x0, float y0, float x1, float y1) { drawImageOnLimb(carrot, x0, y0, x1, y1); }
void drawAsparagusOnLimb(float x0, float y0, float x1, float y1) { drawImageOnLimb(asparagus, x0, y0, x1, y1); }
void drawAubergineOnLimb(float x0, float y0, float x1, float y1) { drawImageOnLimb(aubergine, x0, y0, x1, y1); }
void drawBroccoliOnLimb(float x0, float y0, float x1, float y1) { drawImageOnLimb(broccoli, x0, y0, x1, y1); }
void drawCornOnLimb(float x0, float y0, float x1, float y1) { drawImageOnLimb(corn, x0, y0, x1, y1); }

void drawBackground() {
  image(meat, 0, 0);
  pushMatrix();
  fill(0, 0, 0, 100);
  rect(0, 0, 1280, 740);
  popMatrix();
}

void spotlight() {
  noStroke();
  fill(10, 10, 10);
  rect(0, 400, width, 500);
  fill(255, 249, 89, 100);
  arc(width/2, 650, 1100, 120, 0, PI, OPEN);
  triangle(width/2, -150, width/2 - 550, 650, width/2 + 550, 650);
}
```

joxin-Mocap

“Spider”

I converted a human skeleton to a monster.

When I first started this project, I drew a lot of skeletons in my sketchbook. I liked how simple they looked, and I found them to be a powerful abstraction of the way we move. Many living creatures have skeletons, so I thought: what if I try to create a new animal from human skeletons?

I used walking-skeleton data from CMU’s Mocap database. The raw data came in formats I couldn’t use (e.g., amc, mpg), but luckily someone had converted all of it to BVH and posted it online, and it can be accessed here.

I loaded the walking human skeleton data into Three.js. Starting with the template provided by Golan, I converted every bone position from the human to the “spider.” The concept was simple, but the process was very strenuous. I spent a lot of time figuring out how geometry and materials work in Three.js. To make the spider legs look like lines with thickness, I found a library called MeshLine. It was horribly documented, but I eventually made it work. The conversion took a lot of fine-tuning. The head and torso are mapped directly; the tough part was converting the human’s arms and legs into the four “spider” legs. The process was a combination of very deliberate mapping and trial and error.

I also took special care to design a monster skeleton that makes structural and physiological sense. Biological bodies have balance: if the legs are too thin for a huge body, or the head sits too far forward, the entire body will probably look weird and uncomfortable. Thus, in the mapping process, I modeled the body in a balanced way while maintaining the original relationships between the different bone positions in the human.
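Two small pieces of math carry much of the mapping and MeshLine styling in the code below, isolated here in JavaScript. `parabola()` is the width profile from the code: 0 at both ends of a line and 1 in the middle, so `p => 2 * parabola(p, 1)` draws a leg that bulges in the middle and tapers at the joints. `remap()` is a hypothetical helper showing the general shape of the hand-tuned linear formulas (such as the one for `newY`) that move a human coordinate into the spider's range; `sampleWidths()` is likewise hypothetical.

```javascript
// Width profile used for the MeshLine legs: 0 at x = 0 and x = 1, peak of 1 at x = 0.5.
function parabola(x, k) {
  return Math.pow(4 * x * (1 - x), k);
}

// Hypothetical linear remap (like Processing's map()): inMin..inMax -> outMin..outMax.
function remap(v, inMin, inMax, outMin, outMax) {
  return ((v - inMin) / (inMax - inMin)) * (outMax - outMin) + outMin;
}

// Sample the width callback p => maxWidth * parabola(p, 1) at n + 1 evenly spaced points.
function sampleWidths(n, maxWidth) {
  const widths = [];
  for (let i = 0; i <= n; i++) widths.push(maxWidth * parabola(i / n, 1));
  return widths;
}

console.log(sampleWidths(4, 2)); // [ 0, 1.5, 2, 1.5, 0 ]
console.log(remap(20, 10, 30, 0, 100)); // 50
```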

For me, the project was mainly a technical lesson. It was great practice for understanding Three.js. I am still working through the learning curve, but I am already very impressed by how powerful and flexible this code environment is. Every little task requires quite a bit of digging, but the result is usually very rewarding.

I like the way the monster (spider or scorpion?) looks, but it is definitely very preliminary. I hope to continue working on it and potentially extend it into a final project for this class. Currently the materials and environments are both extremely simple. I am hoping to use shaders to make the monster look more complex and interesting. When it walks side by side with the human, their movements are visually in sync, as I intended. I used red because I wanted to highlight the fun nature of this conversion. I wasn’t trying to make a scary monster. I made a monster that is the human’s twin.

Special thanks to tesh for helping me through the tough learning curve of Three.js when I first started this project <3

var clock = new THREE.Clock();
var camera, controls, scene, renderer;
var mixer, skeletonHelper;

// Width profile for MeshLine: 0 at both ends, peak of 1 in the middle
var parabola = function( x, k ) {
  return Math.pow( 4 * x * ( 1 - x ), k );
}

init();
animate();

var cubes;
// var left_spider_thigh;
// var left_spider_calf;
// var left_spider_thigh_a;
// var left_spider_calf_a;
// var right_spider_thigh;
// var right_spider_calf;
// var right_spider_thigh_a;
// var right_spider_calf_a;
var human_torso;
var human_head;
var human_left_leg;
var human_right_leg;
var human_left_arm;
var human_right_arm;
var spider_body;
var spider_head;
var spider_left_leg;
var spider_right_leg;
var spider_left_arm;
var spider_right_arm;
var spider_neck;
var human_shift = -200;
var spider_shift = 60;

var loader = new THREE.BVHLoader();
loader.load("bvh/07_08.bvh", function( result ) {
  skeletonHelper = new THREE.SkeletonHelper( result.skeleton.bones[ 0 ] );
  // og_skeletonHelper = new THREE.SkeletonHelper( result.skeleton.bones[ 0 ] );
  skeletonHelper.skeleton = result.skeleton; // allow animation mixer to bind to SkeletonHelper directly
  // og_skeletonHelper.skeleton = result.skeleton; // allow animation mixer to bind to SkeletonHelper directly
  var material = new MeshLineMaterial({
    color: new THREE.Color(0xff0000),
    side: THREE.DoubleSide,
  });
  skeletonHelper.material = material;
  //scene.add( skeletonHelper );
  // play animation
  mixer = new THREE.AnimationMixer( skeletonHelper );
  mixer.clipAction( result.clip ).setEffectiveWeight( 2.0 ).play();
} );

function init() {
  camera = new THREE.PerspectiveCamera( 50, window.innerWidth / window.innerHeight, 1, 1000 );
  camera.position.set( 0, 100, 400 );
  controls = new THREE.OrbitControls( camera );
  controls.minDistance = 300;
  controls.maxDistance = 700;
  scene = new THREE.Scene();
  scene.add( new THREE.GridHelper( 500, 20 ) );

  cubes = [];
  for (var i = 0; i < 21; i++) {
    var geometry = new THREE.SphereGeometry( 1, 30, 30, 0, 6.3, 0, 3.1 );
    var material0 = new THREE.MeshBasicMaterial({ color: 0xff0000, opacity: 0.9, transparent: true });
    cube = new THREE.Mesh( geometry, material0 );
    cubes.push(cube);
    //scene.add( cube );
  }

  // spider
  var geometry_body = new THREE.SphereGeometry( 30, 32, 32 );
  var material_body = new THREE.MeshBasicMaterial({ color: 0xff0000, opacity: 0.9, transparent: true });
  spider_body = new THREE.Mesh( geometry_body, material_body );
  scene.add( spider_body );
  var geometry_head = new THREE.SphereGeometry( 10, 32, 32 );
  var material_head = new THREE.MeshBasicMaterial({ color: 0xff0000, opacity: 0.9, transparent: true });
  spider_head = new THREE.Mesh( geometry_head, material_head );
  scene.add( spider_head );

  // Each setGeometry() call re-processes the line, so only the last width callback is used
  var lines = [];
  for (var i = 0; i < 4; i++) {
    var geometry_try = new THREE.Geometry();
    geometry_try.vertices.push(new THREE.Vector3(0, 0, 0));
    geometry_try.vertices.push(new THREE.Vector3(100, 100, 100));
    geometry_try.vertices.push(new THREE.Vector3(100, 0, 0));
    var line = new MeshLine();
    line.setGeometry( geometry_try );
    line.setGeometry( geometry_try, function( p ) { return 10; } ); // makes width 10 * lineWidth
    line.setGeometry( geometry_try, function( p ) { return 4 * (1 - p); } ); // makes width taper
    line.setGeometry( geometry_try, function( p ) { return 2 * parabola( p, 1 ); } ); // width peaks in the middle (parabolic)
    lines.push(line);
  }
  var material_try = new MeshLineMaterial({
    color: new THREE.Color(0xff0000),
    side: THREE.DoubleSide,
  });
  spider_left_leg = new THREE.Mesh( lines[0].geometry, material_try );
  spider_right_leg = new THREE.Mesh( lines[1].geometry, material_try );
  spider_left_arm = new THREE.Mesh( lines[2].geometry, material_try );
  spider_right_arm = new THREE.Mesh( lines[3].geometry, material_try );

  var geometry_body = new THREE.Geometry();
  geometry_body.vertices.push(new THREE.Vector3(0, 0, 0));
  geometry_body.vertices.push(new THREE.Vector3(100, 100, 100));
  geometry_body.vertices.push(new THREE.Vector3(100, 0, 0));
  geometry_body.vertices.push(new THREE.Vector3(0, 100, 0));
  var line_neck = new MeshLine();
  line_neck.setGeometry( geometry_body );
  line_neck.setGeometry( geometry_body, function( p ) { return 10; } ); // makes width 10 * lineWidth
  line_neck.setGeometry( geometry_body, function( p ) { return 4 * (1 - p); } ); // makes width taper
  line_neck.setGeometry( geometry_body, function( p ) { return 2 * parabola( p, 1 ); } ); // width peaks in the middle (parabolic)
  spider_neck = new THREE.Mesh( line_neck.geometry, material_try );
  scene.add( spider_left_leg );
  scene.add( spider_right_leg );
  scene.add( spider_left_arm );
  scene.add( spider_right_arm );
  scene.add( spider_neck );

  // human
  var lines = [];
  for (var i = 0; i < 5; i++) {
    var geometry_try = new THREE.Geometry();
    geometry_try.vertices.push(new THREE.Vector3(0, 0, 0));
    geometry_try.vertices.push(new THREE.Vector3(100, 100, 100));
    geometry_try.vertices.push(new THREE.Vector3(100, 0, 0));
    geometry_try.vertices.push(new THREE.Vector3(0, 100, 0));
    geometry_try.vertices.push(new THREE.Vector3(0, 100, 100));
    var line = new MeshLine();
    line.setGeometry( geometry_try );
    line.setGeometry( geometry_try, function( p ) { return 3; } ); // makes width 3 * lineWidth
    //line.setGeometry( geometry_try, function( p ) { return 4 * (1 - p); } ); // makes width taper
    line.setGeometry( geometry_try, function( p ) { return 1.2 * parabola( p, 1 ); } ); // width peaks in the middle (parabolic)
    lines.push(line);
  }
  human_left_leg = new THREE.Mesh( lines[0].geometry, material_try );
  human_right_leg = new THREE.Mesh( lines[1].geometry, material_try );
  human_left_arm = new THREE.Mesh( lines[2].geometry, material_try );
  human_right_arm = new THREE.Mesh( lines[3].geometry, material_try );
  human_torso = new THREE.Mesh( lines[4].geometry, material_try );
  scene.add( human_left_leg );
  scene.add( human_right_leg );
  scene.add( human_left_arm );
  scene.add( human_right_arm );
  scene.add( human_torso );
  var geometry_head = new THREE.SphereGeometry( 5, 32, 32 );
  human_head = new THREE.Mesh( geometry_head, material_head );
  scene.add( human_head );

  // renderer
  renderer = new THREE.WebGLRenderer( { antialias: true } );
  renderer.setClearColor( 0xeeeeee );
  renderer.setPixelRatio( window.devicePixelRatio );
  renderer.setSize( window.innerWidth, window.innerHeight );
  document.body.appendChild( renderer.domElement );
  window.addEventListener( 'resize', onWindowResize, false );
}

function onWindowResize() {
  camera.aspect = window.innerWidth / window.innerHeight;
  camera.updateProjectionMatrix();
  renderer.setSize( window.innerWidth, window.innerHeight );
}

function animate() {
  requestAnimationFrame( animate );
  var delta = clock.getDelta();
  if ( mixer ) mixer.update( delta );
  if ( skeletonHelper ) {
    for (var y = 0; y < skeletonHelper.bones.length; y++) {
      //console.log(skeletonHelper.bones[y].position);
      // Note: a Vector3 times 20 evaluates to NaN, and position is a read-only property
      // of THREE.Object3D, so this assignment appears to have no effect.
      skeletonHelper.bones[y].position = skeletonHelper.bones[y].position * 20;
    }
    var useful = [
      0,  // hip
      2,  // left leg root
      3,  // left knee
      4,  // left ankle
      6,  // left foot tip
      8,  // right leg root
      9,  // right knee
      10, // right ankle
      12, // right foot tip
      17, // chest
      18, // neck
      19, // chin
      20, // forehead
      21, // left shoulder
      22, // left elbow
      23, // left wrist
      26, // left hand
      30, // right shoulder
      31, // right elbow
      32, // right wrist
      35, // right hand
    ];
    for (var i = 0; i < useful.length; i++) {
      myPos = skeletonHelper.bones[useful[i]].getWorldPosition();
      cubes[i].position.y = myPos.y * 5;
      cubes[i].position.z = myPos.z * 5;
      cubes[i].position.x = myPos.x * 5;
    }
    // body / hip
    var pos0 = skeletonHelper.bones[0].getWorldPosition();
    var hip = new THREE.Vector3(0, pos0.y, pos0.z);
    // head
    var pos20 = skeletonHelper.bones[20].getWorldPosition();
    var head = new THREE.Vector3(pos20.x-pos0.x, pos20.y, pos20.z);
    // left leg
    var pos2 = skeletonHelper.bones[2].getWorldPosition();
    var pos3 = skeletonHelper.bones[3].getWorldPosition();
    var pos4 = skeletonHelper.bones[4].getWorldPosition();
    var pos6 = skeletonHelper.bones[6].getWorldPosition();
    var left_leg_root = new THREE.Vector3((pos2.x-pos0.x) * 5, pos2.y*2 + 30, pos2.z * 5 + 10);
    var newY = (pos3.y * 5-50 - 15) / (30-10) * (50 - 60) + 50;
    var left_leg_knee = new
THREE.Vector3((pos3.x-pos0.x) * 5 + 100, newY*2, pos3.z * 5+30); var left_leg_ankle = new THREE.Vector3((pos4.x-pos0.x) * 5 + 140, pos4.y*2, pos4.z * 5+30); // left arm var pos21 = skeletonHelper.bones[21].getWorldPosition(); var pos22 = skeletonHelper.bones[22].getWorldPosition(); var pos23 = skeletonHelper.bones[23].getWorldPosition(); var pos26 = skeletonHelper.bones[26].getWorldPosition(); var left_arm_root = new THREE.Vector3((pos21.x-pos0.x) * 5, pos21.y * 2+ 30, pos21.z * 5-10); var newZ = (pos22.z * 5+30 - pos21.z * 5-10) * 2 + pos21.z * 5-10; var left_arm_knee = new THREE.Vector3((pos22.x-pos0.x) * 5+100, pos22.y * 2 + 80, newZ-50); var left_arm_ankle = new THREE.Vector3((pos23.x-pos0.x) * 5+140, (pos23.y-12) * 10 - 25, pos23.z * 5-40); // right leg var pos8 = skeletonHelper.bones[8].getWorldPosition(); var pos9 = skeletonHelper.bones[9].getWorldPosition(); var pos10 = skeletonHelper.bones[10].getWorldPosition(); var pos12 = skeletonHelper.bones[12].getWorldPosition(); var right_leg_root = new THREE.Vector3((pos8.x - pos0.x) * 5, pos8.y*2 + 30, pos8.z * 5 + 10); var newY = (pos9.y * 5-50 - 15) / (30-10) * (50 - 60) + 50; var right_leg_knee = new THREE.Vector3((pos9.x - pos0.x) * 5-100, newY*2, pos9.z * 5+30); var right_leg_ankle = new THREE.Vector3((pos10.x - pos0.x) * 5-140, pos10.y*2, pos10.z * 5+30); // right arm var pos30 = skeletonHelper.bones[30].getWorldPosition(); var pos31 = skeletonHelper.bones[31].getWorldPosition(); var pos32 = skeletonHelper.bones[32].getWorldPosition(); var pos35 = skeletonHelper.bones[35].getWorldPosition(); var right_arm_root = new THREE.Vector3((pos30.x - pos0.x) * 5, pos30.y * 2 + 30, pos30.z * 5-10); //console.log(pos22.z * 5+30 - pos21.z * 5-10); var newZ = (pos31.z * 5+30 - pos30.z * 5-10) * 2 + pos30.z * 5-10; var right_arm_knee = new THREE.Vector3((pos31.x - pos0.x) * 5-100, pos31.y*2 + 80, newZ-40); var right_arm_ankle = new THREE.Vector3((pos32.x - pos0.x)* 5-140, (pos32.y-12) * 10 -25, pos32.z * 5-40); // chest & 
neck & chin var pos17 = skeletonHelper.bones[17].getWorldPosition(); var pos18 = skeletonHelper.bones[18].getWorldPosition(); var pos19 = skeletonHelper.bones[19].getWorldPosition(); var chest = new THREE.Vector3((pos17.x-pos0.x)*10, pos17.y * 8 + 20, pos17.z * 5-30); var neck = new THREE.Vector3((pos18.x-pos0.x)*10-5, pos18.y * 8 + 20, pos18.z * 5); var chin = new THREE.Vector3((pos19.x-pos0.x)*10+3, pos19.y * 7, pos19.z * 5 + 50); /* update human body parts */ // var human_torso; // var human_head; // var human_left_leg; // var human_right_leg; // var human_left_arm; // var human_right_arm; var human_scale = 5; for (var i = 0; i < 2; i++) { human_left_leg.geometry.attributes.position.array[i*3+0] = pos0.x * 5 + human_shift; human_left_leg.geometry.attributes.position.array[i*3+1] = pos0.y * 5; human_left_leg.geometry.attributes.position.array[i*3+2] = pos0.z * 5; human_left_arm.geometry.attributes.position.array[i*3+0] = pos17.x * 5 + human_shift; human_left_arm.geometry.attributes.position.array[i*3+1] = pos17.y * 5; human_left_arm.geometry.attributes.position.array[i*3+2] = pos17.z * 5; human_right_arm.geometry.attributes.position.array[i*3+0] = pos17.x * 5 + human_shift; human_right_arm.geometry.attributes.position.array[i*3+1] = pos17.y * 5; human_right_arm.geometry.attributes.position.array[i*3+2] = pos17.z * 5; human_right_leg.geometry.attributes.position.array[i*3+0] = pos0.x * 5 + human_shift; human_right_leg.geometry.attributes.position.array[i*3+1] = pos0.y * 5; human_right_leg.geometry.attributes.position.array[i*3+2] = pos0.z * 5; human_torso.geometry.attributes.position.array[i*3+0] = pos0.x * 5 + human_shift; human_torso.geometry.attributes.position.array[i*3+1] = pos0.y * 5; human_torso.geometry.attributes.position.array[i*3+2] = pos0.z * 5; } for (var i = 2; i < 4; i++) { human_left_leg.geometry.attributes.position.array[i*3+0] = pos2.x * 5 + human_shift; human_left_leg.geometry.attributes.position.array[i*3+1] = pos2.y * 5; 
human_left_leg.geometry.attributes.position.array[i*3+2] = pos2.z * 5; human_left_arm.geometry.attributes.position.array[i*3+0] = pos21.x * 5 + human_shift; human_left_arm.geometry.attributes.position.array[i*3+1] = pos21.y * 5; human_left_arm.geometry.attributes.position.array[i*3+2] = pos21.z * 5; human_right_arm.geometry.attributes.position.array[i*3+0] = pos30.x * 5 + human_shift; human_right_arm.geometry.attributes.position.array[i*3+1] = pos30.y * 5; human_right_arm.geometry.attributes.position.array[i*3+2] = pos30.z * 5; human_right_leg.geometry.attributes.position.array[i*3+0] = pos8.x * 5 + human_shift; human_right_leg.geometry.attributes.position.array[i*3+1] = pos8.y * 5; human_right_leg.geometry.attributes.position.array[i*3+2] = pos8.z * 5; human_torso.geometry.attributes.position.array[i*3+0] = pos17.x * 5 + human_shift; human_torso.geometry.attributes.position.array[i*3+1] = pos17.y * 5; human_torso.geometry.attributes.position.array[i*3+2] = pos17.z * 5; } for (var i = 4; i < 6; i++) { human_left_leg.geometry.attributes.position.array[i*3+0] = pos3.x * 5 + human_shift; human_left_leg.geometry.attributes.position.array[i*3+1] = pos3.y * 5; human_left_leg.geometry.attributes.position.array[i*3+2] = pos3.z * 5; human_left_arm.geometry.attributes.position.array[i*3+0] = pos22.x * 5 + human_shift; human_left_arm.geometry.attributes.position.array[i*3+1] = pos22.y * 5; human_left_arm.geometry.attributes.position.array[i*3+2] = pos22.z * 5; human_right_arm.geometry.attributes.position.array[i*3+0] = pos31.x * 5 + human_shift; human_right_arm.geometry.attributes.position.array[i*3+1] = pos31.y * 5; human_right_arm.geometry.attributes.position.array[i*3+2] = pos31.z * 5; human_right_leg.geometry.attributes.position.array[i*3+0] = pos9.x * 5 + human_shift; human_right_leg.geometry.attributes.position.array[i*3+1] = pos9.y * 5; human_right_leg.geometry.attributes.position.array[i*3+2] = pos9.z * 5; human_torso.geometry.attributes.position.array[i*3+0] = 
pos18.x * 5 + human_shift; human_torso.geometry.attributes.position.array[i*3+1] = pos18.y * 5; human_torso.geometry.attributes.position.array[i*3+2] = pos18.z * 5; } for (var i = 6; i < 8; i++) { human_left_leg.geometry.attributes.position.array[i*3+0] = pos4.x * 5 + human_shift; human_left_leg.geometry.attributes.position.array[i*3+1] = pos4.y * 5; human_left_leg.geometry.attributes.position.array[i*3+2] = pos4.z * 5; human_left_arm.geometry.attributes.position.array[i*3+0] = pos23.x * 5 + human_shift; human_left_arm.geometry.attributes.position.array[i*3+1] = pos23.y * 5; human_left_arm.geometry.attributes.position.array[i*3+2] = pos23.z * 5; human_right_arm.geometry.attributes.position.array[i*3+0] = pos32.x * 5 + human_shift; human_right_arm.geometry.attributes.position.array[i*3+1] = pos32.y * 5; human_right_arm.geometry.attributes.position.array[i*3+2] = pos32.z * 5; human_right_leg.geometry.attributes.position.array[i*3+0] = pos10.x * 5 + human_shift; human_right_leg.geometry.attributes.position.array[i*3+1] = pos10.y * 5; human_right_leg.geometry.attributes.position.array[i*3+2] = pos10.z * 5; human_torso.geometry.attributes.position.array[i*3+0] = pos19.x * 5 + human_shift; human_torso.geometry.attributes.position.array[i*3+1] = pos19.y * 5; human_torso.geometry.attributes.position.array[i*3+2] = pos19.z * 5; } for (var i = 8; i < 10; i++) { human_left_leg.geometry.attributes.position.array[i*3+0] = pos6.x * 5 + human_shift; human_left_leg.geometry.attributes.position.array[i*3+1] = pos6.y * 5; human_left_leg.geometry.attributes.position.array[i*3+2] = pos6.z * 5; human_left_arm.geometry.attributes.position.array[i*3+0] = pos26.x * 5 + human_shift; human_left_arm.geometry.attributes.position.array[i*3+1] = pos26.y * 5; human_left_arm.geometry.attributes.position.array[i*3+2] = pos26.z * 5; human_right_arm.geometry.attributes.position.array[i*3+0] = pos35.x * 5 + human_shift; human_right_arm.geometry.attributes.position.array[i*3+1] = pos35.y * 5; 
human_right_arm.geometry.attributes.position.array[i*3+2] = pos35.z * 5; human_right_leg.geometry.attributes.position.array[i*3+0] = pos12.x * 5 + human_shift; human_right_leg.geometry.attributes.position.array[i*3+1] = pos12.y * 5; human_right_leg.geometry.attributes.position.array[i*3+2] = pos12.z * 5; human_torso.geometry.attributes.position.array[i*3+0] = pos20.x * 5 + human_shift; human_torso.geometry.attributes.position.array[i*3+1] = pos20.y * 5; human_torso.geometry.attributes.position.array[i*3+2] = pos20.z * 5; } human_left_leg.geometry.attributes.position.needsUpdate = true; human_right_leg.geometry.attributes.position.needsUpdate = true; human_left_arm.geometry.attributes.position.needsUpdate = true; human_right_arm.geometry.attributes.position.needsUpdate = true; human_torso.geometry.attributes.position.needsUpdate = true; human_head.position.x = pos19.x*human_scale + human_shift; human_head.position.y = pos19.y*human_scale; human_head.position.z = pos19.z*human_scale; /* update to spider body parts */ //console.log(meshline.geometry); for (var i = 0; i < 2; i++) { spider_left_leg.geometry.attributes.position.array[i*3+0] = left_leg_root.x + spider_shift; spider_left_leg.geometry.attributes.position.array[i*3+1] = left_leg_root.y; spider_left_leg.geometry.attributes.position.array[i*3+2] = left_leg_root.z; spider_left_arm.geometry.attributes.position.array[i*3+0] = left_arm_root.x+ spider_shift; spider_left_arm.geometry.attributes.position.array[i*3+1] = left_arm_root.y; spider_left_arm.geometry.attributes.position.array[i*3+2] = left_arm_root.z; spider_right_leg.geometry.attributes.position.array[i*3+0] = right_leg_ankle.x + spider_shift; spider_right_leg.geometry.attributes.position.array[i*3+1] = right_leg_ankle.y; spider_right_leg.geometry.attributes.position.array[i*3+2] = right_leg_ankle.z; spider_right_arm.geometry.attributes.position.array[i*3+0] = right_arm_ankle.x + spider_shift; spider_right_arm.geometry.attributes.position.array[i*3+1] = 
right_arm_ankle.y; spider_right_arm.geometry.attributes.position.array[i*3+2] = right_arm_ankle.z; spider_neck.geometry.attributes.position.array[i*3+0] = chin.x + spider_shift; spider_neck.geometry.attributes.position.array[i*3+1] = chin.y; spider_neck.geometry.attributes.position.array[i*3+2] = chin.z; }; for (var i = 2; i < 4; i++) { spider_left_leg.geometry.attributes.position.array[i*3+0] = left_leg_knee.x + spider_shift; spider_left_leg.geometry.attributes.position.array[i*3+1] = left_leg_knee.y; spider_left_leg.geometry.attributes.position.array[i*3+2] = left_leg_knee.z; spider_right_leg.geometry.attributes.position.array[i*3+0] = right_leg_knee.x + spider_shift; spider_right_leg.geometry.attributes.position.array[i*3+1] = right_leg_knee.y; spider_right_leg.geometry.attributes.position.array[i*3+2] = right_leg_knee.z; spider_left_arm.geometry.attributes.position.array[i*3+0] = left_arm_knee.x + spider_shift; spider_left_arm.geometry.attributes.position.array[i*3+1] = left_arm_knee.y; spider_left_arm.geometry.attributes.position.array[i*3+2] = left_arm_knee.z; spider_right_arm.geometry.attributes.position.array[i*3+0] = right_arm_knee.x + spider_shift; spider_right_arm.geometry.attributes.position.array[i*3+1] = right_arm_knee.y; spider_right_arm.geometry.attributes.position.array[i*3+2] = right_arm_knee.z; spider_neck.geometry.attributes.position.array[i*3+0] = neck.x + spider_shift; spider_neck.geometry.attributes.position.array[i*3+1] = neck.y; spider_neck.geometry.attributes.position.array[i*3+2] = neck.z; }; for (var i = 4; i < 6; i++) { spider_left_leg.geometry.attributes.position.array[i*3+0] = left_leg_ankle.x + spider_shift; spider_left_leg.geometry.attributes.position.array[i*3+1] = left_leg_ankle.y; spider_left_leg.geometry.attributes.position.array[i*3+2] = left_leg_ankle.z; spider_left_arm.geometry.attributes.position.array[i*3+0] = left_arm_ankle.x + spider_shift; spider_left_arm.geometry.attributes.position.array[i*3+1] = left_arm_ankle.y; 
spider_left_arm.geometry.attributes.position.array[i*3+2] = left_arm_ankle.z; spider_right_leg.geometry.attributes.position.array[i*3+0] = right_leg_root.x + spider_shift; spider_right_leg.geometry.attributes.position.array[i*3+1] = right_leg_root.y; spider_right_leg.geometry.attributes.position.array[i*3+2] = right_leg_root.z; spider_right_arm.geometry.attributes.position.array[i*3+0] = right_arm_root.x + spider_shift; spider_right_arm.geometry.attributes.position.array[i*3+1] = right_arm_root.y; spider_right_arm.geometry.attributes.position.array[i*3+2] = right_arm_root.z; spider_neck.geometry.attributes.position.array[i*3+0] = chest.x + spider_shift; spider_neck.geometry.attributes.position.array[i*3+1] = chest.y; spider_neck.geometry.attributes.position.array[i*3+2] = chest.z; }; for (var i = 6; i < 8; i++) { spider_neck.geometry.attributes.position.array[i*3 + 0] = hip.x*5 + spider_shift; spider_neck.geometry.attributes.position.array[i*3 + 1] = hip.y*5; spider_neck.geometry.attributes.position.array[i*3 + 2] = hip.z*5; }; spider_left_leg.geometry.attributes.position.needsUpdate = true; spider_right_leg.geometry.attributes.position.needsUpdate = true; spider_left_arm.geometry.attributes.position.needsUpdate = true; spider_right_arm.geometry.attributes.position.needsUpdate = true; spider_neck.geometry.attributes.position.needsUpdate = true; spider_body.position.x = hip.x*5 + spider_shift; spider_body.position.y = hip.y*5; spider_body.position.z = hip.z*5; spider_head.position.x = head.x*20 + spider_shift ; spider_head.position.y = head.y*8.2; spider_head.position.z = head.z*5 + 50; skeletonHelper.update(); } renderer.render( scene, camera ); } |

zbeok-LookingOutwards06

While going through the Media Art Tube channel, I came across this face and it caught my eye. At first I thought it was really weird: it looks like something an engineer or a biologist would make for the most informal research, but once I realized what was going on, it became something with a lot of potential. Yes, it does remind me of research in the way it isolates muscles in a very logical, robotic way, but the end result is something really fun and weird. In addition, the fact that this is done by machine makes it fascinatingly portable: a preprogrammed choreography that could be loaded onto anyone at all. All in all, it has a strangely human, weird touch for something so machine-like.

Ackso-Mocap

For this project, I wanted to create one abstract form that is controlled by several dancing bodies. I used several bodies rather than one so that their movements would combine into a more complex and abstract form of motion. First, I recorded motion data of myself dancing to Phoenix’s “If I Ever Feel Better” three times, keeping relatively still until the chorus. I did this so that the motion of the combined form would start out calm and then become more sporadic; to add to this, I intentionally moved too fast for the Kinect to follow me during the climax of the song. I then drew simple bodies out of triangles; however, none of these bodies corresponds to a single motion capture recording. Instead, each abstracted form is controlled by one half of each of two different skeletons, so to truly understand how I was dancing, one would have to look at the combination of several separate shapes. The aim was to make it seem like the whole shape, rather than the three bodies individually, was dancing.
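The pairing described above can be written as a small rule. Reading the Processing code that follows, the three four-vertex shapes appear to join dancer 1's right side with dancer 2's left side, dancer 2's right with dancer 3's left, and dancer 3's right with dancer 1's left, while the head and hip shapes take one vertex from each dancer (the original traces the first of those shapes in the opposite direction, which gives the same outline). The sketch below generates those shapes from a list of dancers; the object layout and the names `combinedQuads` and `jointTriangle` are hypothetical, and the strings stand in for the PVector positions the real sketch uses.

```javascript
// Hypothetical layout: one object of joint positions per dancer. Strings stand
// in for the PVector positions so the pairing is easy to read.
const dancers = [
  { leftFoot: "lf1", rightFoot: "rf1", leftHand: "lh1", rightHand: "rh1", head: "h1", hip: "p1" },
  { leftFoot: "lf2", rightFoot: "rf2", leftHand: "lh2", rightHand: "rh2", head: "h2", hip: "p2" },
  { leftFoot: "lf3", rightFoot: "rf3", leftHand: "lh3", rightHand: "rh3", head: "h3", hip: "p3" },
];

// Each quad joins one dancer's right foot and hand with the next dancer's
// left foot and hand, wrapping around from the last dancer to the first.
function combinedQuads(dancers) {
  const quads = [];
  for (let k = 0; k < dancers.length; k++) {
    const a = dancers[k];
    const b = dancers[(k + 1) % dancers.length];
    quads.push([a.rightFoot, b.leftFoot, a.rightHand, b.leftHand]);
  }
  return quads;
}

// One vertex per dancer for a shared joint (the head and hip shapes).
function jointTriangle(dancers, joint) {
  return dancers.map((dancer) => dancer[joint]);
}

combinedQuads(dancers).forEach((q) => console.log(q.join(" ")));
// rf1 lf2 rh1 lh2
// rf2 lf3 rh2 lh3
// rf3 lf1 rh3 lh1
console.log(jointTriangle(dancers, "head").join(" ")); // h1 h2 h3
```

Writing it as a rule rather than hard-coding each vertex would make it easy to try a fourth recording: the same loop would then produce four interlocking shapes.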

PBvh myBrekelBvh;
PBvh myBrekelBvh2;
PBvh body3;
float rotation = 0;

PVector hip1 = new PVector();
PVector hip2 = new PVector();
PVector hip3 = new PVector();
PVector head1 = new PVector();
PVector head2 = new PVector();
PVector head3 = new PVector();
PVector leftKnee1 = new PVector();
PVector leftKnee2 = new PVector();
PVector leftKnee3 = new PVector();
PVector leftHand1 = new PVector();
PVector leftHand2 = new PVector();
PVector leftHand3 = new PVector();
PVector rightHand1 = new PVector();
PVector rightHand2 = new PVector();
PVector rightHand3 = new PVector();
PVector rightFoot1 = new PVector();
PVector rightFoot2 = new PVector();
PVector rightFoot3 = new PVector();
PVector leftFoot1 = new PVector();
PVector leftFoot2 = new PVector();
PVector leftFoot3 = new PVector();

//------------------------------------------------
void setup() {
  size( 1280, 720, P3D );

  // Load a BVH file recorded with a Kinect v2, made in Brekel Pro Body v2.
  myBrekelBvh = new PBvh( loadStrings( "dance1.bvh" ) );
  myBrekelBvh2 = new PBvh( loadStrings( "dance2.bvh" ) );
  body3 = new PBvh( loadStrings( "dance3.bvh" ) );
}

//------------------------------------------------
void draw() {
  lights();
  background(0);
  rotation += .1;
  setMyCamera();        // Position the camera. See code below.
  updateAndDrawBody();  // Update and render the BVH file. See code below.
  noStroke();

  fill(0, 0, 255);
  beginShape();
  vertex(head1.x, head1.y, head1.z);
  vertex(head2.x, head2.y, head2.z);
  vertex(head3.x, head3.y, head3.z);
  endShape();

  fill(229, 42, 0);
  beginShape();
  vertex(leftFoot1.x, leftFoot1.y, leftFoot1.z);
  vertex(rightFoot3.x, rightFoot3.y, rightFoot3.z);
  vertex(leftHand1.x, leftHand1.y, leftHand1.z);
  vertex(rightHand3.x, rightHand3.y, rightHand3.z);
  endShape();

  fill(255, 0, 0);
  beginShape();
  vertex(rightFoot1.x, rightFoot1.y, rightFoot1.z);
  vertex(leftFoot2.x, leftFoot2.y, leftFoot2.z);
  vertex(rightHand1.x, rightHand1.y, rightHand1.z);
  vertex(leftHand2.x, leftHand2.y, leftHand2.z);
  endShape();

  fill(229, 34, 105);
  beginShape();
  vertex(rightFoot2.x, rightFoot2.y, rightFoot2.z);
  vertex(leftFoot3.x, leftFoot3.y, leftFoot3.z);
  vertex(rightHand2.x, rightHand2.y, rightHand2.z);
  vertex(leftHand3.x, leftHand3.y, leftHand3.z);
  endShape();

  fill(0, 242, 255);
  beginShape();
  vertex(hip1.x, hip1.y, hip1.z);
  vertex(hip2.x, hip2.y, hip2.z);
  vertex(hip3.x, hip3.y, hip3.z);
  endShape();
}

//------------------------------------------------
void updateAndDrawBody() {
  pushMatrix();
  translate(width/2, height/2+100, 200);
  scale(-1, -1, 1);
  myBrekelBvh.update(millis());
  BvhBone bone=myBrekelBvh.parser.getBones().get(0);
  hip1.x=modelX(bone.absPos.x,bone.absPos.y,bone.absPos.z);
  hip1.y=modelY(bone.absPos.x,bone.absPos.y,bone.absPos.z);
  hip1.z=modelZ(bone.absPos.x,bone.absPos.y,bone.absPos.z);
  BvhBone headBone1=myBrekelBvh.parser.getBones().get(48);
  head1.x=modelX(headBone1.absPos.x,headBone1.absPos.y,headBone1.absPos.z);
  head1.y=modelY(headBone1.absPos.x,headBone1.absPos.y,headBone1.absPos.z);
  head1.z=modelZ(headBone1.absPos.x,headBone1.absPos.y,headBone1.absPos.z);
  BvhBone leftKneeBone1=myBrekelBvh.parser.getBones().get(2);
  leftKnee1.x=modelX(leftKneeBone1.absPos.x,leftKneeBone1.absPos.y,leftKneeBone1.absPos.z);
  leftKnee1.y=modelY(leftKneeBone1.absPos.x,leftKneeBone1.absPos.y,leftKneeBone1.absPos.z);
  leftKnee1.z=modelZ(leftKneeBone1.absPos.x,leftKneeBone1.absPos.y,leftKneeBone1.absPos.z);
  BvhBone leftHandBone1=myBrekelBvh.parser.getBones().get(12);
  leftHand1.x=modelX(leftHandBone1.absPos.x,leftHandBone1.absPos.y,leftHandBone1.absPos.z);
  leftHand1.y=modelY(leftHandBone1.absPos.x,leftHandBone1.absPos.y,leftHandBone1.absPos.z);
  leftHand1.z=modelZ(leftHandBone1.absPos.x,leftHandBone1.absPos.y,leftHandBone1.absPos.z);
  BvhBone rightHandBone1= myBrekelBvh.parser.getBones().get(31);
  rightHand1.x=modelX(rightHandBone1.absPos.x,rightHandBone1.absPos.y,rightHandBone1.absPos.z);
  rightHand1.y=modelY(rightHandBone1.absPos.x,rightHandBone1.absPos.y,rightHandBone1.absPos.z);
  rightHand1.z=modelZ(rightHandBone1.absPos.x,rightHandBone1.absPos.y,rightHandBone1.absPos.z);
  BvhBone leftFootBone1= myBrekelBvh.parser.getBones().get(3);
  leftFoot1.x=modelX(leftFootBone1.absPos.x,leftFootBone1.absPos.y,leftFootBone1.absPos.z);
  leftFoot1.y=modelY(leftFootBone1.absPos.x,leftFootBone1.absPos.y,leftFootBone1.absPos.z);
  leftFoot1.z=modelZ(leftFootBone1.absPos.x,leftFootBone1.absPos.y,leftFootBone1.absPos.z);
  BvhBone rightFootBone1= myBrekelBvh.parser.getBones().get(6);
  rightFoot1.x=modelX(rightFootBone1.absPos.x,rightFootBone1.absPos.y,rightFootBone1.absPos.z);
  rightFoot1.y=modelY(rightFootBone1.absPos.x,rightFootBone1.absPos.y,rightFootBone1.absPos.z);
  rightFoot1.z=modelZ(rightFootBone1.absPos.x,rightFootBone1.absPos.y,rightFootBone1.absPos.z);
  popMatrix();

  pushMatrix();
  translate(width/2+370, height/2+100, 0);
  scale(-1, -1, 1);
  rotateY(-3*PI/8);
  myBrekelBvh2.update(millis());
  BvhBone bone2=myBrekelBvh2.parser.getBones().get(0);
  hip2.x=modelX(bone2.absPos.x,bone2.absPos.y,bone2.absPos.z);
  hip2.y=modelY(bone2.absPos.x,bone2.absPos.y,bone2.absPos.z);
  hip2.z=modelZ(bone2.absPos.x,bone2.absPos.y,bone2.absPos.z);
  BvhBone headBone2=myBrekelBvh2.parser.getBones().get(48);
  head2.x=modelX(headBone2.absPos.x,headBone2.absPos.y,headBone2.absPos.z);
  head2.y=modelY(headBone2.absPos.x,headBone2.absPos.y,headBone2.absPos.z);
  head2.z=modelZ(headBone2.absPos.x,headBone2.absPos.y,headBone2.absPos.z);
  BvhBone leftKneeBone2=myBrekelBvh2.parser.getBones().get(2);
  leftKnee2.x=modelX(leftKneeBone2.absPos.x,leftKneeBone2.absPos.y,leftKneeBone2.absPos.z);
  leftKnee2.y=modelY(leftKneeBone2.absPos.x,leftKneeBone2.absPos.y,leftKneeBone2.absPos.z);
  leftKnee2.z=modelZ(leftKneeBone2.absPos.x,leftKneeBone2.absPos.y,leftKneeBone2.absPos.z);
  BvhBone leftHandBone2=myBrekelBvh2.parser.getBones().get(12);
  leftHand2.x=modelX(leftHandBone2.absPos.x,leftHandBone2.absPos.y,leftHandBone2.absPos.z);
  leftHand2.y=modelY(leftHandBone2.absPos.x,leftHandBone2.absPos.y,leftHandBone2.absPos.z);
  leftHand2.z=modelZ(leftHandBone2.absPos.x,leftHandBone2.absPos.y,leftHandBone2.absPos.z);
  BvhBone rightHandBone2= myBrekelBvh2.parser.getBones().get(31);
  rightHand2.x=modelX(rightHandBone2.absPos.x,rightHandBone2.absPos.y,rightHandBone2.absPos.z);
  rightHand2.y=modelY(rightHandBone2.absPos.x,rightHandBone2.absPos.y,rightHandBone2.absPos.z);
  rightHand2.z=modelZ(rightHandBone2.absPos.x,rightHandBone2.absPos.y,rightHandBone2.absPos.z);
  BvhBone leftFootBone2= myBrekelBvh2.parser.getBones().get(3);
  leftFoot2.x=modelX(leftFootBone2.absPos.x,leftFootBone2.absPos.y,leftFootBone2.absPos.z);
  leftFoot2.y=modelY(leftFootBone2.absPos.x,leftFootBone2.absPos.y,leftFootBone2.absPos.z);
  leftFoot2.z=modelZ(leftFootBone2.absPos.x,leftFootBone2.absPos.y,leftFootBone2.absPos.z);
  BvhBone rightFootBone2= myBrekelBvh2.parser.getBones().get(6);
  rightFoot2.x=modelX(rightFootBone2.absPos.x,rightFootBone2.absPos.y,rightFootBone2.absPos.z);
  rightFoot2.y=modelY(rightFootBone2.absPos.x,rightFootBone2.absPos.y,rightFootBone2.absPos.z);
  rightFoot2.z=modelZ(rightFootBone2.absPos.x,rightFootBone2.absPos.y,rightFootBone2.absPos.z);
  popMatrix();

  pushMatrix();
  translate(width/2-200, height/2+100, -300);
  scale(-1, -1, 1);
  rotateY(2*PI/3);
  body3.update(millis());
  BvhBone bone3=body3.parser.getBones().get(0);
  hip3.x=modelX(bone3.absPos.x,bone3.absPos.y,bone3.absPos.z);
  hip3.y=modelY(bone3.absPos.x,bone3.absPos.y,bone3.absPos.z);
  hip3.z=modelZ(bone3.absPos.x,bone3.absPos.y,bone3.absPos.z);
  BvhBone headBone3=body3.parser.getBones().get(48);
  head3.x=modelX(headBone3.absPos.x,headBone3.absPos.y,headBone3.absPos.z);
  head3.y=modelY(headBone3.absPos.x,headBone3.absPos.y,headBone3.absPos.z);
  head3.z=modelZ(headBone3.absPos.x,headBone3.absPos.y,headBone3.absPos.z);
  BvhBone leftKneeBone3=body3.parser.getBones().get(2);
  leftKnee3.x=modelX(leftKneeBone3.absPos.x,leftKneeBone3.absPos.y,leftKneeBone3.absPos.z);
  leftKnee3.y=modelY(leftKneeBone3.absPos.x,leftKneeBone3.absPos.y,leftKneeBone3.absPos.z);
  leftKnee3.z=modelZ(leftKneeBone3.absPos.x,leftKneeBone3.absPos.y,leftKneeBone3.absPos.z);
  BvhBone leftHandBone3=body3.parser.getBones().get(12);
  leftHand3.x=modelX(leftHandBone3.absPos.x,leftHandBone3.absPos.y,leftHandBone3.absPos.z);
  leftHand3.y=modelY(leftHandBone3.absPos.x,leftHandBone3.absPos.y,leftHandBone3.absPos.z);
  leftHand3.z=modelZ(leftHandBone3.absPos.x,leftHandBone3.absPos.y,leftHandBone3.absPos.z);
  BvhBone rightHandBone3= body3.parser.getBones().get(31);
  rightHand3.x=modelX(rightHandBone3.absPos.x,rightHandBone3.absPos.y,rightHandBone3.absPos.z);
  rightHand3.y=modelY(rightHandBone3.absPos.x,rightHandBone3.absPos.y,rightHandBone3.absPos.z);
  rightHand3.z=modelZ(rightHandBone3.absPos.x,rightHandBone3.absPos.y,rightHandBone3.absPos.z);
  BvhBone leftFootBone3= body3.parser.getBones().get(3);
  leftFoot3.x=modelX(leftFootBone3.absPos.x,leftFootBone3.absPos.y,leftFootBone3.absPos.z);
  leftFoot3.y=modelY(leftFootBone3.absPos.x,leftFootBone3.absPos.y,leftFootBone3.absPos.z);
  leftFoot3.z=modelZ(leftFootBone3.absPos.x,leftFootBone3.absPos.y,leftFootBone3.absPos.z);
  BvhBone rightFootBone3= body3.parser.getBones().get(6);
  rightFoot3.x=modelX(rightFootBone3.absPos.x,rightFootBone3.absPos.y,rightFootBone3.absPos.z);
  rightFoot3.y=modelY(rightFootBone3.absPos.x,rightFootBone3.absPos.y,rightFootBone3.absPos.z);
  rightFoot3.z=modelZ(rightFootBone3.absPos.x,rightFootBone3.absPos.y,rightFootBone3.absPos.z);
  popMatrix();
}

//------------------------------------------------
void setMyCamera() {
  // Adjust the position of the camera
  //float eyeX = mouseX;          // x-coordinate for the eye
  float eyeY = mouseY;            // y-coordinate for the eye
  //float eyeZ = 350;             // z-coordinate for the eye
  float centerX = width/2+100;    // x-coordinate for the center of the scene
  float centerY = height/2.0f-50; // y-coordinate for the center of the scene
  float centerZ = -100;           // z-coordinate for the center of the scene
  float upX = 0;                  // usually 0.0, 1.0, or -1.0
  float upY = 1;                  // usually 0.0, 1.0, or -1.0
  float upZ = 0;                  // usually 0.0, 1.0, or -1.0
  float orbitRadius = 275;
  float eyeX = cos(radians(rotation))*orbitRadius+700;
  float eyeZ = sin(radians(rotation))*orbitRadius-175;
  camera(eyeX, eyeY, eyeZ, centerX, centerY, centerZ, upX, upY, upZ);
}

//------------------------------------------------
void drawMyGround() {
  // Draw a grid in the center of the ground
  pushMatrix();
  translate(width/2, height/2, 0); // position the body in space
  scale(-1, -1, 1);

  stroke(100);
  strokeWeight(1);
  float gridSize = 400;
  int nGridDivisions = 10;

  for (int col=0; col<=nGridDivisions; col++) {
    float x = map(col, 0, nGridDivisions, -gridSize, gridSize);
    line (x, 0, -gridSize, x, 0, gridSize);
  }
  for (int row=0; row<=nGridDivisions; row++) {
    float z = map(row, 0, nGridDivisions, -gridSize, gridSize);
    line (-gridSize, 0, z, gridSize, 0, z);
  }
  popMatrix();
}
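`setMyCamera()` moves the eye around a circle in the XZ plane: with θ = radians(rotation), eyeX = 700 + 275·cos θ and eyeZ = −175 + 275·sin θ, while eyeY follows the mouse. Since `draw()` adds 0.1 to `rotation` every frame, one full orbit takes 360 / 0.1 = 3600 frames, or roughly a minute at Processing's default 60 fps if the sketch keeps up. Here is the same arithmetic as a small JavaScript sketch; `orbitEye` and `framesPerOrbit` are hypothetical names used only for illustration.

```javascript
// Same arithmetic as setMyCamera(): the eye circles (cx, cz) in the XZ plane.
function orbitEye(angleDeg, radius, cx, cz) {
  const t = (angleDeg * Math.PI) / 180; // equivalent of Processing's radians()
  return { x: Math.cos(t) * radius + cx, z: Math.sin(t) * radius + cz };
}

// Frames needed for one full orbit when the angle grows by a fixed step per frame.
function framesPerOrbit(degreesPerFrame) {
  return 360 / degreesPerFrame;
}

console.log(orbitEye(0, 275, 700, -175)); // { x: 975, z: -175 }
const frames = framesPerOrbit(0.1);       // about 3600 frames
console.log(frames / 60, "seconds at 60 fps"); // about 60
```

Raising the per-frame step (for example to 0.5) would shorten the orbit to 720 frames, about 12 seconds at 60 fps.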

fatik – LookingOutwards06

Memo Akten is “an Artist working with computation as a medium, exploring collisions between nature, science, technology, ethics, ritual, tradition and religion. Doing PhD at Goldsmiths UoL in artificial intelligence / machine learning and expressive human-machine interaction, exploring collaborative co-creativity between humans and machines.”

It’s amazing to read about the intentions behind specific projects that artists make using computational tools. For example, Memo’s Waves is a piece made in response to the tragic Paris killings and Syria bombings; it explores the back-and-forth cycle of violence. The visuals are simulated waves crashing into each other. He talks about the tension and balance in ocean waves: calming yet terrifying, graceful yet violent.

It’s wild to see that he also has work relating to light, sound, interactivity, and commercial projects. I love seeing artists who are not constrained to one type of work but instead explore different media and purposes.

fatik – LookingOutwards04

Quayola identifies himself as a visual artist, but the variety of his work says much more than simply ‘visual artist’. I love his concept of using old Renaissance paintings as inspiration and executing them with both high-tech and low-tech methods. I personally love his ongoing series and research project, Iconographies.

Iconographies focuses on the “analysis of renaissance and baroque paintings via computational methods. Religious and mythological scenes are transformed into complex digital formations. By removing iconographic narratives, the paintings lose their original context to become new objects of contemplation.”

I love the contrast of old, analog thinking with high-tech making. I think a lot of his work invites further contemplation and alternative ways of perceiving, which is really interesting. I enjoy how his work ranges across print, sculpture, installation, and many other media to express his concept.

Link to his website: https://www.quayola.com/

fatik – LookingOutwards03

noiseGrid, 2018, made with code (Processing), vector 4 print: “shifting pixel sorting data out of landscape photos into a 2D noise matrix”
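Pixel sorting, the technique named in the caption, generally means reordering the pixels within a row or column by some key such as brightness. As a generic illustration only (not Lippmann's actual process, which this post doesn't describe), here is a minimal sketch on rows of `[r, g, b]` triples; the plain-average brightness key and the `threshold` variant are assumptions chosen for simplicity.

```javascript
// Generic pixel-sorting illustration (not the artist's own code).
// A pixel is an [r, g, b] triple; brightness is the plain channel average.
function brightness([r, g, b]) {
  return (r + g + b) / 3;
}

// Sort a whole row from dark to bright without mutating the input.
function sortRow(row) {
  return [...row].sort((a, b) => brightness(a) - brightness(b));
}

// Common variant: sort only the runs of pixels brighter than `threshold`,
// leaving darker pixels where they are.
function sortRowAboveThreshold(row, threshold) {
  const out = [...row];
  let start = -1; // start index of the current bright run, or -1 if none
  for (let i = 0; i <= out.length; i++) {
    const bright = i < out.length && brightness(out[i]) > threshold;
    if (bright && start < 0) start = i;
    if (!bright && start >= 0) {
      const run = out.slice(start, i).sort((a, b) => brightness(a) - brightness(b));
      out.splice(start, run.length, ...run);
      start = -1;
    }
  }
  return out;
}

// Applying sortRow to every row of an image (a 2D array of pixels):
const image = [
  [[200, 200, 200], [10, 10, 10], [100, 100, 100]],
  [[0, 0, 0], [255, 255, 255], [50, 50, 50]],
];
const sorted = image.map((row) => sortRow(row));
console.log(sorted[0].map(brightness)); // [10, 100, 200]
```

The threshold variant is what usually gives pixel-sorted photos their streaky look, since only the bright regions get smeared into gradients.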

Some quotes that describe his practice:

“My internal process is the same as it was when I was working with paint and canvas,” Lippmann explains. “That’s why I call my current work digital or computer-aided painting.”

“deep inside i’m a painter and i always was. so i think my work should be best described in the traditional context of painting. the focus lies on the development of an image and colour composition.”

Link to Website: http://www.lumicon.de/wp/