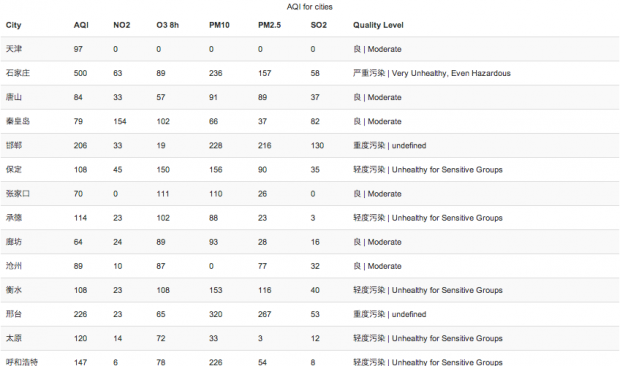

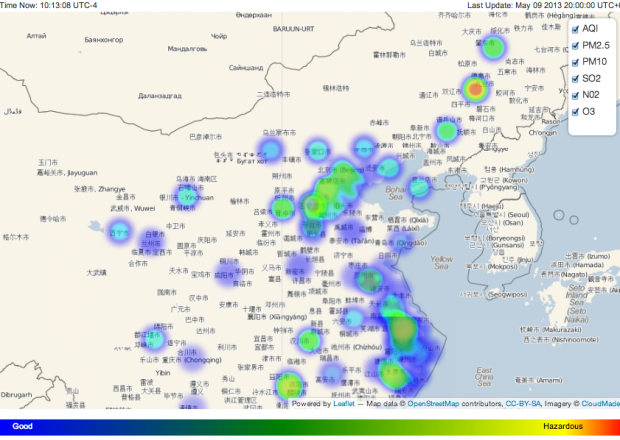

Title: AQI China / Author: Han Hua

Abstract: Real-time visualization of air quality in China, showing you the air pollution in major cities in China.

http://tranquil-basin-8669.herokuapp.com/

Category Archives: final-project

Michael

14 Apr 2013

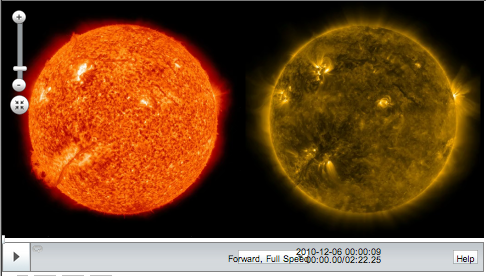

I’ve switched focus a bit since the group discussion on project ideas. I’m still focusing on the SDO imagery and displaying multiple layers of the sun time lapse, but I’ve decided that a more interesting approach to the project is to explore how people process different images of the sun when viewed through different eyes. The first technical challenge is to create a way to view multiple Time Machine time lapses side by side. I’ve managed to learn the Time Machine API and I’ve reworked a few things as follows:

A) Two time lapses display side-by-side at a proper size to be viewed through a stereoscope

B) Single control bar stretched beneath two time lapse windows

C) Videos synchronize on play and pause. (Synchronization on time change or position change still results in jittery performance)

The synchronization needs a bit of work still, and then comes the time to work on the interface a bit more to support changing the layers in an intelligent and intuitive way. I need to figure that out a bit and make some sketches.
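One possible way to approach the jitter, sketched here in Python rather than against the Time Machine API: mirror play and pause state on every update, but only re-seek the second time lapse when it has drifted past a tolerance. The `Player` class, `sync_follower`, and the 0.1 second tolerance are all hypothetical names and values chosen for illustration, and whether constant re-seeking is the actual cause of the jitter is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Player:
    """Hypothetical stand-in for one time lapse viewer (not the Time Machine API)."""
    time: float = 0.0
    playing: bool = False

    def seek(self, t: float) -> None:
        # A real viewer would jump its video to time t here.
        self.time = t


def sync_follower(leader: Player, follower: Player, tolerance: float = 0.1) -> bool:
    """Copy play/pause state, and seek the follower only if drift exceeds tolerance.

    Returns True when a seek was issued, False otherwise.
    """
    follower.playing = leader.playing
    drift = abs(leader.time - follower.time)
    if drift > tolerance:
        follower.seek(leader.time)
        return True
    return False
```

Calling `sync_follower(left, right)` from a periodic timer would leave small drifts alone and correct only the large ones, which may be smoother than seeking on every time change.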

John

08 Apr 2013

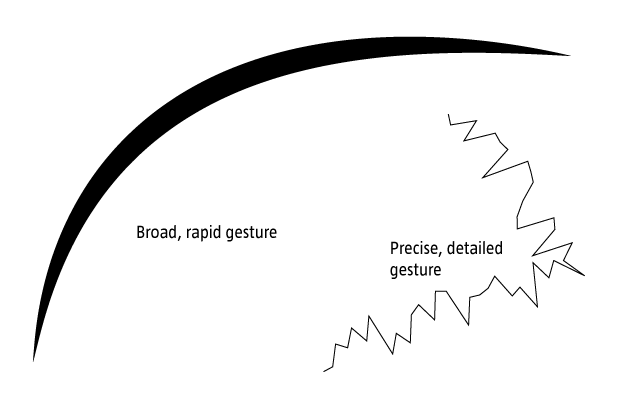

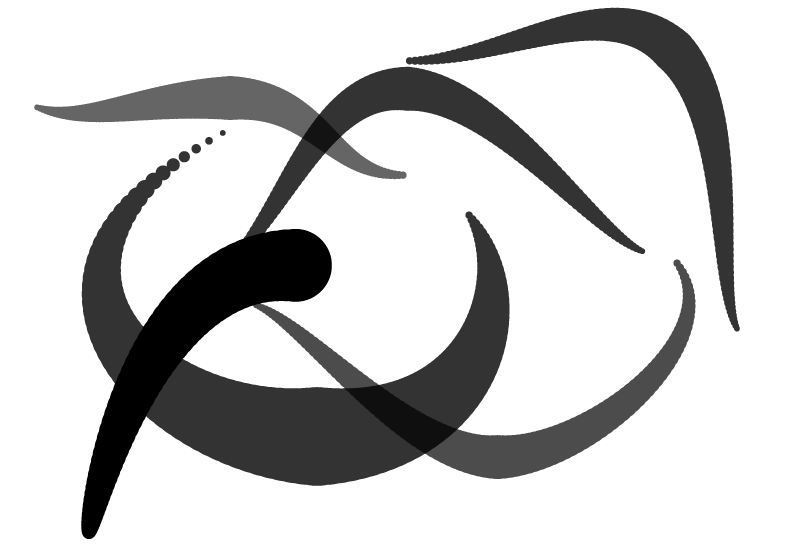

For my capstone project, I’m continuing to build on the Kinect-based drawing system I built for p3. My previous project was, for all intents and purposes, a technical demo which helped me to better understand several technologies including the Kinect’s depth camera, OSC, and OpenFrameworks. While I definitely got a lot out of the project WRT the general structures of these systems, my final piece lacked an artistic angle. Further, as Golan pointed out in class, I didn’t make particularly robust use of gestural controls in determining the context of my drawing environment. In the intervening week, I’ve been trying to better understand the relation between the 3D meshes I’ve been able to pull off the Kinect using Synapse and the flow/feel of the space of the application window. Two projects have served as particular inspiration.

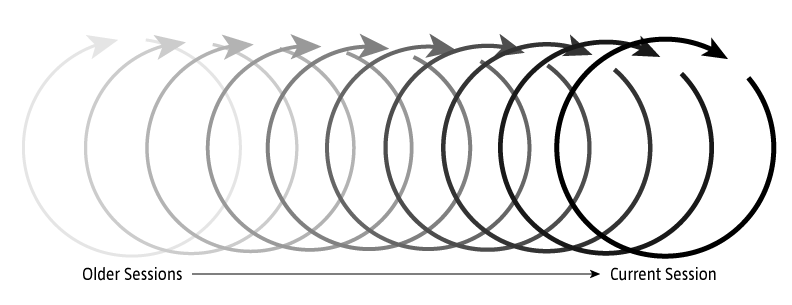

Bloom by Brian Eno is a REALLY early iOS app. What’s compelling here is the looping system which reperforms simple touch/gestural operations. This sort of looping playback affords a really nice method of storing and recontextualizing previous actions w/in a series.

Inkscapes is a recent project out of ITP using OF and iPads to create large-scale drawings. Relevant here is the framing of the drawn elements within a generative system. The interplay between the user and system generated elements provides both depth and serendipity to the piece.

Sam

07 Apr 2013

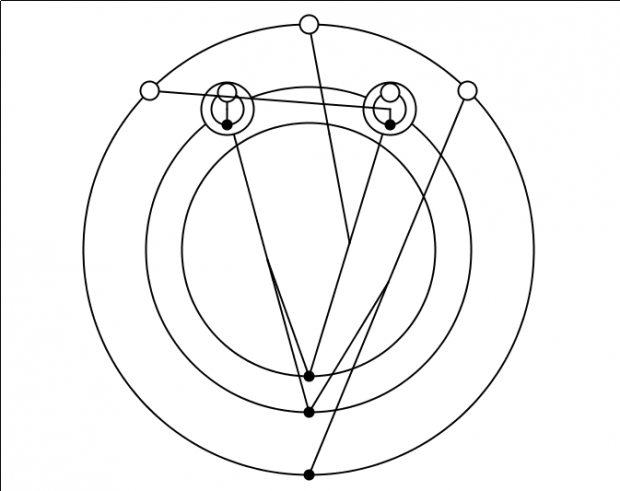

For my capstone project I will be expanding upon my last, GraphLambda, which was a visualization of Lambda Calculus programs.

The current state of the project is more a proof-of-concept than a usable tool. To bring it forward and achieve my original goal of providing an interactive visual programming environment for Lambda Calculus, I need to:

- Improve the layout of the nodes (topological sorting to clarify the order of operations)

- Create some tools for inserting, manipulating and deleting elements of the Lambda expressions

- Provide a clearer link between the text and pictorial representations (perhaps using some sort of synchronized highlighting)

- Provide a way to see the evaluation of the program in steps

- Enable collapsing of expression groups for readability
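The topological sorting mentioned in the first item can be sketched as follows. This is a minimal Python illustration using Kahn's algorithm, not GraphLambda's actual code; the function name `topological_order` and the node names in the usage note are hypothetical.

```python
from collections import deque


def topological_order(edges):
    """Return nodes so that every node appears before the nodes that depend on it.

    edges maps each node to the list of nodes that consume its result.
    Raises ValueError if the graph contains a cycle.
    """
    # Count incoming edges for every node mentioned anywhere in the graph.
    indegree = {}
    for node, successors in edges.items():
        indegree.setdefault(node, 0)
        for succ in successors:
            indegree[succ] = indegree.get(succ, 0) + 1

    # Start with nodes that depend on nothing; sort for a stable layout order.
    ready = deque(sorted(n for n, d in indegree.items() if d == 0))
    order = []
    while ready:
        node = ready.popleft()
        order.append(node)
        for succ in edges.get(node, []):
            indegree[succ] -= 1
            if indegree[succ] == 0:
                ready.append(succ)

    if len(order) != len(indegree):
        raise ValueError("graph has a cycle")
    return order
```

For a hypothetical graph of the expression `λx. f x`, where `f` and `x` feed an application node that in turn feeds the abstraction, `topological_order({"x": ["app_fx"], "f": ["app_fx"], "app_fx": ["lambda_x"], "lambda_x": []})` places the inputs first and the enclosing abstraction last, which could be used to assign nodes to layout columns.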

After achieving these goals, I plan to use the visualizer to record a video introduction to Lambda Calculus in this form. This paper from a researcher at FU Berlin gives a good overview of introductory concepts, and will probably form an outline for my video. I hope in the end to be able to offer this tool to the Computer Science department as a way to help their students understand this confusing topic.

Secondary features to be added as time permits:

- Investigate ways of coloring the objects to clarify relationships in the program structure

- Smooth animations for transitioning between views of the program (insertion/deletion, evaluation)

- Builtins of certain common elements (numbers, logical operations, named functions)

Caroline

07 Apr 2013

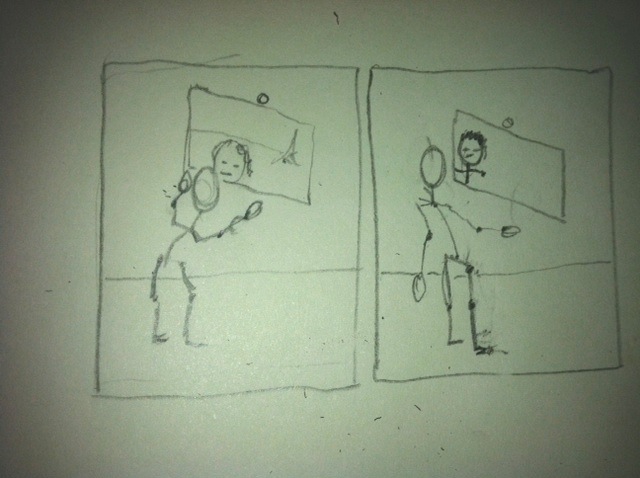

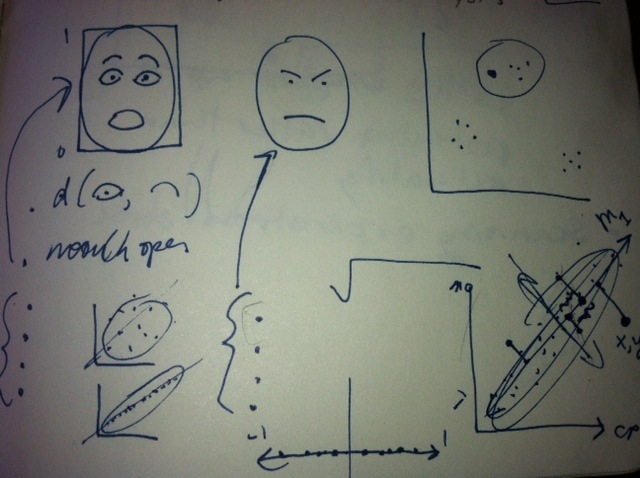

For my final project I want to use rough posture recognition to create a system that triggers a photograph as soon as a pose enters a certain set of parameters. In the above photographs I attempted a rough approximation of this system. The photographs in the top row were taken when faceOSC detected that eyebrow position and mouth width both equaled two, the second row when the system detected that both parameters equaled zero, and the bottom row shows a few samples of the mess-ups.
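The triggering logic could be sketched roughly like this in Python. It assumes the tracker's values arrive as a dictionary of named numbers; the keys `eyebrow` and `mouth_width`, the tolerance of 0.25, and the `PoseTrigger` class are hypothetical choices for illustration, not part of faceOSC. The trigger fires once when the pose enters the target range instead of on every frame inside it.

```python
def pose_matches(measurements, targets, tolerance=0.25):
    """True when every target parameter is present and within tolerance of its target."""
    return all(
        name in measurements and abs(measurements[name] - target) <= tolerance
        for name, target in targets.items()
    )


class PoseTrigger:
    """Fires once each time the pose enters the target range."""

    def __init__(self, targets, tolerance=0.25):
        self.targets = targets
        self.tolerance = tolerance
        self._inside = False  # whether the previous frame was inside the range

    def update(self, measurements):
        """Feed one frame of tracking data; returns True when a photo should be taken."""
        inside = pose_matches(measurements, self.targets, self.tolerance)
        fire = inside and not self._inside
        self._inside = inside
        return fire
```

With `trigger = PoseTrigger({"eyebrow": 2.0, "mouth_width": 2.0})`, each incoming frame is passed to `trigger.update(...)`, and the camera would be fired whenever it returns `True`.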

I have a couple ideas of how I might implement this project at varying levels of complexity:

- Trigger a DSLR camera whenever a face or body is in a particular position. Make a large-format print of faces in a grid in their various positions.

- Record rough face tracking data of a face making a certain gesture. Capture that gesture frame by frame, and then capture photographs that imitate that gesture frame by frame.

- Trigger photographs to be taken when people reach certain pitch/volume combinations. Create an interactive installation that you sing to, which brings up the faces of the people who were singing the closest pitch/volume combination.

All of these ideas involve figuring out how to trigger a DSLR photograph from the computer and storing a database of images based on their various properties. Here are some resources I have come up with to help me figure out how to trigger a DSLR:

- Using Arduino (tutorial page)

- Using an openFrameworks library

- Communicating with an application that already talks to the DSLR. PC only ):

In terms of databasing photographs based on their various properties, Golan recommended looking into principal component analysis, which allows you to reduce many axes of similarity to a manageable number. He drew me a beautiful picture of how it works:
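As a rough sketch of that idea in code, the two-dimensional case can be worked out by hand: find the single direction along which the data varies most, then describe each point by one number along that direction instead of two. The function names below are hypothetical, and a real photo database would have many more properties per image and would rely on a linear algebra library rather than this closed-form 2D shortcut.

```python
import math


def first_principal_axis(points):
    """Return (mean, unit_direction) of the direction of greatest variance for 2D points."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in points) / n
    var_y = sum((y - mean_y) ** 2 for _, y in points) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in points) / n
    # Closed-form orientation of the major axis of a 2x2 covariance matrix.
    angle = 0.5 * math.atan2(2.0 * cov_xy, var_x - var_y)
    return (mean_x, mean_y), (math.cos(angle), math.sin(angle))


def project_to_one_axis(points):
    """Reduce each 2D point to a single coordinate along the first principal axis."""
    (mean_x, mean_y), (ux, uy) = first_principal_axis(points)
    return [(x - mean_x) * ux + (y - mean_y) * uy for x, y in points]
```

Two photographs whose projected coordinates are close would then count as similar along the axis that captures most of the variation.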

I also found an openFrameworks thread that pretty much describes this project. Here are some of the influences I pulled out of that:

Stop Motion by Ole Kristensen

Cheese by Christian Moeller

Ocean_v1. by Wolf Nkole Helzle

Nathan

07 Apr 2013

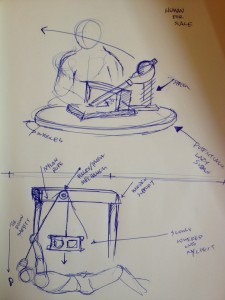

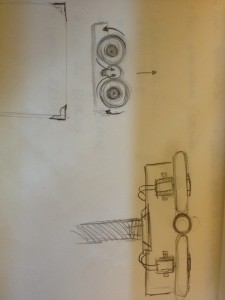

I am currently making work based on the ideas of Apprehension, Impact, Aggressiveness, and Gentleness. Through my own video performance work I have come to create a sculpture language that deals with these thoughts and actions that relate to them. My current interpretation is about purposefully being in the potential danger zone of an active simple machine throwing things at me.

First, I propose constructing 3 separate objects that throw things at me:

1. A Catapult Mechanism

2. A Pitching Mechanism

3. A Simple Lever Drop Mechanism

These mechanisms will throw either:

1. Lightbulbs

2. Hot Wheels

3. Wine Glasses (Maybe… potentially sugar glass if I can get my hands on it. I tested out wine glasses and they fucking hurt).

Second, I want to do multiple video performances of myself interacting with said mechanism. The Pitching Mechanism will be shown at my exhibition Uncontrol, on April 19th 2013 at the Frame Gallery.

Third, I am proposing that all 3 objects are displayed together with the videos of the performance work next to them at the final exhibition of the IACD class.

Examples of other work in Context

Red Apple [Impact] from Nathan Trevino on Vimeo.

Bulb II [Impact] from Nathan Trevino on Vimeo.

Egg [Impact] from Nathan Trevino on Vimeo.

Sex Machine I from Nathan Trevino on Vimeo.

Robb

01 Apr 2013

Joshua Lopez-Binder and I plan on making some gorgeous and outrageously efficient heat sinks.

What is a heat sink, you may ask? A heat sink is an object, typically metal, that is designed to absorb and dissipate heat. They are primarily used to cool hot electrical components.

My vested interest in making a super efficient and highly beautiful heat sink is closely related to my continued, yet slow, pursuit of making a new Cryoscope. I find its current design noisy (due to the fan) and a little static aesthetically. The device needs a large heat sink in order for the solid-state heat pump (Peltier element) to refrigerate the contact surface.

The applications of such a component are not at all limited to my old project.

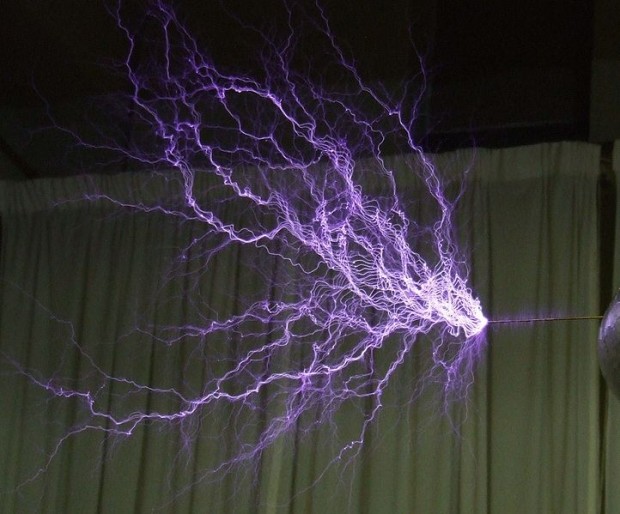

If I can get it to evoke imagery of a lightning storm, I think it would be pretty neat.

Josh and I have some theories. We think that naturally inspired fractal geometries will make very nice heat dumpsters indeed.

I am taken with Lichtenberg figures, the patterns left behind by high-intensity electrical discharges. Here is an example of one on the back of a human who survived a lightning strike.

This looks like it will shape up to be the most formal thing I have pursued since enrolling in art school. I feel that the physical manifestation of thermal radiation of waste is an important aspect of my earlier thermal work. I had tried in the earliest Cryoscope to hide the byproduct heat using aesthetics that were too close to Apple for my comfort.

Lichtenberg ‘Art’

A group of scientists, dubbing themselves Lightening Whisperers, started a company which embeds Lichtenberg figures in acrylic (Plexiglass) blocks using a multi-million volt electron beam and a hammer and nail. The website is a great way to kill an hour looking at these beautiful little desk toys. They also shrink coins.

Josh outlined some very nice works by Nervous System. They make very pretty generative jewelry, among other things. I just spent an hour scrolling on their blog. I always look too far outwards and end up with a post that is too short.

Patt

31 Mar 2013

As a mechanical engineer, I really really want to work with hardware for this final project. I really want to get my hands dirty and machine something. Now that I am a little more familiar with Max/MSP, I am excited to use it for my final project. As someone who loves to explore new places, I was amazed to recently find out that my friend has never been to a lot of places in Pittsburgh even though he’s about to graduate in less than two months. It had never occurred to me that a good number of CMU students live in ‘CMU’ but not in ‘Pittsburgh’. I think Pittsburgh has a lot of potential and is definitely a perfect city for college students. I have grown to love this city more and more every year, and I want people to feel the same way.

For this final project, I want to make something that maps the different places you go to in this city. I am thinking about making a wall map or a box, carving out a ‘map’ of Pittsburgh (it does not have to be the exact map of the city, but it should show different places like restaurants/museums/tourist attractions), and installing LED lights. A GPS will track the places you have been, and the light at each of those places will light up.
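The matching of a GPS reading to a light on the map could be sketched as below. This is a minimal Python illustration, not Max/MSP code: it computes the great-circle (haversine) distance from the current position to each mapped place and returns the LEDs whose place is within a radius. The `PLACES` table, its approximate coordinates, the LED numbering, and the 150 meter radius are all hypothetical values chosen for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters

# Hypothetical mapping: LED index -> (place name, latitude, longitude).
# Coordinates are approximate and for illustration only.
PLACES = {
    0: ("Carnegie Mellon University", 40.4433, -79.9436),
    1: ("Downtown Pittsburgh", 40.4406, -79.9959),
}


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def visited_leds(lat, lon, places=PLACES, radius_m=150.0):
    """Return the sorted LED indices whose place lies within radius_m of the GPS fix."""
    return sorted(
        led
        for led, (_name, plat, plon) in places.items()
        if haversine_m(lat, lon, plat, plon) <= radius_m
    )
```

Each new GPS fix would be passed to `visited_leds(lat, lon)`, and the returned indices would be sent on to whatever drives the LEDs on the physical map.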

The layout and the details of the map might change, but this is just a general idea of what I want to do. Here are some projects that will help me get started.

http://www.creativeapplications.net/maxmsp/skube-tangible-interface-to-last-fm-spotify-radio/

http://www.designsponge.com/2010/09/pittsburgh-city-guide.html