For this project, I collaborated with Caroline Record and Andrew Bueno to make a Sifteo alarm clock. For full details and a video demonstration, click here.

Category Archives: sifteo

Caroline

29 Jan 2013

In this grand collaboration between Andrew Bueno, Erica Lazrus, and Caroline Record, we created a prototype for a Sifteo alarm clock. In case you have never stumbled upon these newfangled little cubes before, Sifteos are the new kid on the block for tangible computing. Sifteos are not a single device, but rather a collection of cubes that are aware of their orientation to one another. Our idea was to create an alarm clock that would only stop ringing when all the cubes were gathered together in a certain orientation. The user could set the level of difficulty by hiding the cubes about their abode for their future sleepy self to collect in the wee hours of the morning. We used two cubes: one for the hours and one for the minutes. Each could be set by tilting the cube upward or downward. We have lots of ideas for how we could improve on our initial prototype. For example, we would like to use PNG fonts, include more cubes, and represent time more accurately.
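The tilt-to-set behaviour described above can be sketched in plain C++. This is only an illustration under stated assumptions, not the code from our repository: the names `Tilt`, `stepField`, `AlarmTime`, `tiltHoursCube`, and `tiltMinutesCube` are hypothetical, and it assumes a 24-hour clock where each tilt moves the value by one step and wraps around at the ends.

```cpp
#include <cassert>

// Hypothetical names for illustration; none of these come from the Sifteo SDK
// or from the SifteoAlarmClock repository.
enum class Tilt { Down = -1, Level = 0, Up = 1 };

// Step a clock field by one unit in the tilt direction,
// wrapping so the result stays within [0, modulus).
int stepField(int value, Tilt tilt, int modulus) {
    assert(modulus > 0 && value >= 0 && value < modulus);
    return (value + static_cast<int>(tilt) + modulus) % modulus;
}

struct AlarmTime {
    int hours = 0;    // 0..23, shown on the hours cube (assumed 24-hour clock)
    int minutes = 0;  // 0..59, shown on the minutes cube
};

// One cube edits the hours, the other edits the minutes.
void tiltHoursCube(AlarmTime& t, Tilt tilt) {
    t.hours = stepField(t.hours, tilt, 24);
}

void tiltMinutesCube(AlarmTime& t, Tilt tilt) {
    t.minutes = stepField(t.minutes, tilt, 60);
}
```

Tilting the hours cube downward from 0 wraps to 23 in this sketch, so the user never hits a dead end while setting the time.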

code on github: https://github.com/crecord/SifteoAlarmClock

Erica: Erica was MVP, and bless her soul for it. She certainly did the most coding and managed to figure out the essentials of how exactly we could get this alarm to work, and she tirelessly built off Bueno’s timing mechanism to figure out how to represent time without the Sifteo using too much memory every time it checked how many minutes and seconds were left on the clock.

Caroline: Caroline was our motion-mistress, and implemented our system for setting the alarm based on the movement of the Sifteo. She also came up with the original idea, and so deserves a ton of credit in that respect. Caroline also impeded the process by bothering Erica and Bueno to explain the workings of C++.

Bueno: During our short brainstorming process, Bueno suggested that, if the alarm were to have different difficulty settings, we consider solving anagrams as a possible challenge for the user. When we actually got down into the coding, it was often Bueno’s job to sift through the documentation/developer forums in order to figure out answers to some of our confusion concerning how exactly we should go about coding the darn things. In the end, Bueno figured out how exactly we could go about ensuring the Sifteo could keep track of time.

Sifteo Alarm Clock from Caroline Record on Vimeo.

Alan

28 Jan 2013

The Github Repo: https://github.com/chinesecold/Rock-Scissor-Paper

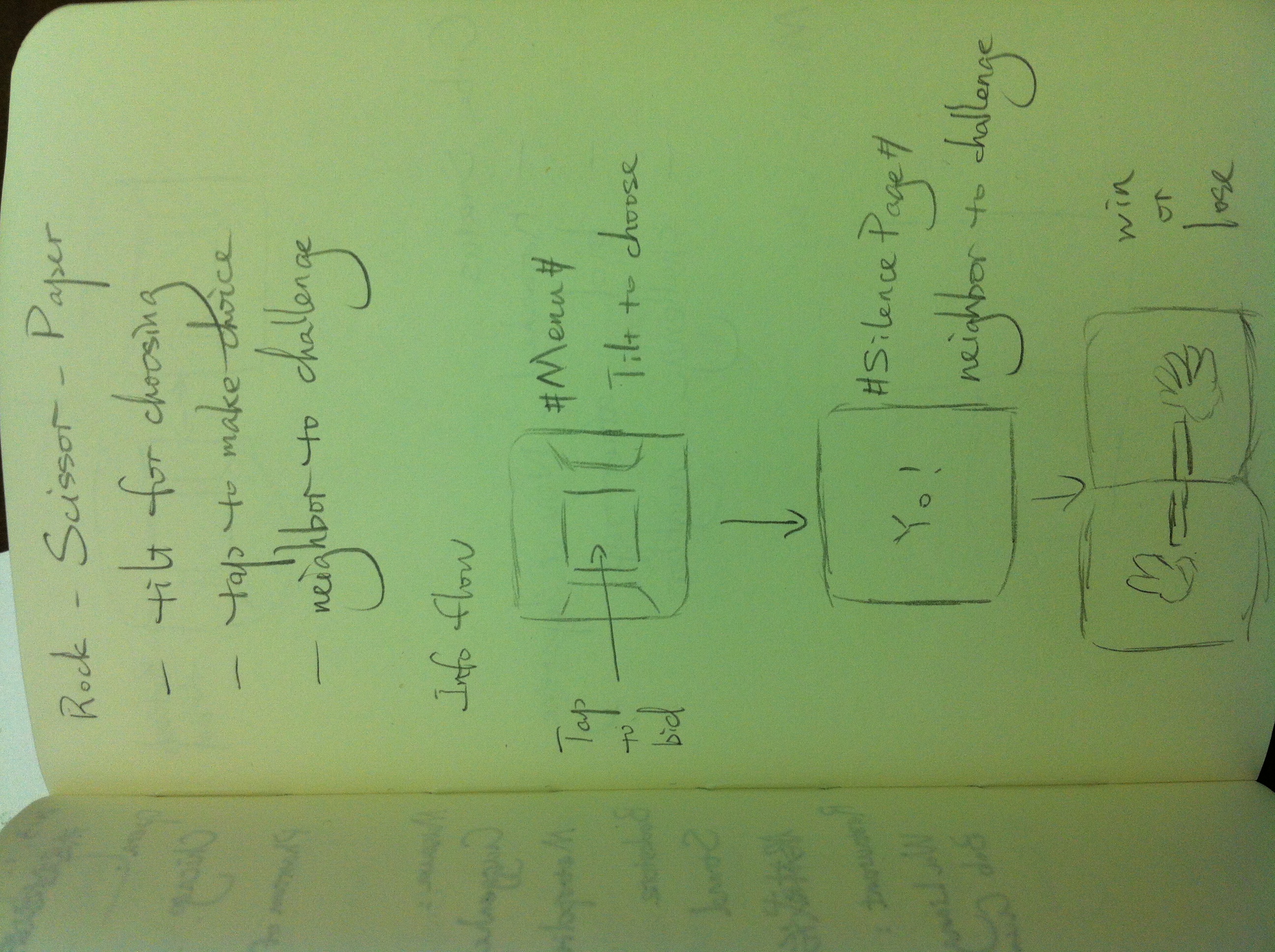

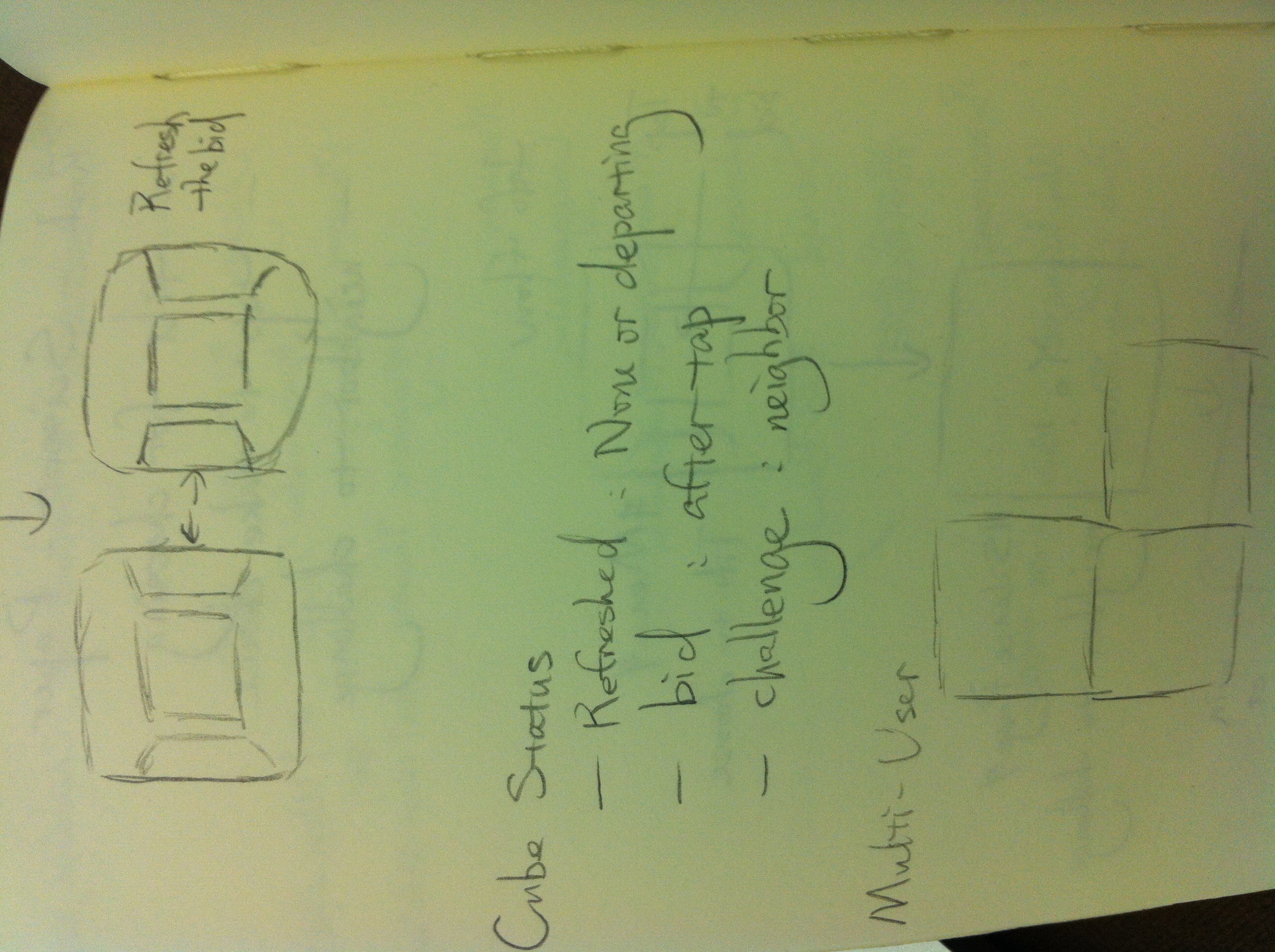

This project implements a Rock-Paper-Scissors game on Sifteo devices. Each user plays with one cube, choosing a move by tilting and tapping, and challenges another player by placing their cube next to that player’s cube.
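Once two neighboring cubes have each locked in a move, deciding the round is a small piece of logic. The sketch below is only an illustration, not the code from the repository: the names `Choice`, `Outcome`, and `resolve` are hypothetical, and it leaves out all of the cube input handling.

```cpp
// Hypothetical names for illustration; not taken from the project repository.
// The order Rock, Paper, Scissors matters: each choice beats the one before it.
enum class Choice { Rock = 0, Paper = 1, Scissors = 2 };
enum class Outcome { Draw, FirstWins, SecondWins };

// Decide a round between two players.
Outcome resolve(Choice first, Choice second) {
    if (first == second) {
        return Outcome::Draw;
    }
    // With the cyclic order above, the first player wins exactly when their
    // choice is one step ahead of the second player's (modulo 3).
    const int diff =
        (static_cast<int>(first) - static_cast<int>(second) + 3) % 3;
    return diff == 1 ? Outcome::FirstWins : Outcome::SecondWins;
}
```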

Andy

28 Jan 2013

Github: https://github.com/andybiar/SifteoMusicBlocks

My Sifteo project ended up being the one that I am most proud of out of the four. I created a small step sequencer and composition system for Sifteo. The starting pitch and tempo are set at compile time, and from then on there is a master cube that will always play the starting pitch on the downbeat. From there you can add up to 7 other cubes into the composition. Adding cubes on top of other cubes will create chords, whereas adding cubes to the sides of other cubes will create melodies. The notes on the other cubes depend on the pitch of the cube you are connecting to (the base pitch), the side of the cube you are placing (which determines whether to make a Major 2nd, Minor 3rd, Major 3rd, or Perfect 4th using Pythagorean tuning ratios on sine waves), and whether you are adding that interval to or subtracting it from the base pitch. It’s pretty fun. Unfortunately, all the Sifteo cubes in the STUDIO died, so I couldn’t test on the hardware for this round of documentation.
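For anyone curious about the interval math, here is a minimal sketch of how a neighbor's pitch could be derived from the base pitch. It is an illustration rather than the code in the repository: the names `Interval`, `pythagoreanRatio`, and `neighborFrequency` are hypothetical, and the sketch assumes that "adding" an interval means multiplying the base frequency by the ratio while "subtracting" means dividing by it. The ratios themselves are the standard Pythagorean ones.

```cpp
#include <cassert>

// Hypothetical names for illustration; not taken from SifteoMusicBlocks.
enum class Interval { MajorSecond, MinorThird, MajorThird, PerfectFourth };

// Standard Pythagorean tuning ratios for the four intervals named in the post.
double pythagoreanRatio(Interval interval) {
    switch (interval) {
        case Interval::MajorSecond:   return 9.0 / 8.0;
        case Interval::MinorThird:    return 32.0 / 27.0;
        case Interval::MajorThird:    return 81.0 / 64.0;
        case Interval::PerfectFourth: return 4.0 / 3.0;
    }
    return 1.0;  // not reached for valid enum values
}

// Going up an interval multiplies the base frequency by its ratio;
// going down divides by the same ratio.
double neighborFrequency(double baseHz, Interval interval, bool ascending) {
    assert(baseHz > 0.0);
    const double ratio = pythagoreanRatio(interval);
    return ascending ? baseHz * ratio : baseHz / ratio;
}
```

With a 440 Hz base pitch, an ascending Major 2nd in this sketch lands on 495 Hz.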

Elwin

28 Jan 2013

A very basic program utilizing the shake function of the Sifteo cubes. An animation is played after the cube has been shaken. The program is based on the Stars example.

This part was also tough because I had never worked with the Sifteo SDK and I don’t have any experience in C++. I took a fair amount of time just trying to understand the syntax and the way things are set up. I had some ideas before I dived into the Sifteo SDK, and I quickly realized that it would be very tough to get something working. Even getting an animation into the cube took quite some time due to the specifications of the sprite itself.

Github link: https://github.com/BlueSpiritbox/p1_sifteo

*Sorry for the sound. Wasn’t aware that it was recording from my mic as well.

Patt

28 Jan 2013

While I think that Pittsburgh is trying to make up for its last ‘winter’, I have been inspired by the white snow everywhere I go this week. I modified the Stars example to make three snow globes for this Sifteo project. I used a photo of a mountain as the first layer background (bg0), and the photos of snow and a skating duck as sprites. The snow keeps falling every time the image is updated, but the duck stays stationary. If I had more time, I would like to move the duck back and forth from one cube to another. I also want to produce more snow every time I shake the cubes. Oh, and I want to add audio of the song ‘Let it snow’.

Here’s the code: https://github.com/pattvira/sifteoSnowglobe

John

28 Jan 2013

Oh Sifteo, you are too cruel. Seriously, having never worked with any system remotely like this before, I found this project to be completely unapproachable. The documentation for the SDK is lacking and there is significant overhead to get started on these projects. I sort of feel like a solid foundational understanding of C++ is really the only way to travel in Sifteo world.

By the end I figured out the events system pretty well. My demo is a first foray into getting something up and running with (limited) intelligible value. Basically I’m displaying the accelerometer data coming off the cubes graphically. It’s a simple application to be sure, but it took my whole brain several hours to get this far!

Git it: https://github.com/johngruen/myFirstSifteo

Video pending Vimeo: https://vimeo.com/58357777

Ziyun

28 Jan 2013

Anna

28 Jan 2013

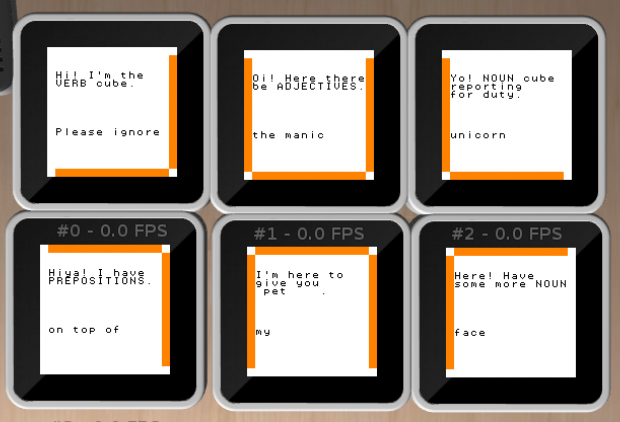

My original idea for a Sifteo app was a game where each cube would display a word belonging to a different part of speech (noun, verb, adjective, etc.). You’d have some amount of time to piece the cubes together into a logical sentence before they would all switch to some other word/part of speech, and you’d have to begin from scratch.

Turns out the Sifteo SDK was a lot harder to understand than I hoped it would be. Given that this is my first week ever looking at C++, much less an undocumented offshoot of it, I had to adjust my goals significantly. (Thanks, Golan, for your help in understanding what I was working with!)

This version takes the original sensors demo and modifies the “shake” detection (through acceleration sensing) so that a random word will be displayed on each cube when you meddle with it. You can arrange the words to form (hopefully) amusing sentences. The name of the app comes from some somewhat taboo ‘dice’ you might have seen at a party sometime in your existence. There is nothing very taboo about this app.

If I were to take this project forward, I would like to be able to load background images or background colors into each cube, depending on the part of speech displayed. I eventually came to realize that I was having a hard time with this because I’m currently using bg0_ROM mode to create the text, and .png background assets weren’t playing very nicely with that mode. Given more time, I would rewrite the whole thing to work in a different draw mode, with the displayed text done using png files.

Dev

28 Jan 2013

This was the part of this assignment I had the most trouble with! I found the Sifteo docs to be only slightly helpful, and I should have foreseen this and started earlier.

I began this assignment thinking I would do something like a word game for the blind. The blocks would each represent a letter. When tapped, a block would say what its letter was. If the letters were arranged from left to right in order, then an affirmative sound would be played, and the next word would be automatically scrambled.

I was able to get the word split into letters and divided among cubes, but when it came time for me to see if the user had spelt the word out correctly I ran into a problem where I was not understanding how to reference each cube individually. This lack of understanding caused me to spend a bunch of time looking over the SDK website, which had few answers.
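In hindsight, the check I was stuck on can be sketched without any SDK at all, as long as each cube can report which cube touches its right side. The sketch below is only an illustration under that assumption: `CubeState`, `readLeftToRight`, and `spellsWord` are hypothetical names, the right-neighbor index is a stand-in for whatever the SDK actually reports, and cubes are referenced individually by their position in a vector.

```cpp
#include <string>
#include <vector>

// Hypothetical model for illustration; this is not the Sifteo SDK.
struct CubeState {
    char letter;        // letter assigned to this cube
    int rightNeighbor;  // index of the cube touching the right side, or -1
};

// Read the longest left-to-right row of touching cubes as a string.
std::string readLeftToRight(const std::vector<CubeState>& cubes) {
    const int n = static_cast<int>(cubes.size());

    // A cube starts a row if no other cube names it as a right neighbor.
    std::vector<bool> hasLeft(n, false);
    for (const CubeState& cube : cubes) {
        if (cube.rightNeighbor >= 0 && cube.rightNeighbor < n) {
            hasLeft[cube.rightNeighbor] = true;
        }
    }

    std::string longest;
    for (int start = 0; start < n; ++start) {
        if (hasLeft[start]) {
            continue;
        }
        // Follow right-neighbor links; the step limit guards against bad data.
        std::string row;
        int steps = 0;
        for (int i = start; i >= 0 && i < n && steps < n;
             i = cubes[i].rightNeighbor, ++steps) {
            row += cubes[i].letter;
        }
        if (row.size() > longest.size()) {
            longest = row;
        }
    }
    return longest;
}

// The word is spelled only when every cube is in one row in the right order.
bool spellsWord(const std::vector<CubeState>& cubes, const std::string& word) {
    return cubes.size() == word.size() && readLeftToRight(cubes) == word;
}
```

For example, three cubes holding c, t, and a, where the c cube's right neighbor is the a cube and the a cube's right neighbor is the t cube, read as "cat" no matter which order the cubes are stored in.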

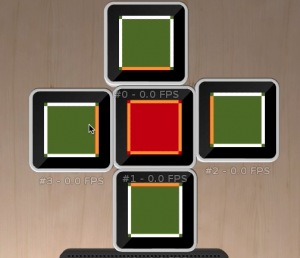

Ultimately, given the time, I decided to scrap the initial idea for a simpler one that was closer to an example I fully understood – the sensors example. I decided to make use of screen colors and assign these based on cube adjacencies. At first I had some problems with the background colors, but managed to set these in the end.

For a bit of extra interaction I decided to make use of touch. When a cube is touched, it changes the entire color scheme, while maintaining the fact that the color coding is based on adjacencies.

Overall the effect looks like it would be fun for a baby or a cat.