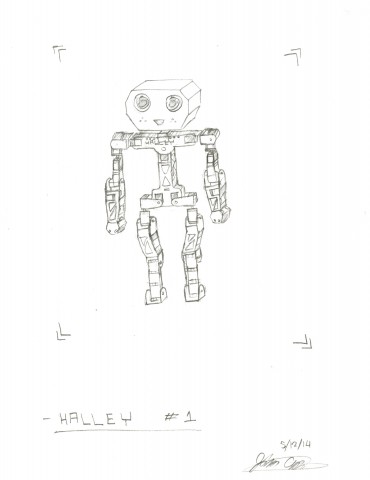

Assignment 13 Sketch

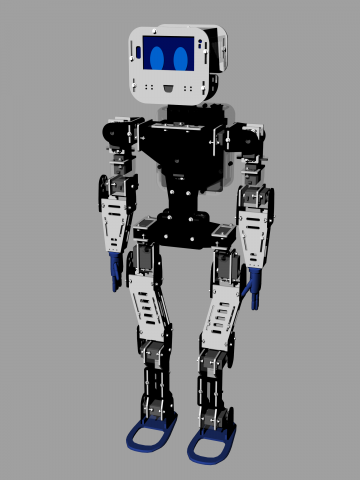

For this final project, I intend to construct a 2.6-foot humanoid robot named Halley whose purpose is to emulate a single student in a classroom. The robot would be remote-controlled from another computer and able to perform tasks such as raising a hand and asking questions. Halley would also have an Android phone for a face, allowing it to display a wide range of emotions.

Here is a concept sketch:

And here is a concept rendering:

This would probably not be possible to build in two weeks. Fortunately, I have been planning it since the beginning of last year and assembling it since the beginning of this semester, thanks to the support of an FRFAF Microgrant. Raw assembly is about 80% done, so I can finish it just in time for the final project.

While I do not expect to complete the full functionality as a robot student until after Winter Break, I do think I can get some interesting behaviors working. Primarily, I expect to map actions to certain keys so that pressing a key makes the robot play the corresponding action. The actions I will probably set up include shaking hands, raising a hand, looking up/down/left/right, standing up, and sitting down.
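The key mapping described above could be sketched roughly as follows. This is only an illustration: the key choices, the action names, and the `send` callback (standing in for whatever link eventually carries commands to the robot) are all assumptions, not Halley's actual control code.

```python
from typing import Callable, Dict, List

# Hypothetical key bindings; the actions come from the list above,
# but the specific keys and action names are assumed for illustration.
KEY_TO_ACTION: Dict[str, str] = {
    "h": "shake_hands",
    "r": "raise_hand",
    "w": "look_up",
    "s": "look_down",
    "a": "look_left",
    "d": "look_right",
    "u": "stand_up",
    "j": "sit_down",
}


def handle_key(key: str, send: Callable[[str], None]) -> bool:
    """Look up the action bound to `key` and pass it to `send`.

    `send` is a placeholder for the real transport to the robot.
    Returns True if the key was bound to an action, False otherwise.
    """
    action = KEY_TO_ACTION.get(key.lower())
    if action is None:
        return False  # unbound keys are ignored
    send(action)
    return True


if __name__ == "__main__":
    # Collect the actions in a list instead of sending them to a robot.
    sent: List[str] = []
    for key in "rwx":
        handle_key(key, sent.append)
    print(sent)
```

Keeping the bindings in a single dictionary means that adding or remapping an action later is a one-line change, and the same table can be reused regardless of how key presses are actually captured.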

The joke in all this, as I see it, is that students are so detached in some classrooms and lecture halls that they may as well be robots.