Machine-Assisted Creativity

“1980s photo of full body shot of a high fashion blobfish top model creature”, made with Midjourney

This unit has the following 8 components:

Due Thursday, 1/18:

- #1.1-LookingOutwards #1: Machine Learning and the Arts (45 minutes)

- #1.2-Exercise: Image-to-Image Translation with Pix2Pix (10 minutes)

- #1.3-Exercise: Text Synthesis with InferKit and NarrativeDevice (10 minutes)

Due Tuesday, 1/23:

- #1.4-Exercise: Text-to-Image Synthesis with Midjourney (60 minutes)

- #1.5-Exercise: Image Outpainting with Runway.ML Infinite Image (30 minutes)

Due Thursday, 1/25:

- #1.6: Project Proposal (10 minutes)

- #1.7: Reading Response (30 minutes)

Due Thursday, 2/1:

- #1.8. Self-Publishing an AI-Assisted Chapbook (6-12 hours).

This is the primary project for this unit; you will present it in a class critique.

1.1. Looking Outwards #01: Machine Learning and the Arts

(45 minutes, due Thursday 1/18). A “Looking Outwards” report is a brief post that reports on a project that interests you. Your job is to browse blogs and other sources, such as those linked below, and then report on an artwork or other project that you haven’t seen before. The point of the assignment is to deepen your familiarity with the field of AI in new media art — and to develop your own personal research practice.

The following websites showcase more than a thousand artworks that make use of machine learning and/or ‘AI’ techniques. Please browse these websites for about 30 minutes. After viewing at least five projects, select one to feature in a Looking Outwards report.

- MLArt.co Gallery (a collection of approximately 500 experiments curated by Emil Wallner).

- AI Art Gallery (annual exhibitions of the NeurIPS Workshop on Machine Learning for Creativity and Design: 2020, 2019, 2018, 2017)

- Chrome Experiments (a showcase of experiments, commissioned by Google, that explore machine learning through pictures, drawings, language, and music).

- CreativeApplications.net: #AI (projects tagged with #AI on a leading blog about media arts and design. To get past the paywall, use the login information provided in the #key-information channel of our Discord.)

- HuggingFace (a large repository of laboratory-grade experimental AI tools, not yet commercialized)

Now:

- Create a post in the Discord channel, #1-1-looking-outwards

- Include an image of the project you selected.

- Include a URL link to information about the project.

- Write a sentence describing the project. (What is it?)

- Write another sentence about why you found the project interesting.

- Write a question, concern, or critical observation that you have about the project.

- Note: If two students choose to write about the exact same project, neither student will get credit. This constraint is meant to encourage you to look beyond the first page of results.

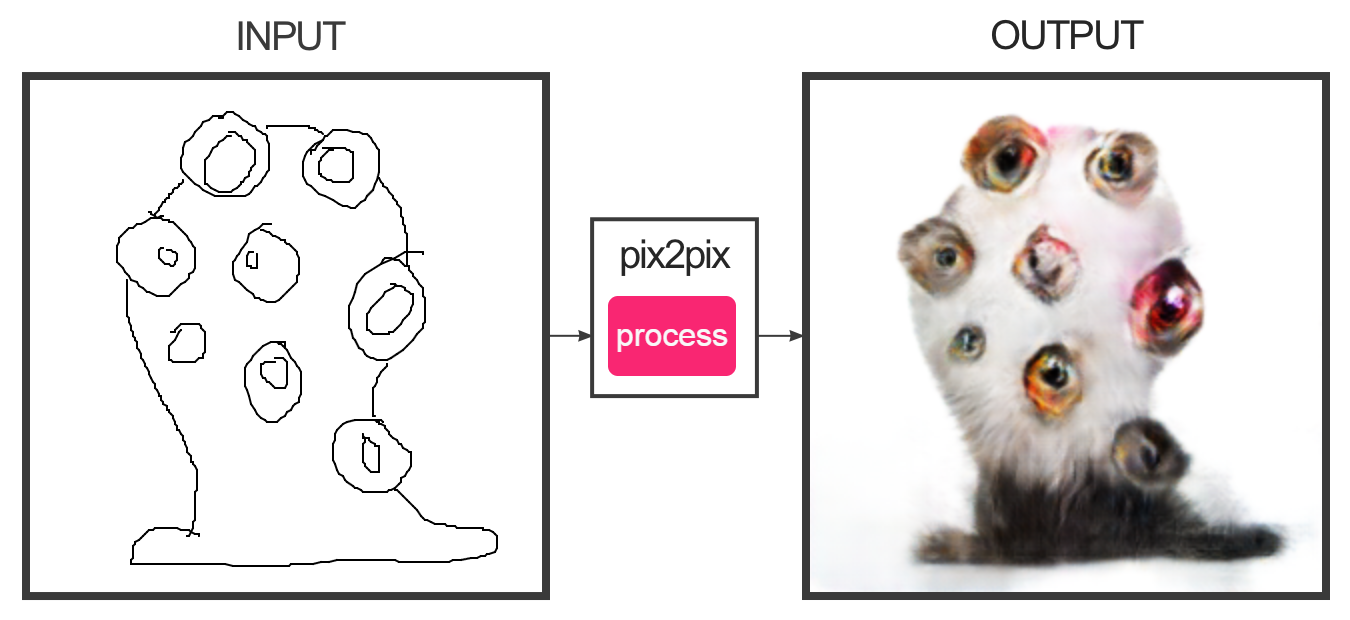

1.2. Exercise: Image-to-Image Translation with Pix2Pix

(10 minutes, due Thursday 1/18) In this brief exercise, you are asked to spend a few minutes with the Image-to-Image (Pix2Pix) online demo app by Christopher Hesse. Note that this app is from 2017, a very long time ago in AI terms, and is comparatively rudimentary. Experiment with edges2cats and, if you wish, some of the other interactive demonstrations (such as facades, edges2shoes, etc.). You are asked to:

- Create a post in the Discord channel, #1-2-pix2pix

- Create 2 or 3 different designs. Screenshot your work so as to show both your input and the system’s output.

- Embed your favorite results into the Discord post.

- Write a reflective sentence about your experience using this tool.

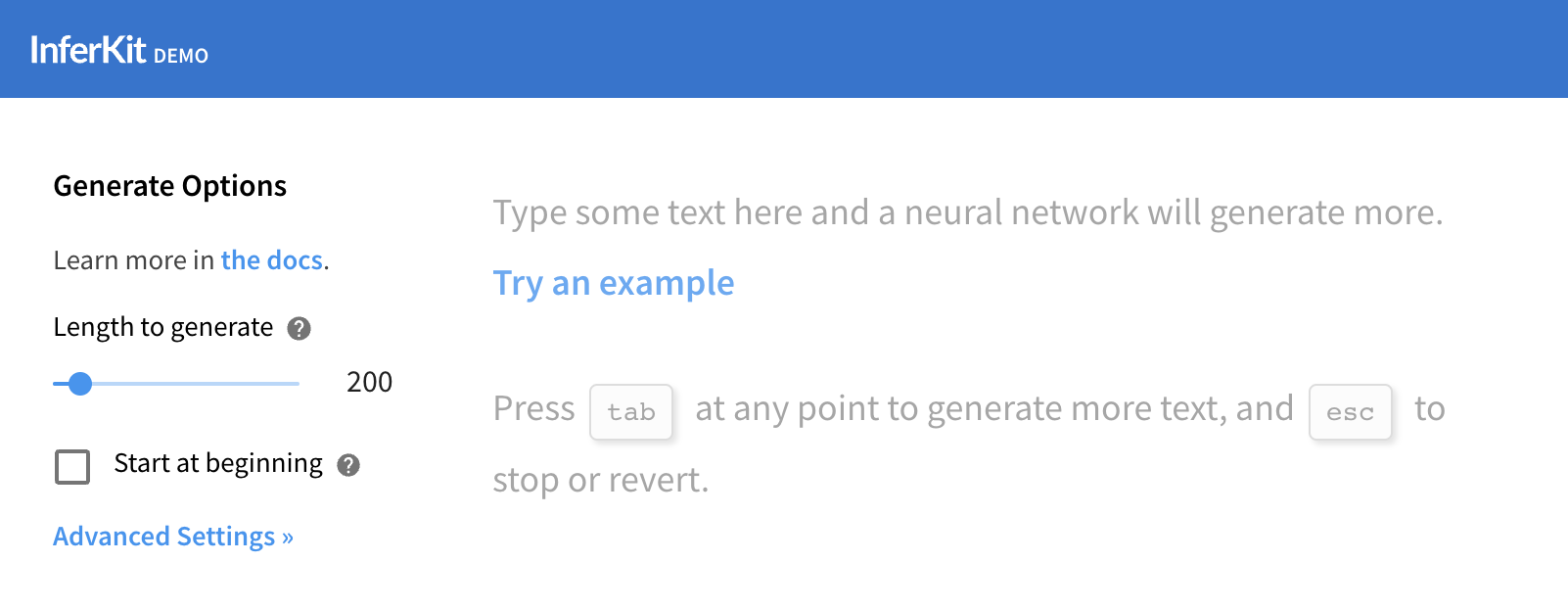

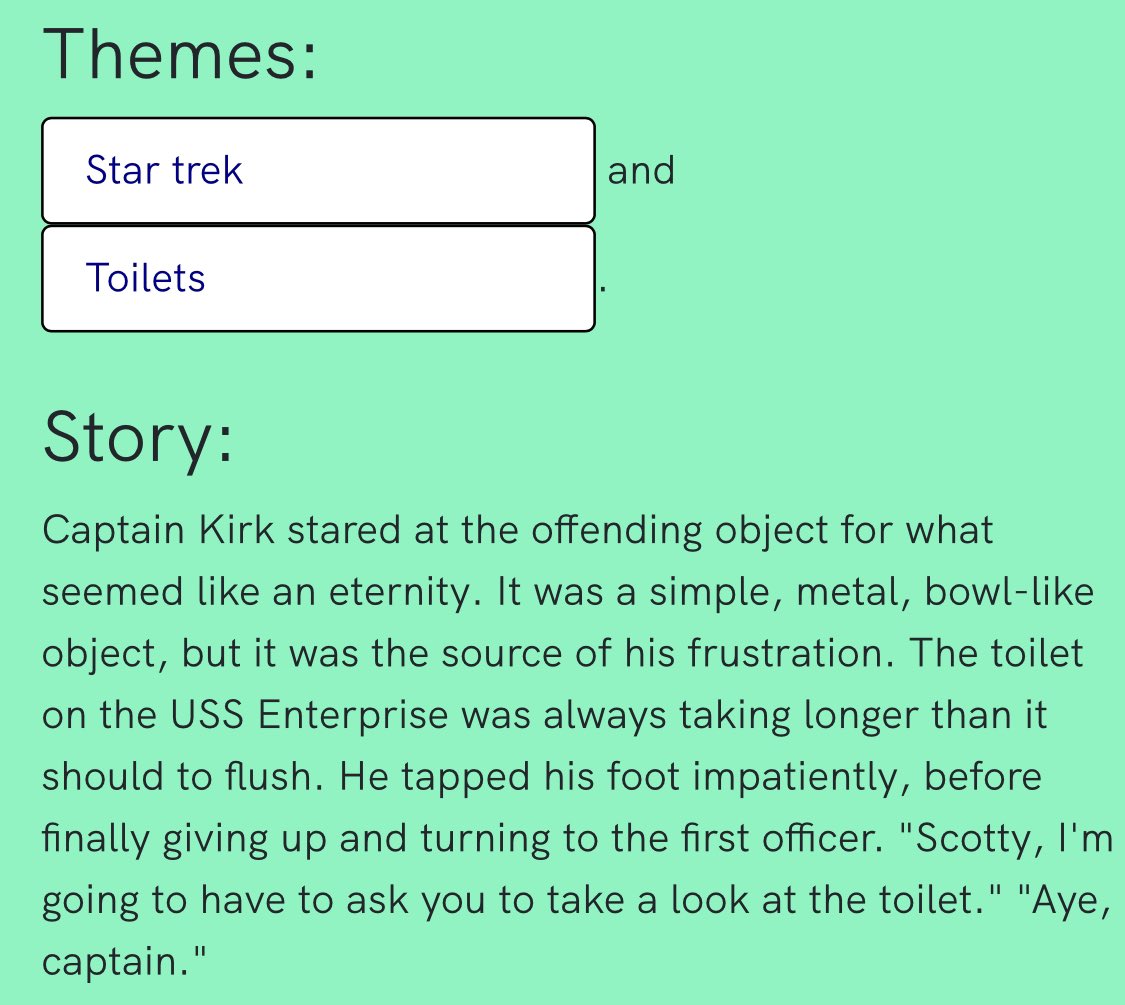

1.3. Exercise: Text Synthesis with InferKit and NarrativeDevice

(10 minutes, due Thursday 1/18) In this brief exercise, you will use two different browser-based interfaces to OpenAI’s GPT-3 language model to generate some text that intrigues you: the InferKit tool by Adam King, and the NarrativeDevice by Rodolfo Ocampo (you’ll need to register for a free token).

Now:

- Experiment with one or both of these tools until you produce a text fragment that you find interesting.

- Create a Discord post in the channel #1-3-text-synthesis.

- Embed your text experiment in a Discord post. Use boldface to indicate the words which you provided as inputs to the system.

- Also in your post, write a reflective sentence or two about your experience using the tool(s).

1.4. Exercise: Text-to-Image Synthesis with Midjourney

(60 minutes, due Tuesday, 1/23) In this exercise, you will use a machine learning system to generate some images that intrigue you. This system has (controversially) been trained on billions of images scraped from the Internet. This very entertaining 10-minute Last Week Tonight video gives some good perspective; I encourage you to watch it.

You have been provided with access to a paid account for Midjourney, an image synthesis service. This is accessed through the Discord account of my alter ego, gorgonzola. Login details for this account are available in our course Discord. NOTE: This Discord+Midjourney account is a communally shared resource for the 16 students in our class, and sharing it with you is an act of trust.

If you have issues accessing Midjourney, for whatever reason, there are several comparable alternatives. The following services all work to generate images from textual descriptions, using similar algorithms, but provide different levels of image quality, speed, user interface controls, and interestingness. Most have a free tier (that you may quickly exhaust). These include DreamStudio (which has a nice UI), HuggingFace StableDiffusion, DALL-E 2, ArtBreeder, Craiyon, Pixray Text2Image, SimpleStable, and MindsEye.

Now,

- Read the Midjourney Quick Start Guide and, most especially, the documentation on “Imagine Parameters”.

- Tinker! Try experimenting with techniques like: “inspiring” an image with a seed image, using different model versions, making non-square images, etc.

- Generate a half-dozen or so images, and present them in the Discord post. What you make is up to you, but I challenge you to make something that doesn’t just look like a character design plucked from DeviantArt (that’s too easy). The weirder the better. Try to work a prompt through at least 5 iterations of revision.

- Create a Discord post in the channel #1-4-image-synthesis

- Embed your images in the post.

- For each image, include the text of the prompt that you used to create it.

- Write a few sentences of reflective commentary. Were you able to ‘guide’ the system? What discoveries did you make?

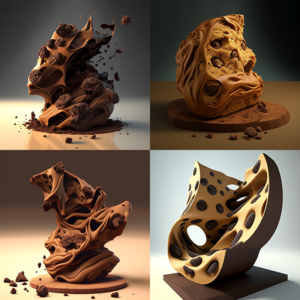

Tip 1: Note that Midjourney provides some powerful commands to guide the AI. These are discussed in the documentation on “Imagine Parameters”, and include things like controlling the image aspect ratio, resolution, visual quality, inspiration source image, and (importantly) choice of AI model. Midjourney has evolved a lot: The latest model version (v6) produces extremely high-fidelity results, but the earlier versions can make weirder stuff. Below are v1 and v4 responses to the same prompt, “chocolate chip sculpture”:

Tip 2: The following additional guides may be helpful; they are packed full of practical tips. In particular, you may find it helpful to use what Kate Compton has called “seasonings” (discussed in this Twitter thread): extra phrases and hashtags (like “trending on ArtStation”) that hint and inflect the image synthesis in interesting ways.

- Midjourney’s official “Tips for Prompts”

- Crafting Exceptional Prompts for Midjourney

- Prompt-Engineering Tips

- Kate Compton on “Seasonings”

- Kevin Slavin on virtual photography

- Into prompt engineering for A.I.
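As a rough illustration of the “Imagine Parameters” mentioned in Tip 1, here is a minimal Python sketch that assembles a prompt string you could paste after `/imagine` in Discord. The helper `build_imagine_prompt` is hypothetical (it is not part of any Midjourney tool), and the `--ar`, `--v`, and `--seed` flags reflect the Midjourney documentation as I understand it; parameter names and the values each model version accepts do change, so check the current docs before relying on them.

```python
def build_imagine_prompt(text, aspect=None, version=None, seed=None):
    """Assemble a Midjourney-style prompt string (hypothetical helper).

    aspect  -- optional (width, height) ratio, emitted as "--ar W:H"
    version -- optional model version number, emitted as "--v N"
    seed    -- optional integer seed, emitted as "--seed N"
    """
    parts = [text.strip()]
    if aspect is not None:
        width, height = aspect
        parts.append(f"--ar {width}:{height}")
    if version is not None:
        parts.append(f"--v {version}")
    if seed is not None:
        parts.append(f"--seed {seed}")
    return " ".join(parts)


# Same prompt, two model versions, so the results can be compared side by side.
for v in (1, 4):
    print(build_imagine_prompt("chocolate chip sculpture", version=v))

# A non-square variant of the same prompt.
print(build_imagine_prompt("chocolate chip sculpture", aspect=(3, 2), version=4))
```

Keeping the text fixed and changing one parameter at a time makes it easier to see what each parameter actually contributes to the image.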

In using our communal Midjourney (Discord) account, please adhere to the following:

- Do not share the login details of this account with anyone.

- Do not modify any of the account settings (password, etc.)!

- Do not use this account to connect to any other Discord servers.

- Do not use this account to communicate with other people on Discord, harass them, etc. You must adhere to our course Code of Conduct.

- You must adhere to the Midjourney terms of service. If you get booted off for doing bad things (like generating offensive content), it affects our whole class.

- I’ll need to help you log in the first time by affirming that your login is kosher.

- If you need significantly more images for your project 1.8 (below), talk to me.

1.5. Exercise: Image Outpainting with Runway.ML

(30 minutes, due Tuesday, 1/23). You have been provided with account details to access Runway.ML, a powerful suite of machine-learning-based artist tools. In this exercise, you are asked to use the Runway.ML “infinite image” and/or “expand image” tool to provocatively extend an image of your choice. Some possible images you could expand are:

- One or more of your own artworks

- One of the images you generated with Midjourney

- A famous photograph or painting (please be thoughtful)

- (This list is representative and not exhaustive.)

- (Note that it’s also possible to create a composition that connects multiple images.)

Now,

- Watch the 2-minute video above, which helpfully explains how to use the tool.

- Make a large, high-resolution extended image. Consider making a tableau vivant. Compare the results of Runway’s “infinite image” tool (which asks for a text prompt) versus Runway’s “expand image” tool.

- Create a Discord post in the channel #1-5-outpainting, and embed your image.

- Also embed the “original” (seed) image that you extended, so we can better understand what you did.

- Write a few sentences of process description. What was the initial “kernel” image or images that you started from? Why did you choose this? Did you make any discoveries in your process?

- Write a few sentences of reflective commentary. What do you appreciate about the result, and what would you change?

1.6. Project Proposal

(10 minutes, due Thursday, 1/25). The purpose of this assignment is to keep you on track.

- Read ahead to the prompt for Project 1.8, below.

- Create a Discord post in the channel, #1-6-proposal.

- Write just a sentence or two that describes, in broad terms, the type of book you plan to make for this project. Optionally, please feel free to share some early results, or a brief update about your progress on the project (if any).

1.7. Reading Response

(30 minutes, due by Thursday, 1/25). By this point, you have had a little experience using AI tools. Below, you are provided with two short readings about AI+Art, and asked to respond in a couple of sentences.

- Hertzmann, Aaron. “How Photography Became An Art Form”. Aaronhertzmann.com blog, 08/29/2022. (8 minute read). [PDF backup copy here.]

- Popova, Maria. “Music, Feeling, and Transcendence: Nick Cave on AI, Awe, and the Splendor of Our Human Limitations”. The Marginalian, 1/24/2019. (5 minute read). [PDF backup copy here.]

Here, respectively, are optimistic and pessimistic takes on AI+art: Aaron Hertzmann (an imaging scientist at Adobe) suggests that, like photography, AI will lead to new art forms, and reinvigorate old ones. Nick Cave (a legendary post-punk songwriter) asserts that great art arises from the audacity to transcend human limitations, adding, “AI would have the capacity to write a good song, but not a great one. It lacks the nerve.”

Now,

- Read the two articles above.

- Create a Discord post, in the #1-7-reading channel.

- Write a sentence or two describing something that stuck with you from either of the articles.

- Write a couple of sentences of reflection on these readings. How do you reconcile these points of view? How can we make great art—not merely good art—with AI? What might “nerve” look like if you’re using (and not avoiding) AI tools?

1.8. Self-Publishing an AI-Assisted Chapbook

What We’re Doing and Why

(6-10 hours, due Thursday 2/1). Your challenge is to use one or more of the above techniques to create an illustrated, AI-assisted chapbook, and to self-publish this book through Lulu.com, an online vendor. Why are we doing this? The aims of this project are several:

- (Ab)using AI creatively requires a very different way of thinking. I feel you should develop an understanding of the texture and possibilities of this new medium, and that you should have the skill to guide AI systems to produce compelling cultural objects. Non-artists like this guy are using AI to make stuff that superficially ‘looks good’; as an artist, you really ought to be better than them.

- You will need to use Adobe InDesign to lay out the book, and you will need to navigate Lulu.com’s system for self-publishing. While your work with ChatGPT and Midjourney may be highly conceptual and experimental, your work with InDesign and the online digital publishing process will be bluntly practical.

Our goal is to explore the potential of these tools as assistants or prosthetics for your creativity. For this reason, you are invited/permitted to hand-edit the texts and/or images that you generate, in any way you deem necessary. Some possible types of books you might create include (but are not limited to):

Nonfiction: Abecedarium (alphabet book, e.g. “A is for Apple”, etc.), Art monograph, Autobiography, Biography, Catalogue, Coffee-table Travel Book, Crafts/hobbies, Cookbook, Dossier, Diary, Dictionary, Encyclopedia, Guide, History, Home and garden, Humor, Journal, Memoir, Philosophy, Prayer, Religion, Textbook, True crime, Review, Science, Self help, Sports and leisure, Travel

Fiction: Action and adventure, Alternate history, Anthology, Chick lit, Children’s, Comic book, Coming-of-age, Crime, Drama, Fairytale, Graphic novel, Historical fiction, Horror, Mystery, Paranormal romance, Picture book, Poetry, Romance, Satire, Science fiction, Suspense, Thriller, Western, Young adult

How You Will Do It

- Your book may be: (A) all text; (B) all images; or (C) any mixture of text and images. You should use AI to assist in generating the images, the writing, or both.

- To generate images, you may use Midjourney, but you are also welcome to use other AI imaging tools including DreamStudio, HuggingFace StableDiffusion, DALL-E 2, ArtBreeder, Craiyon, Pixray Text2Image, SimpleStable, cleanup.pictures, palette.fm, GauGAN, GANPaint, MindsEye, and the very wide variety of AI tools in RunwayML, including but not limited to Infinite Image. You can also use these tools in combination with each other (such as by extending Midjourney images with Infinite Image). NOTE: To make your book look good, you’ll probably need to generate images in the highest resolution possible, using either Midjourney’s or Runway’s “Upscale” feature, or a superresolution AI upscaling tool like Waifu2x or Remini.

- To generate text, you may use ChatGPT, but you are also welcome to use other AI text-generating tools including Write with Transformer (GPT-2), InferKit Tool, NarrativeDevice, PhilosopherAI, SudoWrite, and TextSynth. You can also use these tools in combination with each other. You might find that older systems like GPT-2 produce “weirder” stuff.

- You are also welcome to “get your hand in there” and modify the text and/or images generated by the computer. There is no rule that “it must be 100% computer-generated”.

We will use the Lulu.com “Comic Book” template for InDesign. Download this template (lulu-comic-book-interior-template.indd inside the lulu-book-template-comic-book.zip folder) by navigating through this form here. Select “Comic Book” and (for books between 2-32 pages) the “saddle stitch” binding. You may print in color or black-and-white, up to you. Your book may have up to 32 pages. Here are some important pain points to note about using Lulu’s templates:

- When you first open it, Lulu’s template has the locked “Template” layer set as the active layer, so you won’t be able to do anything. This is very frustrating. Go to the Layers menu and switch your active layer to the unlocked layer called “Your Artwork”.

- For some infuriating reason, Lulu’s template comes with all typography set to “All Caps”. This means that no matter what text you type or paste, it LOOKS LIKE THIS. Fix this by going to the Window→Type&Tables→Character palette, opening the Options hamburger menu in the upper right, and unchecking the “All Caps” checkbox.

- The Lulu template comes with the default font being “Open Sans”. If you don’t happen to have this font on your system, then all the text you try to display will be highlighted pink, indicating an error status (i.e. missing font). Choose a different font in the Character menu.

- To add pages using the Pages menu, go to the Pages palette, open the tiny Options hamburger menu in the upper right, and enable “Allow Document Pages to Shuffle”.

- I strongly suggest that you go File→DocumentSetup→Enable Facing Pages. This will help make it clear which page is on the left or right side of your book’s spreads.

- Here is a revised cover template (2024) that will be helpful when you lay out the cover.

- When you export the cover, make sure you have Export As Spreads checked.

- If you use full bleed on your book (meaning, you want ink to go all the way to the edges), make sure that your design goes past the edge of the page, and make sure you set a Bleed of 0.125 inches when you export the file. Otherwise, Lulu will complain that your document is the wrong size.
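To make the bleed arithmetic concrete, here is a small Python sketch that computes the exported page size and a rough pixel target for full-bleed images. It rests on several assumptions that are not stated in this assignment: that the 0.125 bleed is in inches and is added to all four edges of each exported page, that the trim size is 6.625 × 10.25 inches (an illustrative value; confirm it against the template you downloaded), and that 300 pixels per inch is an adequate print resolution (a common rule of thumb, not a Lulu requirement I can vouch for).

```python
import math

BLEED_IN = 0.125  # bleed per edge, assumed to be in inches


def page_with_bleed(trim_w_in, trim_h_in, bleed_in=BLEED_IN):
    """Return (width, height) in inches after adding bleed to all four edges."""
    return (trim_w_in + 2 * bleed_in, trim_h_in + 2 * bleed_in)


def pixels_needed(size_in, dpi=300):
    """Pixels needed to cover a (width, height) in inches, rounded up."""
    return tuple(math.ceil(d * dpi) for d in size_in)


trim = (6.625, 10.25)  # ASSUMED trim size in inches; check your template
full = page_with_bleed(*trim)
print(full)                 # (6.875, 10.5)
print(pixels_needed(full))  # (2063, 3150)
```

If an image you generated is much smaller than the pixel target for a full-bleed page, that is a sign it may need upscaling before it goes into the layout.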

What are the Actual Deliverables?

(I have presented an example of these deliverables on Lulu here, in PDF here, and on this page.)

Now:

- Be prepared to discuss a proposal for your project in class on 1/25; see #1.6: Project Proposal above.

- Make a book with AI-generated texts and/or images, with up to 32 pages, and publish this on Lulu.com using their “comic book” printed format.

- Create a Discord post, in the #1-8-chapbook channel.

- Begin by providing a single sentence declaring the title of your book, and briefly describing what your book “is”.

- Provide a screenshot of a “contact sheet” of the interior of your book, showing all of the pages. (You can do this with Preview or Acrobat.)

- Also provide a screenshot of your favorite page(s) or spread(s) from the book.

- Write a few sentences describing what inspired or motivated you to pursue this particular idea. What were you aiming for? How did your thinking evolve? Discuss your process.

- Write a couple of sentences of critical evaluation. Which specific aspects of your project do you feel proud of, and which aspects fell short of your vision?

- Include a link to your book, published at Lulu.com. Make sure this link is working. Your book must be publicly purchasable from Lulu.com, at (or very close to) the cost of production.

- Store a PDF copy of your book’s interior and cover in your CMU Google Drive. In your Discord post, include links to these PDF files. Make sure these links have ‘public viewing’ enabled.

- Be prepared to present your project in a class critique on Thursday, February 1st. You’ll present it digitally, from a PDF stored in your CMU Google Drive.