“How will we change the way we think about objects, once we can become one ourselves?” – Simone Rebaudengo

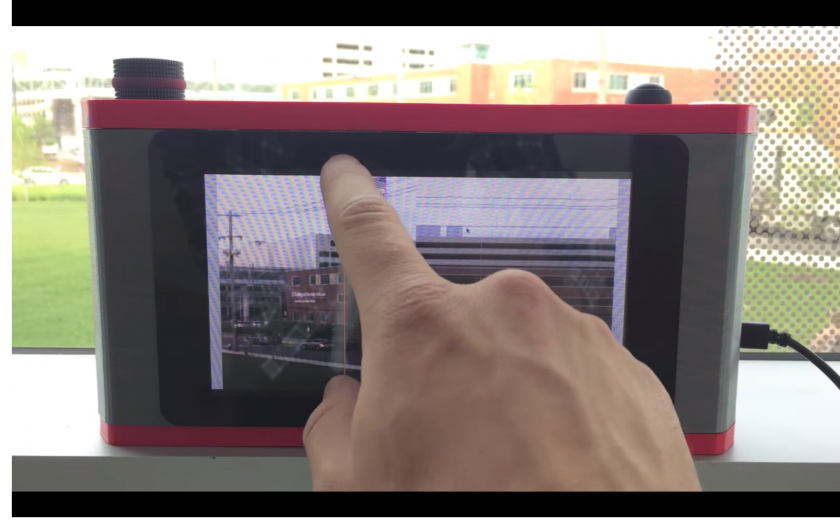

The aim of this project is to leverage a surprisingly available technology, the Wacom tablet, to change one’s perspective on drawing. Placing your view right at the tip of the pen, mirroring your every tiny hand gesture, changes the meaning of scale and makes drawing a lot more visceral.

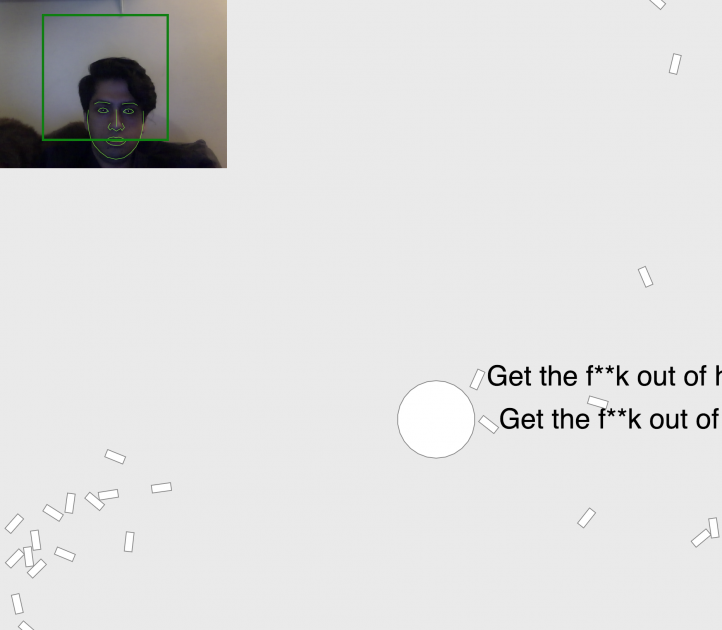

Part of this comes from every micro-movement of your hand (with precision limited by the Wacom tablet) being magnified to alter the pose of your camera. Furthermore, having such a direct, scaled connection between your hand and your POV allows you to do interesting things with where you’re looking.

Technical Implementation

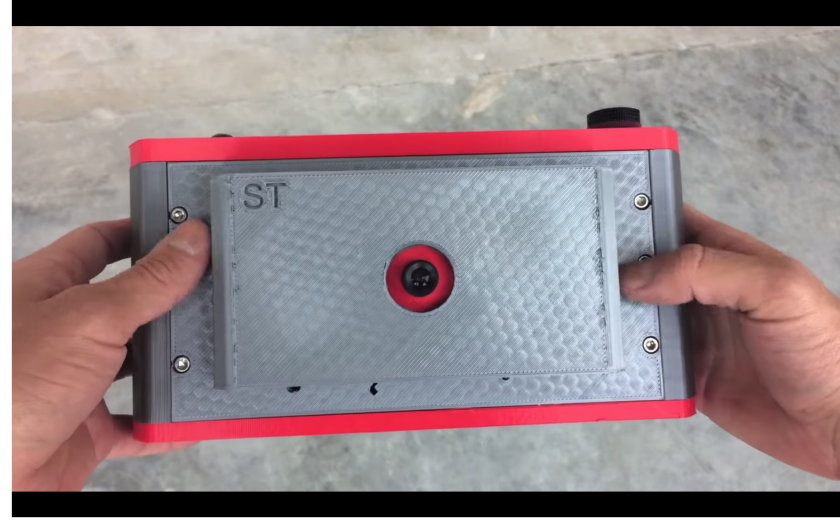

I wrote a Processing application that uses the tablet library to read the Wacom’s data as you draw. It records the most precise version of your drawing to the canvas and sends the pen’s pose data to the VR headset over OSC.
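As a rough illustration of what sending pose data over OSC involves, here is a minimal sketch in Python rather than the original Processing code. The address `/pen/pose`, the choice of fields (position, pressure, tilt), and the host and port are all assumptions made for the example, not the project's actual message layout. The byte layout follows OSC 1.0: a null-padded address string, a null-padded type tag string, then big-endian 32-bit floats.

```python
import socket
import struct


def osc_pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_pen_pose(x, y, pressure, tilt_x, tilt_y, address="/pen/pose"):
    """Build one OSC message carrying five floats (hypothetical field set)."""
    args = (x, y, pressure, tilt_x, tilt_y)
    type_tags = "," + "f" * len(args)  # one 'f' tag per float argument
    return (
        osc_pad(address.encode("ascii"))
        + osc_pad(type_tags.encode("ascii"))
        + struct.pack(">" + "f" * len(args), *args)  # big-endian float32
    )


def send_pen_pose(packet: bytes, host="192.168.0.10", port=9000):
    """Send the packet over UDP; host and port are placeholder values."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
```

Because OSC usually travels over UDP, a dropped packet simply means one missed pose update, which tends to be acceptable for a stream that refreshes many times per second.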

Note: One thing the Wacom cannot send right now is the pen’s rotation, or absolute heading. However, the Wacom Art Pen can enable this capability with one line of code.

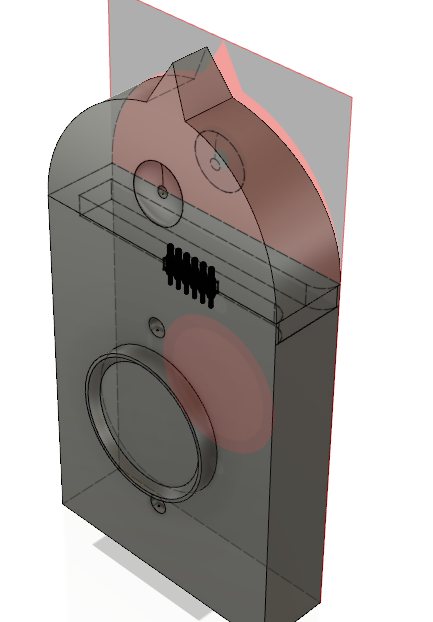

The VR app interprets the OSC messages and poses a camera accordingly. The Unity app also has a drawing canvas inside, and your drawing is mirrored to that canvas through the pen position and pen-down signals. This section is still a work in progress, as I found out too late (and after much experimentation) that it’s much easier to smooth the cursor’s path in screen space and then project it onto the mesh.
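The smooth-then-project idea can be sketched as follows, in Python rather than Unity C#. This is an illustration under assumptions: the smoothing is a simple exponential moving average (the filter actually used is not stated), and a flat quad defined by an origin and two edge vectors stands in for the mesh, where a real Unity scene would more likely use a raycast. The function names are hypothetical.

```python
def smooth_path(points, alpha=0.3):
    """Exponential moving average over 2D screen-space points.

    alpha near 0 smooths heavily; alpha = 1 leaves the path unchanged.
    """
    smoothed = []
    prev = None
    for x, y in points:
        if prev is None:
            prev = (x, y)  # the first point passes through untouched
        else:
            prev = (
                prev[0] + alpha * (x - prev[0]),
                prev[1] + alpha * (y - prev[1]),
            )
        smoothed.append(prev)
    return smoothed


def project_to_quad(u, v, origin, u_axis, v_axis):
    """Map normalized screen coords (u, v) in [0, 1] onto a flat quad in 3D."""
    return tuple(o + u * a + v * b for o, a, b in zip(origin, u_axis, v_axis))


# Smooth in 2D first, then lift each smoothed point onto the canvas surface.
screen_path = [(0.0, 0.0), (1.0, 1.0), (0.5, 1.0)]
world_path = [
    project_to_quad(u, v, (0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 1.0, 0.0))
    for u, v in smooth_path(screen_path, alpha=0.5)
]
```

Filtering before projection keeps the smoothing a plain 2D problem; smoothing points after they land on a mesh would have to deal with the surface's curvature and seams.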

The sound was done in Unity3D using granulation of prerecorded audio of pens and pencils writing. This causes some performance issues on Android, and I may have to look into scaling this back.
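For readers unfamiliar with granulation, here is a minimal sketch of the general technique in Python, not the Unity implementation used here. It assumes the source recording is a list of float samples at least `grain_len` long; the grain length, hop size, and random grain selection are illustrative choices.

```python
import math
import random


def granulate(source, out_len, grain_len=256, hop=128, seed=0):
    """Overlap-add randomly chosen, Hann-windowed grains from `source`."""
    rng = random.Random(seed)
    # The Hann window fades each grain in and out to avoid clicks at the edges.
    window = [
        0.5 - 0.5 * math.cos(2 * math.pi * i / (grain_len - 1))
        for i in range(grain_len)
    ]
    out = [0.0] * out_len
    for start in range(0, out_len - grain_len + 1, hop):
        # Pick a random read position that leaves room for a whole grain.
        src = rng.randrange(0, len(source) - grain_len + 1)
        for i in range(grain_len):
            out[start + i] += source[src + i] * window[i]
    return out
```

The work scales with the number of grains per second, so a larger `hop` (fewer overlapping grains) is one plausible way to scale the effect back on slower devices.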