The Abovemarine

This project by Adam Ben-Dror uses OpenCV to track the position of a fish in a mobile fishbowl. It then moves the bowl based on the direction the fish is swimming, allowing the fish to roam “freely” on land.
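To make the idea concrete, here is a minimal sketch of how a tracked fish position could be turned into a drive command. It is not Ben-Dror's code. It assumes OpenCV has already produced the fish's centroid in pixel coordinates, and it uses the fish's offset from the center of the frame as a stand-in for the direction it is swimming. The function name `steering_command` and the `dead_zone` parameter are my own hypothetical choices.

```python
import math


def steering_command(fish_xy, frame_size, dead_zone=0.15):
    """Map a fish centroid (pixels) to a drive vector in [-1, 1] per axis.

    Hypothetical helper: the centroid is assumed to come from an
    OpenCV tracking step that is not shown here.
    """
    width, height = frame_size
    # Offset from the frame center, normalized so the frame edges sit at +/-1.
    dx = (fish_xy[0] - width / 2) / (width / 2)
    dy = (fish_xy[1] - height / 2) / (height / 2)
    # Inside the dead zone the bowl stays put, so small wiggles are ignored.
    if math.hypot(dx, dy) < dead_zone:
        return (0.0, 0.0)
    return (dx, dy)


# Fish at the right edge of a 640x480 frame: drive fully along +x.
print(steering_command((640, 240), (640, 480)))
```

The dead zone is there so the bowl does not twitch every time the fish drifts slightly off center; a real build would probably need to tune it.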

I like this project because it is an interesting use of computer vision, and of technology as a whole. It reminds me of the “reverse SCUBA suit” created by Dr. Wernstrom in the eighth episode of Futurama. While the concept is similar, Adam Ben-Dror’s project is much simpler and more elegant. I also appreciate its no-frills design, and the documentation is very professionally done.

Bit Planner – A Cloud Synchronized Lego Calendar

This project, created by Vitamin Design, is a calendar that organizes workflow: projects are represented by different colors, people by rows, and half-days by columns. I like it for its simplicity, and because the team made an app that updates their Google Calendars from a photo of the board using some simple OpenCV.
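The layout described above maps neatly onto a small data structure. The sketch below is my own guess at the decoding step, not Vitamin Design's implementation. It assumes the image processing has already reduced the photo to a grid of color labels, and the names `decode_board`, `people`, and `color_to_project` are hypothetical.

```python
def decode_board(grid, people, color_to_project):
    """Turn a grid of color labels into (person, day, half, project) tuples.

    Rows are people and columns are half-days, as on the Lego board.
    Color classification is assumed to have happened already.
    """
    entries = []
    for row, person in zip(grid, people):
        for col, color in enumerate(row):
            project = color_to_project.get(color)
            if project is None:  # empty cell or a color with no project
                continue
            # Two columns per day: even index is morning, odd is afternoon.
            day, half = divmod(col, 2)
            entries.append((person, day, "AM" if half == 0 else "PM", project))
    return entries


board = [
    ["red", "red", None, "blue"],
    [None, "blue", "blue", None],
]
for entry in decode_board(board, ["Ana", "Ben"], {"red": "Website", "blue": "App"}):
    print(entry)
```

Each tuple could then be pushed to a calendar as one half-day event, which is roughly what I imagine the app doing after it reads the photo.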

As I enjoy Legos and am in constant need of more organization, this project really struck me. Where a simple Google Calendar would have sufficed, this goes further by letting people interact with the schedule in the physical world (and with Legos).

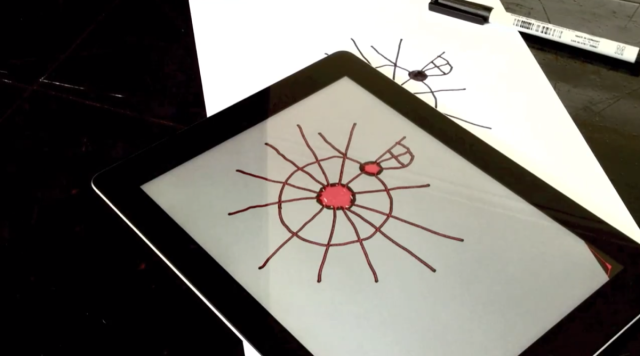

Tunetrace – Drawings to Music

Ed Burton’s app, Tunetrace, lets users take pictures of drawings and then creates a tune based on their shape, their topographies, and a simple set of rules. I like it because it shows another interesting use of OpenCV, and because it is an iOS app built in openFrameworks. It is good to see that oF is capable of producing streamlined apps for mobile devices.

I thought, though, that the melodies it created were not very interesting. It also seemed to me that the user has no control over which tones are produced. After searching online, I found no posts from people sharing Tunetraces they thought were interesting. This makes me assume that either no one managed to make something amazing with the system, or no one was interested enough to take the time to make something worth sharing. I would like to see a system like this that elegantly creates music from a drawing while giving the user more control.