Motivation

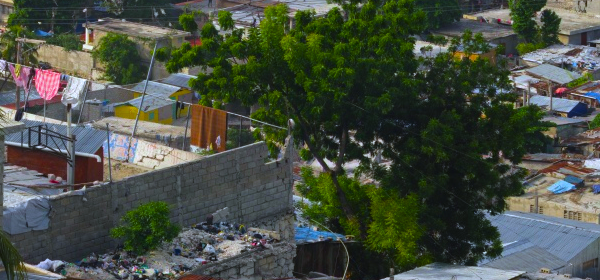

Higher-resolution imaging systems and computer screens have made it possible to explore images in far more detail than before. Unfortunately, fast internet connections and ever-more-compact computing devices like tablets, combined with virtually limitless online content, mean that we frequently pass over the details that lend value to these images. In short, by the nature of our technology and culture, we frequently see the forest but not the trees.

Silt, an Interactive Exploration of Detail

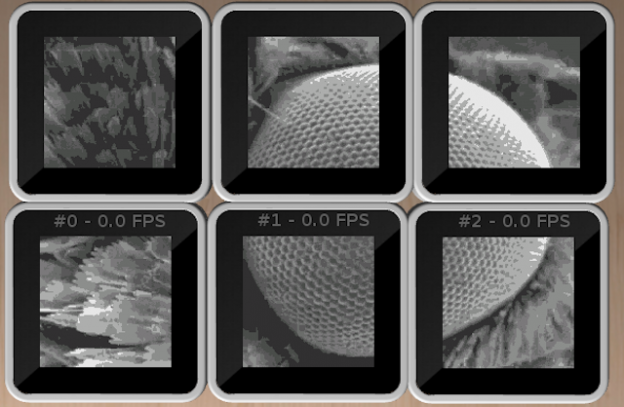

Silt is an application for the Sifteo interactive game system that provides a tangible means of exploring large images from a new perspective. The project is designed as an installation piece for classrooms or museums, and may be used in live settings for early education later this semester. Each cube is a small window into the image that can be moved using pan and tilt gestures. Multiple cubes can be connected to link their views and provide a larger window into the image. New images can be loaded using a simple app that automatically formats a selected image and lets the user select a quality factor.

Implementation

This is an extension of the Sifteo aspect of my submission for Project-0, and is a complete rewrite of the previous code. In addition to the Sifteo SDK, three core pieces of software allow the user to load new images onto the cubes without having to touch the command line or worry about image formatting.

The simple user interface is written in Processing and asks the user to select an image and a quality factor. The software scales down the image if it is far too large, then subtly adjusts the resolution so that each dimension is a multiple of 8 pixels (necessary for Sifteo’s image compression) before converting it to PNG. This program also rewrites the Lua asset generation script based on the parameters intrinsic to the image and the compression values. Lastly, the Processing app runs a shell script which loads the SDK tools and compiles the Sifteo app, which must be rebuilt for each new image. Upon completion, the script first attempts to load the compiled app onto any connected Sifteo base. If no base is connected, the Siftulator emulation program is used instead. An error message appears if the size of the image or the compression settings were set too low, which prompts the user to re-run the Processing app with different parameters. This has only been tested on OS X and is unlikely to work on other platforms, though cross-platform compatibility is in the works.
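The resizing step can be sketched as follows. This is a minimal illustration in Python rather than the Processing code itself; the `max_side` limit and the round-to-nearest rule are assumptions, since the write-up only says that oversized images are scaled down and that each dimension ends up a multiple of 8.

```python
def fit_dimensions(width, height, max_side=1024, tile=8):
    """Return (width, height) scaled down to fit max_side and snapped to tile.

    max_side is a hypothetical limit; the real app's threshold is not stated.
    """
    # Shrink only if the longer side exceeds the limit; never enlarge.
    scale = min(1.0, max_side / max(width, height))

    def snap(value):
        # Round to the nearest multiple of `tile`, keeping at least one tile.
        return max(tile, int(round(value / tile)) * tile)

    return snap(width * scale), snap(height * scale)


print(fit_dimensions(1000, 750))
print(fit_dimensions(4096, 2048))
```

Snapping to the nearest multiple changes each dimension by at most a few pixels, which matches the "subtle" adjustment described above.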

The Sifteo app itself is initially based on the Sensors demo from the SDK, but is only partially event-driven. The cubes respond to two actions: connecting cubes and tilting motions. A neighbor attachment is an event that triggers the active cube (the last cube whose screen was touched) to assume the virtual orientation that matches the neighboring cube, which compensates for any physical rotation. After this step, the cube updates its coordinates to display the appropriate adjacent area of the image. A gap is left between the images on adjacent cubes to account for the width of the bezels.
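The attachment logic might look roughly like the sketch below, written in Python for illustration rather than in the app's own code. The side numbering, the `BEZEL_GAP` value, and both function names are hypothetical; only the 128-pixel screen size and the idea of offsetting by screen plus bezel come from the hardware and the description above.

```python
SCREEN = 128     # Sifteo cube displays are 128x128 pixels
BEZEL_GAP = 24   # hypothetical number of image pixels hidden under the bezels

# Illustrative side numbering, counterclockwise: 0=top, 1=left, 2=bottom, 3=right.
SIDE_OFFSETS = {0: (0, -1), 1: (-1, 0), 2: (0, 1), 3: (1, 0)}


def quarter_turns(anchor_side, active_side):
    """Quarter turns to apply to the active cube's virtual orientation.

    anchor_side is the side of the stationary cube (in image orientation)
    that was touched; active_side is the physical side of the active cube
    that touched it. After rotating, the active cube's touching side faces
    the direction opposite to anchor_side.
    """
    return (anchor_side + 2 - active_side) % 4


def neighbor_origin(anchor_origin, anchor_side, gap=BEZEL_GAP):
    """Top-left image coordinate for a cube attached on anchor_side."""
    dx, dy = SIDE_OFFSETS[anchor_side]
    step = SCREEN + gap  # skip one screen plus the bezel width
    return (anchor_origin[0] + dx * step, anchor_origin[1] + dy * step)


# A cube attached with its left side against the anchor's right side
# needs no rotation and shows the region one screen-plus-gap to the right.
print(quarter_turns(3, 1), neighbor_origin((100, 200), 3))
```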

In the original implementation (which was also incapable of dealing with cube rotation) the bezels acted as blind spots. The Processing app cut the source image into tiles and served each as its own indexed asset image. This meant that the areas covered by the bezels were permanently obscured (and in fact, not even present). For the purposes of thoroughly exploring an image, this was unacceptable.

The new implementation loads a single massive asset image, and each cube draws only the tiles corresponding to its global coordinates in pixels. Panning occurs pixel-by-pixel and simultaneously across all cubes, based on the accelerometer values of the most-tilted cube. Stationary cubes therefore pan along with the tilted one, which prevents previously joined image segments from drifting apart.

Panning pixel-by-pixel posed a slight technical challenge: the cubes allow pixel-level panning, but due to hardware limitations the image wraps around after 18 tiles of 8 pixels each (144 pixels), so the entire image cannot be panned in this mode. Panning in 8-pixel increments is possible by simply changing the tiles loaded from the main image, but this results in somewhat clunky motion that ruins some of the immersive qualities of the interaction. Instead, the app combines both methods, loading new tiles and resetting the panning values every 8 pixels.

This approach initially suffered from performance issues, because calling two non-trivial graphics operations for every video buffer while rendering asynchronously can lead to lag and some image tearing. The problem is mostly solved by sequentially checking the accelerometers, calling all draw operations, allowing the system to paint, and then explicitly waiting for painting to complete on all cubes before checking the accelerometers and writing to the video buffers again. This happens quickly enough that the resulting performance is acceptable.
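The combined panning scheme comes down to splitting each global pixel coordinate into a tile index and a sub-tile remainder. The sketch below is in Python for illustration, not the app's own code; the function names, the tilt metric used to pick the most-tilted cube, and the `dead_zone` threshold are all assumptions.

```python
TILE = 8  # tile edge length in pixels


def most_tilted(tilts):
    """Pick the (ax, ay) accelerometer reading with the largest tilt.

    Uses |ax| + |ay| as the tilt measure, which is an assumed metric.
    """
    return max(tilts, key=lambda t: abs(t[0]) + abs(t[1]))


def advance(origin, tilt, dead_zone=10):
    """Move an origin by one pixel per axis in the direction of tilt."""
    def step(a):
        # Ignore small readings so a resting cube does not drift.
        return 0 if abs(a) < dead_zone else (1 if a > 0 else -1)
    return (origin[0] + step(tilt[0]), origin[1] + step(tilt[1]))


def split_offset(pixels):
    """Split a global pixel coordinate into (first tile index, pixel pan).

    The tile index selects which tiles to load from the large image; the
    remainder (0..7) is the hardware pan, so it never approaches the
    wrap-around limit.
    """
    return divmod(pixels, TILE)


tilt = most_tilted([(1, 2), (-30, 5), (10, 10)])
origin = advance((40, 16), tilt)
print(origin, split_offset(origin[0]), split_offset(origin[1]))
```

Every cube would apply the same step, so linked views stay aligned while the remainder alone drives the smooth part of the motion.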

The app also detects if any of the cubes have reached the border of the image, at which point all cubes are prevented from moving further in that direction. Blocking the motion of all cubes prevents the edges from causing the images to bunch up and lose alignment.
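A minimal sketch of this border check, in Python for illustration: if any cube would leave the image along an axis, motion along that axis is cancelled for every cube. The function name and the data layout (a list of top-left origins) are hypothetical.

```python
def clamp_step(origins, step, image_size, screen=128):
    """Return the (dx, dy) step that all cubes may take together.

    origins: top-left image coordinates, one per cube.
    step: proposed (dx, dy) shared by all cubes.
    image_size: (width, height) of the full image in pixels.
    """
    dx, dy = step
    width, height = image_size
    for x, y in origins:
        # One cube hitting a border blocks that axis for all cubes.
        if x + dx < 0 or x + dx + screen > width:
            dx = 0
        if y + dy < 0 or y + dy + screen > height:
            dy = 0
    return dx, dy


# The first cube is already at the left edge, so leftward motion is
# blocked for both cubes while downward motion is still allowed.
print(clamp_step([(0, 10), (152, 10)], (-1, 1), (512, 512)))
```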

Limitations and Future Directions

Update: Silt now supports the four-asset-group method described below, allowing for 4x larger images!

The main limitation of the current implementation is that the image size is limited by how much can be stored inside one asset group. The first step to correcting this limitation is to load all four available asset groups with quadrants of the image, thus quadrupling the size of the image that can be stored. A more advanced future implementation will reassign asset groups to different large chunks of the image based on the centroid of all current cubes, so that as long as the user does not pan too quickly, the only limit on image size is the storage space on the Sifteo base.
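As a rough illustration of the quadrant approach, the Python sketch below maps a global pixel coordinate to one of four groups and a coordinate local to that group. The group numbering and the even split are assumptions, and the image dimensions are assumed to be multiples of 16 so that each quadrant still divides into whole 8-pixel tiles.

```python
def locate(x, y, width, height):
    """Map a global pixel to (group index, (local x, local y)).

    Groups are numbered 0..3 in row-major order: top-left, top-right,
    bottom-left, bottom-right. This layout is hypothetical.
    """
    half_w, half_h = width // 2, height // 2
    col = 1 if x >= half_w else 0
    row = 1 if y >= half_h else 0
    return row * 2 + col, (x - col * half_w, y - row * half_h)


print(locate(300, 100, 512, 512))
```

A cube whose window straddles a quadrant boundary would need tiles from more than one group, so each tile would be looked up individually in this scheme.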

There are several exciting future directions that I would like to pursue with this project. The first step is to refine the Processing code into a standalone app that gives the user more feedback and control when loading images. The second modification will allow each cube to pan separately. This lets children each hold their own cube and explore the image freely, while encouraging them to link their cubes in order to see larger parts of the picture. Applications might include exploring electron micrographs of insects and plants, or perhaps high-resolution maps or famous paintings. Further engagement can be achieved through activities like I-spy games or scavenger hunts. I would like the app to remain as general-purpose as possible to encourage creativity in its use.

As always, I encourage any feedback on this project. Feel free to email me at mdtaylor@cmu.edu.

The code (minus the necessary Sifteo SDK tools) can be found on GitHub at https://github.com/SteelBonedMike/Silt