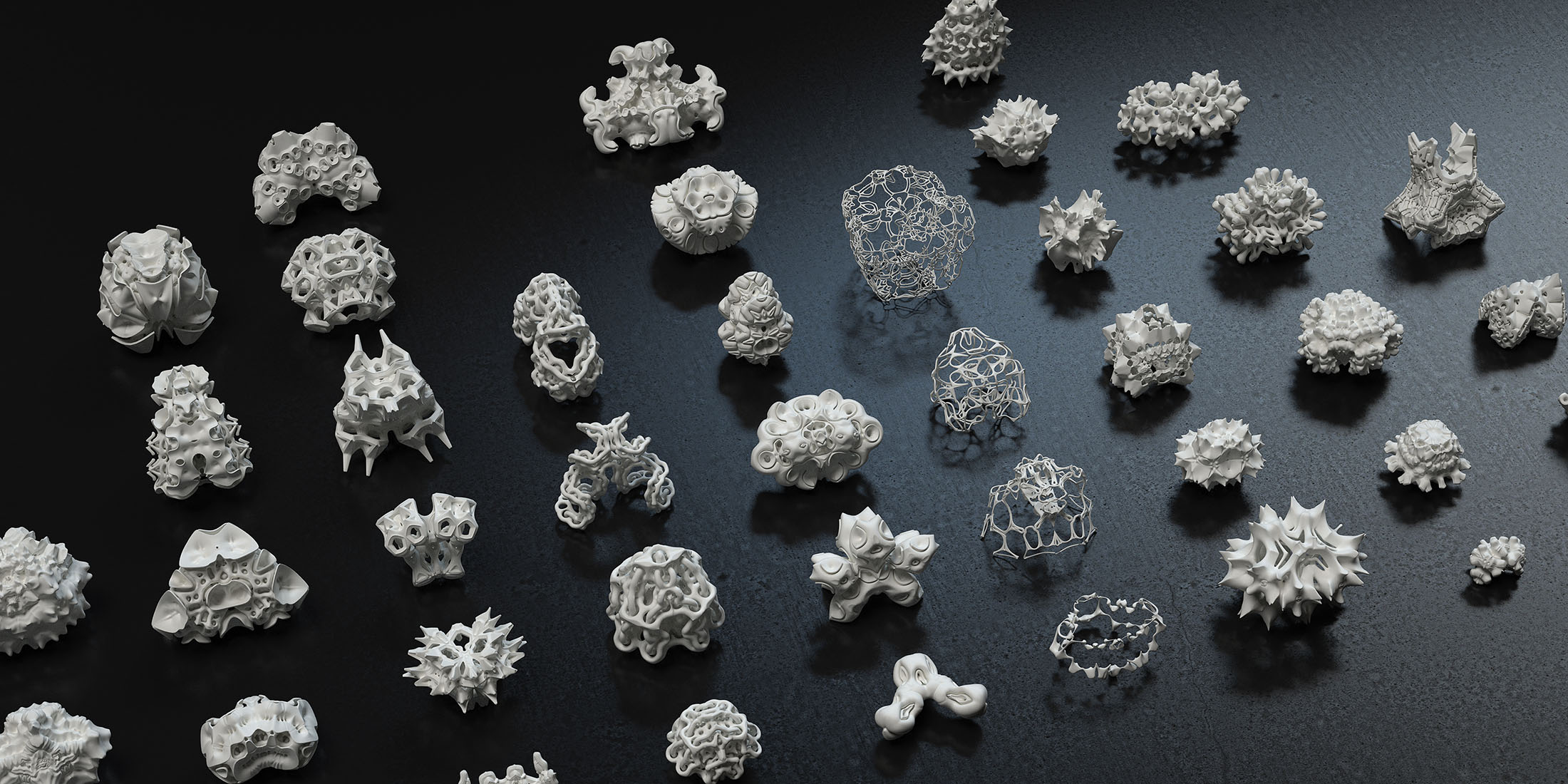

After Allison Parrish’s lecture I was really interested in exploring what other artworks try to create an environment for “meaning” to occur by establishing some basic constraints on text/symbols. For example, the piece that generates every possible 32×32 black and white icon was really fascinating. I then came across a paper that outlines a generative grammar for specifying painting and sculpture. This seems useful for developing a really narrow range of patterns in 2D and 3D. I think it would be interesting if they introduced a bit more uncertainty/noise into that system. Without it there is no texture and there are no ambiguous, “blurred” forms; everything looks clean-cut and predictable.

http://www.cs.bc.edu/~gips/SGBestPapers72.pdf
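
As a rough sketch of what "a bit more uncertainty/noise" could mean in a rule-based system like this, here is a toy example in Python. It is not the formalism from the paper: the single rule (`subdivide`, which splits a rectangle into four) and the `noise` parameter are hypothetical names made up for illustration. With `noise=0` the rule always splits at the exact midpoint and produces a regular grid; with `noise>0` the split point is jittered, so the same rule gives irregular results.

```python
import random


def subdivide(rect, depth, noise=0.0, rng=None):
    """Recursively apply one hypothetical rule: split a rectangle into four.

    rect is (x, y, w, h). noise should stay below 0.5 so that every
    child rectangle keeps a positive width and height.
    """
    if rng is None:
        rng = random.Random(0)
    if depth == 0:
        return [rect]
    x, y, w, h = rect
    # 0.5 is the exact midpoint; noise shifts the split by up to +/- noise.
    fx = 0.5 + rng.uniform(-noise, noise)
    fy = 0.5 + rng.uniform(-noise, noise)
    sx, sy = w * fx, h * fy
    children = [
        (x, y, sx, sy),
        (x + sx, y, w - sx, sy),
        (x, y + sy, sx, h - sy),
        (x + sx, y + sy, w - sx, h - sy),
    ]
    result = []
    for child in children:
        result.extend(subdivide(child, depth - 1, noise, rng))
    return result


# Same rule, same depth: one deterministic run and one noisy run.
clean = subdivide((0.0, 0.0, 1.0, 1.0), depth=2)
noisy = subdivide((0.0, 0.0, 1.0, 1.0), depth=2, noise=0.2, rng=random.Random(1))
```

Both runs produce 16 rectangles that tile the unit square, but only the clean run makes them all the same size.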

Another piece I stumbled on was Frequency by Esther Hunziker. It is a video art project that has been “compressed several times in low resolution until the sharp edges started to blur and original movie signal disappeared, vanished into color abstractions”. This project is sort of the opposite of the approach I was describing before: it starts with some meaningful source material and, through this “degradation” of quality, ends up entirely focused on color/surface qualities. I think it looks like an abstract moving painting.

http://www.ref17.net/hunziker/frequency.html
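
To get a feel for how repeated lossy passes wear away sharp edges, here is a minimal sketch in Python. It is an assumption-laden stand-in and not the process used for Frequency: instead of a real video codec, each pass in the hypothetical `degrade` function applies a 3×3 box blur and then rounds brightness down to a coarse set of grey levels. The tiny greyscale "frame" and the helper `sharpest_edge` are also made up for illustration.

```python
def degrade(img, levels=16):
    """One lossy pass: 3x3 box blur, then round down to `levels` grey steps."""
    h, w = len(img), len(img[0])
    step = 256 // levels
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            # Average the pixel with its neighbours, skipping cells off the edge.
            total = count = 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        total += img[yy][xx]
                        count += 1
            mean = total // count
            row.append((mean // step) * step)  # throw away fine gradations
        out.append(row)
    return out


def sharpest_edge(img):
    """Largest brightness jump between horizontally adjacent pixels."""
    return max(abs(r[i + 1] - r[i]) for r in img for i in range(len(r) - 1))


# Source "frame": a hard vertical edge between black and white.
frame = [[0] * 4 + [255] * 4 for _ in range(8)]
history = [frame]
for _ in range(5):
    history.append(degrade(history[-1]))
```

Comparing `sharpest_edge(history[0])` with `sharpest_edge(history[-1])` shows the hard edge in the source softening into a gradient after a few passes.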