For this assignment I was initially looking into the formation of lines in people’s signatures.

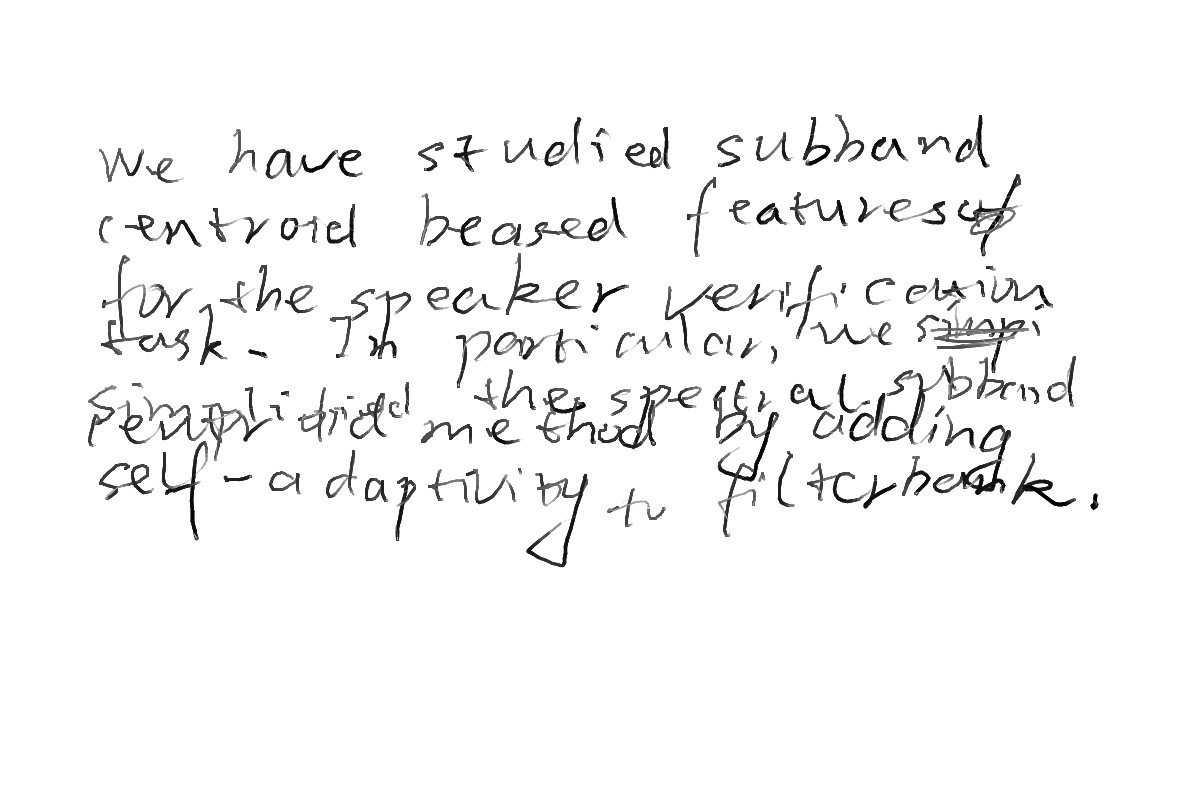

Then Golan gave me a dataset of English handwritings made by Chinese. As I spent some time looking through hundreds of handwritings I was curious about how computers would interpret them. I discovered an open source OCR called Tesseract that is originally intended to read scanned images of printed text. Since the source is not originally suitable for reading handwritings, it did a ridiculous job trying to read the handwritings. However it was interesting to read how Tesseract would interpret these “scribbles” as text, as if it was a guess and check game with a human judge grading the computer.

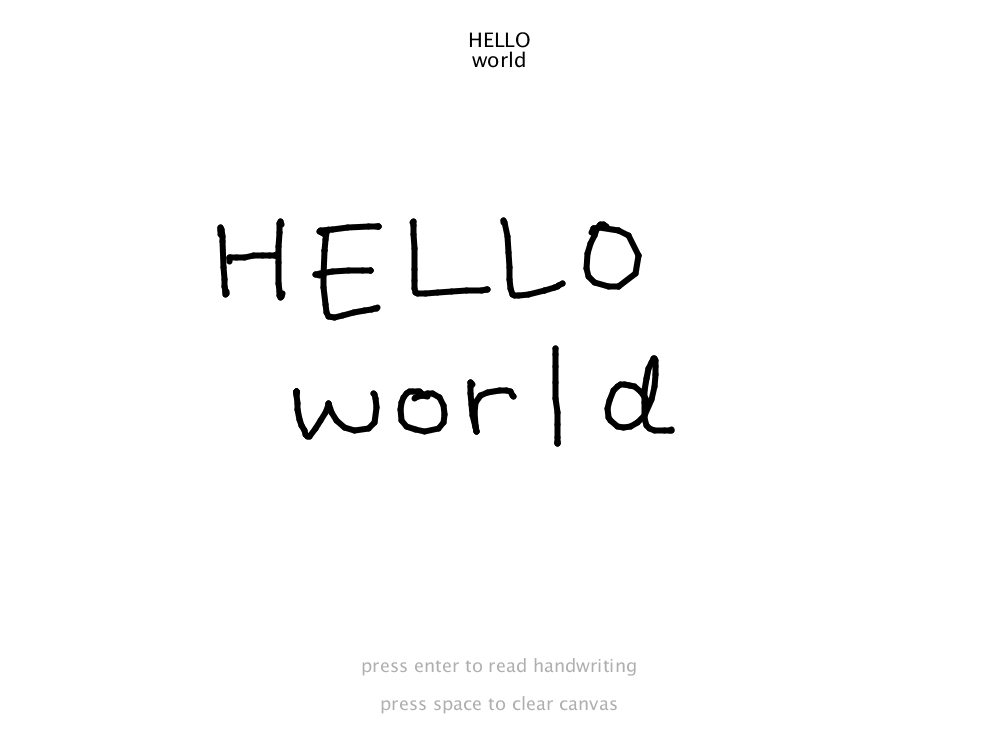

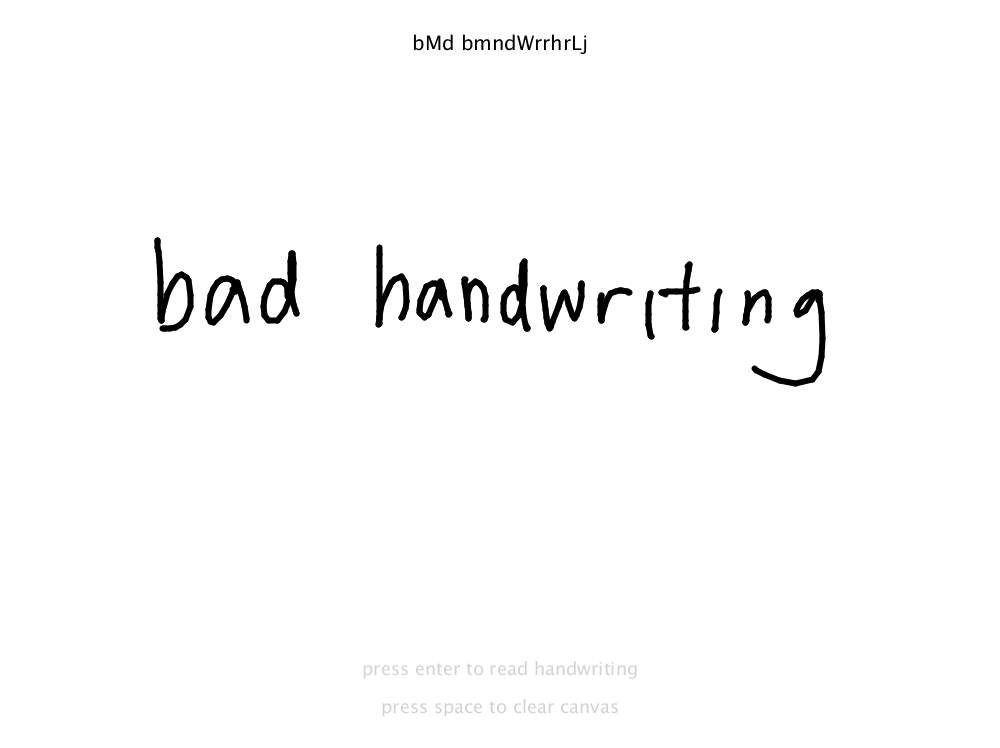

Inspired by Golan’s suggestion I developed an interactive program that uses Tesseract with Processing. After the user scribbles text or etc on the canvas, preferably with a writing tablet, and press enter, computer’s guess on what the user wrote appears on the top.

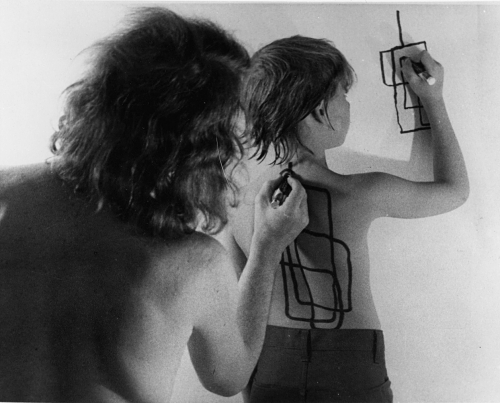

During the in-class crit we spent a lot of time discussing the distinction between handwriting and computer-generated text, and how it extends further to the relationship between humane dataset and computer’s interpretation of the dataset. Some made comments about how the program seems to lead the user to write more like a computer. Greater humanity in the dataset led to greater inaccuracy in the output. Although computer is able to read, digest, and modify typed text more fluently in some ways than people do, limits in operating the same activity are met with handwritings. Are computers able to mimic the intuitive activities that humans do, such as reading handwriting and signatures, and if so what is the threshold?

I applied some changes to the program after the crit last week, including options for guidelines to writing like a computer and improvements in accuracy. Perhaps more options can be added in terms of fonts and font sizes for guidelines. All of the additional changes were applied and shown in the demo.

GitHub: https://github.com/bykbyk301/handwritingX