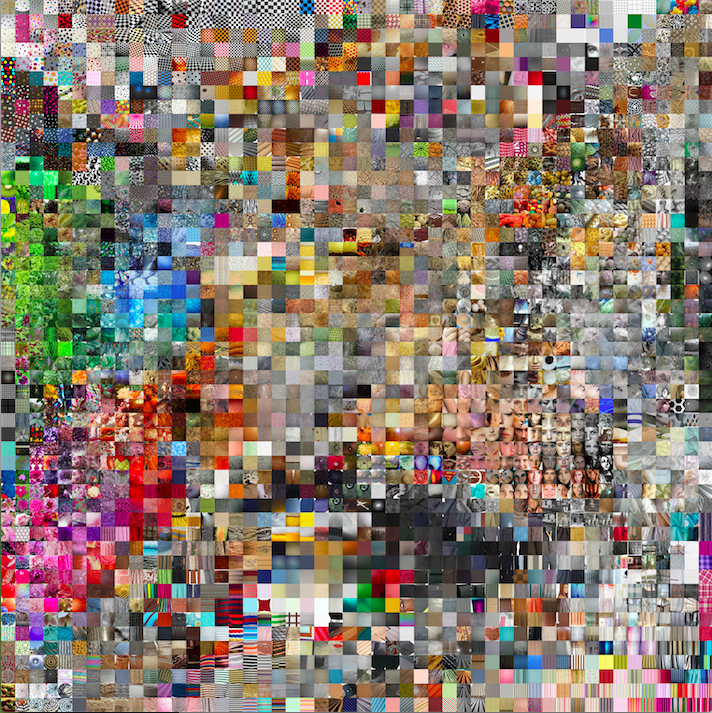

I began this project with an interest in the Describable Textures Dataset. I liked this set of images because they are playful, removed from their original context, and grouped not by subject but by the look and feel of the subject. From the t-SNE grid of this dataset, I identified a few areas I particularly enjoyed.

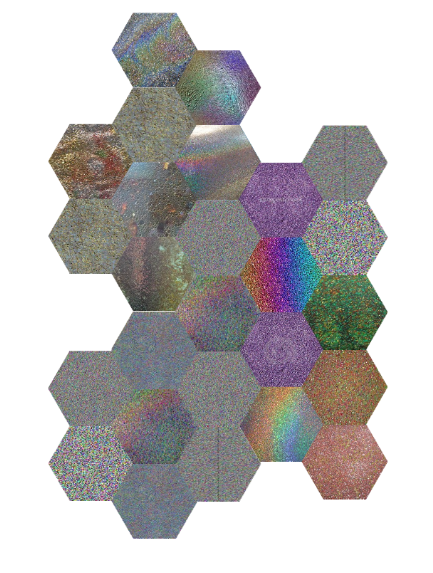

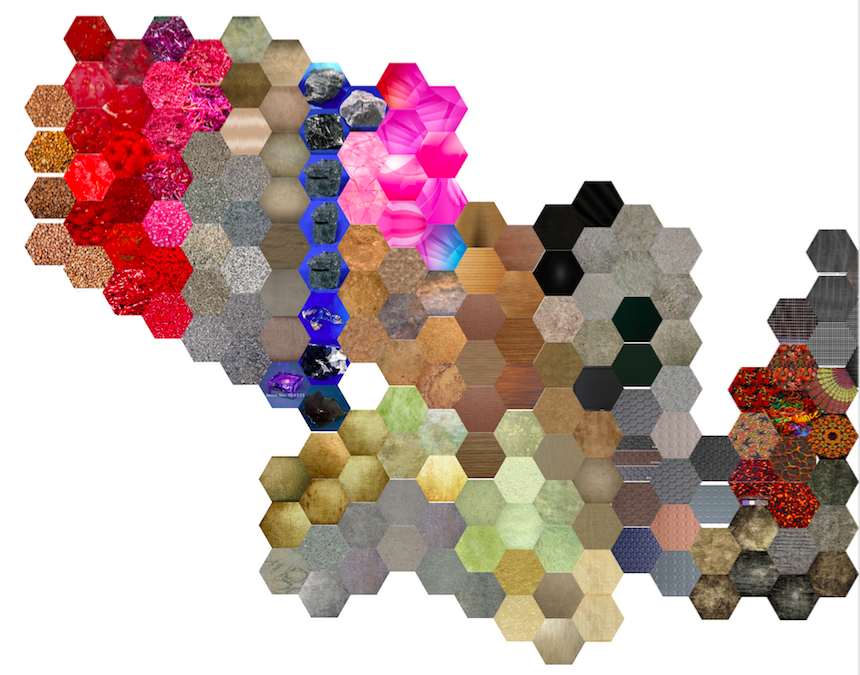

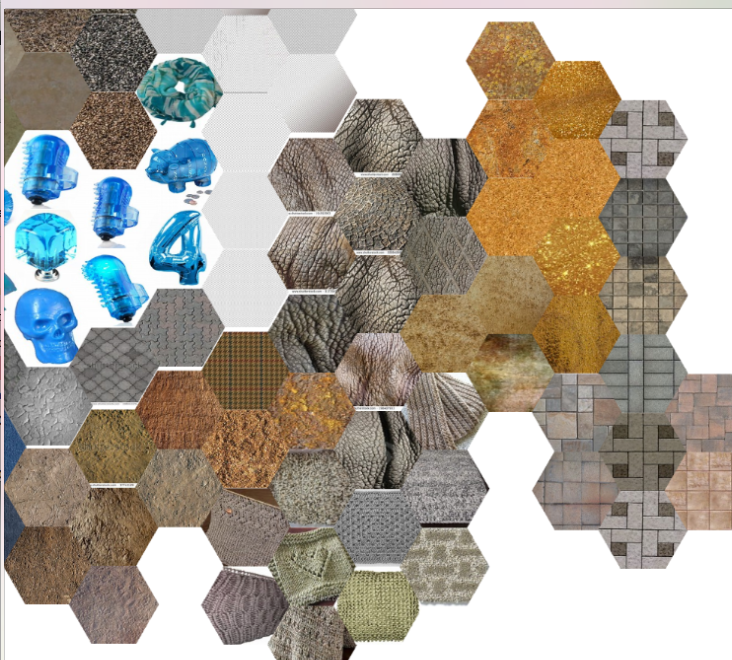

Thinking about what to do with the images beyond t-SNE, I wanted to continue the theme of grouping similar textures and to create a sense of surprise for the person viewing the groupings. I wanted to make a series of generative collages in which one texture fades into the next. I also wanted to expand the dataset beyond its initial set of 5,640 images.

I began by scraping Google Images' Visually Similar search results, but soon switched to Bing Images for feasibility reasons. Although I envisioned the final version of the project as a growing collage whose textures are determined by the user’s input, the tool I created implements only a limited subset of that functionality. Using a combination of p5, NodeJS, socket.io, and a headless browser automation library, I dynamically scrape visually similar images from Bing based on a rotating set of texture word queries.
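The "rotating set of texture word queries" can be pictured as a simple round-robin over a word list. The sketch below is only an illustration under that assumption: `createQueryRotation` is a hypothetical helper, and the three words are example texture adjectives, not the project's actual query list. It leaves out the scraping and socket.io parts entirely.

```javascript
// Hypothetical helper: cycles through a fixed list of texture words.
function createQueryRotation(words) {
  if (!Array.isArray(words) || words.length === 0) {
    throw new Error("words must be a non-empty array");
  }
  let index = 0;
  return {
    // Return the current word, then advance, wrapping at the end of the list.
    next() {
      const word = words[index];
      index = (index + 1) % words.length;
      return word;
    },
  };
}

// Example words only (assumption); the real query list is not shown in this post.
const rotation = createQueryRotation(["bubbly", "cracked", "woven"]);
const firstFour = [
  rotation.next(),
  rotation.next(),
  rotation.next(),
  rotation.next(),
];
console.log(firstFour.join(", "));
```

Each call to `next()` would supply the word for the next image search, so the collage keeps moving from one texture family to another instead of staying on a single query.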

The user navigates the collage with keystrokes, each of which adds a new tile to the collage. In a further iteration of the project, I would adjust the input and display of the texture queries. I would also look for ways to make collage making a more elegant process, whether through some other means of navigation or through pattern making.
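One way to think about the keystroke navigation is as a cursor moving over a grid, with a tile placed wherever the cursor lands. The sketch below assumes arrow keys and a square grid, neither of which is specified above. `MOVES`, `createCollage`, and `handleKey` are hypothetical names, and the image URLs are placeholders standing in for scraped results.

```javascript
// Hypothetical key-to-direction mapping (assumption: arrow keys move the cursor).
const MOVES = new Map([
  ["ArrowUp", { dx: 0, dy: -1 }],
  ["ArrowDown", { dx: 0, dy: 1 }],
  ["ArrowLeft", { dx: -1, dy: 0 }],
  ["ArrowRight", { dx: 1, dy: 0 }],
]);

// Collage state: a cursor position and a map from "x,y" to an image URL.
function createCollage() {
  return { cursor: { x: 0, y: 0 }, tiles: new Map() };
}

// Move the cursor for a recognized key and place a tile at the new cell
// if that cell is still empty. Unrecognized keys leave the collage unchanged.
function handleKey(collage, key, imageUrl) {
  const move = MOVES.get(key);
  if (!move) {
    return collage;
  }
  collage.cursor = {
    x: collage.cursor.x + move.dx,
    y: collage.cursor.y + move.dy,
  };
  const cell = `${collage.cursor.x},${collage.cursor.y}`;
  if (!collage.tiles.has(cell)) {
    collage.tiles.set(cell, imageUrl);
  }
  return collage;
}

// Simulate a short key sequence with placeholder image names.
const collage = createCollage();
["ArrowRight", "ArrowRight", "ArrowDown", "x", "ArrowLeft"].forEach((key, i) => {
  handleKey(collage, key, `placeholder-${i}.jpg`);
});
console.log(collage.cursor, collage.tiles.size);
```

Keeping the state in a plain object like this would let the drawing code simply loop over `tiles` each frame, and it leaves room to swap the arrow keys for some other form of navigation later.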

GitHub repo: https://github.com/kaurorah/iacd-image-collection