Playing with the guts of machine learning models to create a conversational design partner.

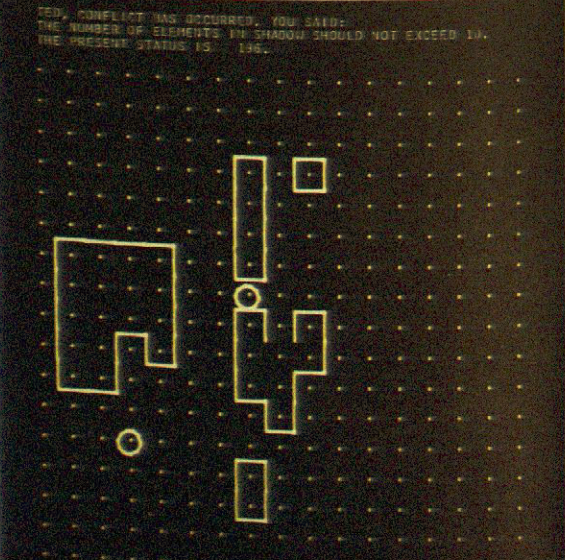

For my RA work with the Archaeology of CAD project, I am recreating Nicholas Negroponte’s URBAN5 design system. Built in the 1970s, its purpose was to “study the desirability and feasibility of conversing with a machine about an environmental design project.” For my final project, I would like to revisit this idea with a modern machine (i.e., a machine learning model).

Most applications of ML are focused on automatically classifying, generating, stylizing, completing, etc. I would like to create an artifact that frames the interaction as an open-ended conversation with an intelligent design partner.

In its early stages, machine learning functioned as a black box. It developed an understanding of the world in its subconscious. Just like us, it had trouble articulating its intuitions. As we work on explainability, we develop tools that allow the machine learning model to communicate its understanding.

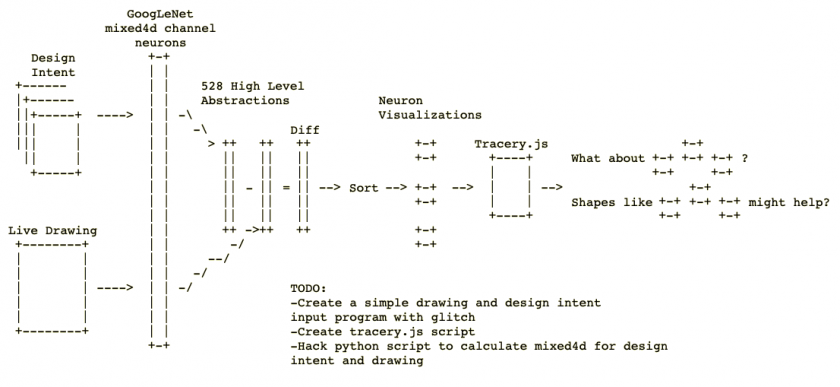

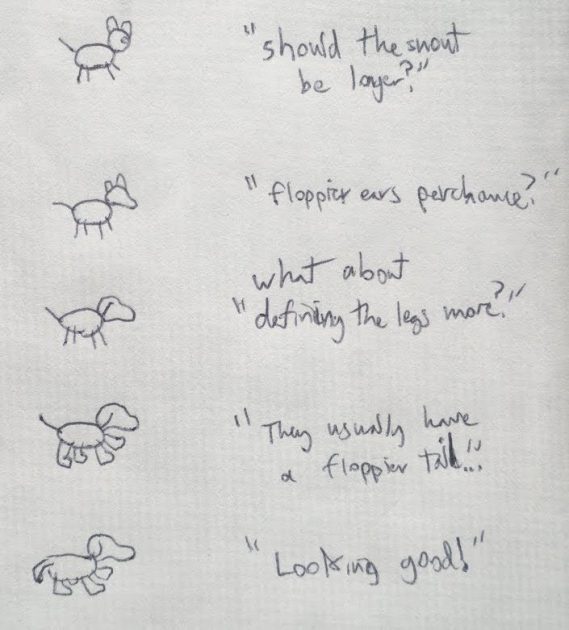

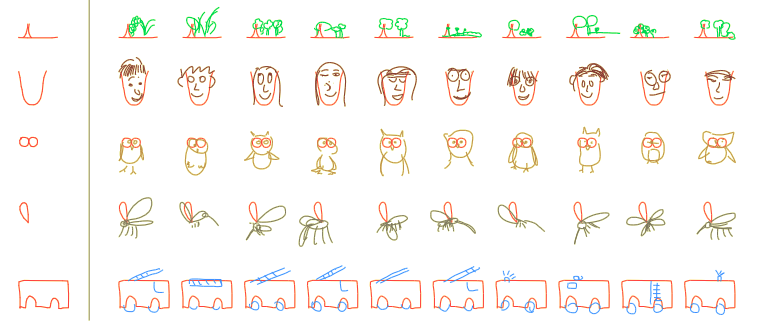

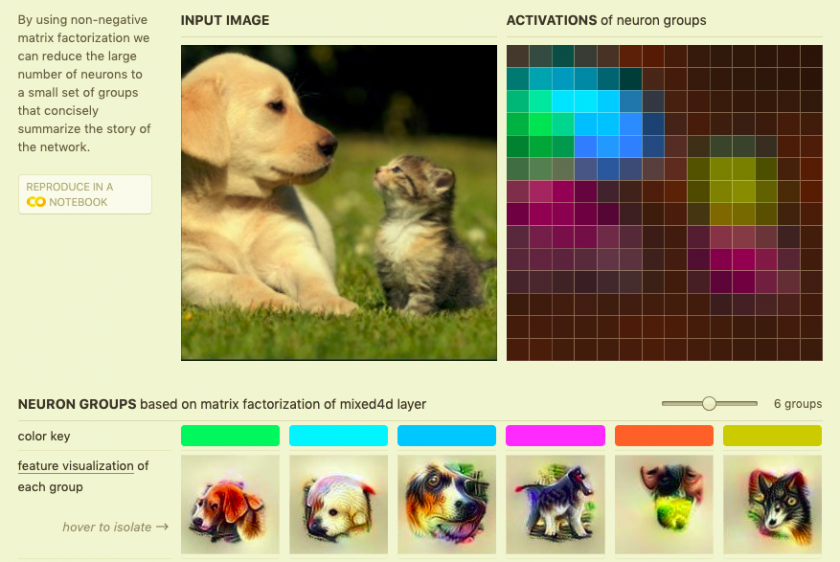

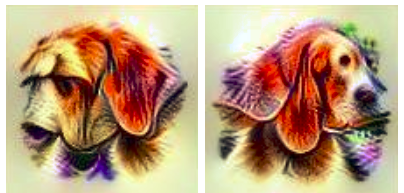

This project investigates this area through a drawing program with a chatbot powered by mixed4d, a hidden layer of GoogLeNet. As you draw, it will calculate the difference in mixed4d activations between your drawing and a design intent (which could be an image or a set of images) and then return the neurons with the greatest difference. Google provides an API for visualizing these neurons. This will produce a set of high-level abstractions that represent what your picture might be missing, given your intent. These images will be shown through various Tracery.js prompts. The purpose is to make it feel like a conversation with the machine rather than an insistence that you do what the machine tells you. I could also add some stochasticity or novelty checks to keep the suggestions fresh.
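To make the comparison step concrete, here is a minimal sketch in plain Python. It assumes the mixed4d activations have already been extracted and pooled into one number per channel, which is my simplification and not something the plan above fixes. The function names (`mean_vector`, `most_missing_channels`, `fresh_suggestions`) and the toy four-channel vectors are hypothetical, and reading "greatest difference" as "intent minus drawing" is also an assumption.

```python
def mean_vector(vectors):
    """Average several activation vectors (one per intent image), channel by channel."""
    n = len(vectors)
    return [sum(channel) / n for channel in zip(*vectors)]


def most_missing_channels(drawing, intent, k=3):
    """Return up to k channel indices where the intent exceeds the drawing the most.

    Assumption: "missing" means intent activation minus drawing activation,
    so only channels with a positive gap are returned.
    """
    gaps = [(i_val - d_val, idx)
            for idx, (d_val, i_val) in enumerate(zip(drawing, intent))]
    gaps.sort(key=lambda g: g[0], reverse=True)  # largest gap first
    return [idx for gap, idx in gaps[:k] if gap > 0]


def fresh_suggestions(drawing, intent, already_shown, k=3):
    """A simple novelty check: skip channels that were already suggested."""
    ranked = most_missing_channels(drawing, intent, k=len(drawing))
    return [c for c in ranked if c not in already_shown][:k]


# Toy example with four made-up channels instead of the real layer.
drawing = [0.1, 0.9, 0.0, 0.4]
intent_images = [[0.8, 0.9, 0.2, 0.1],
                 [0.6, 0.7, 0.6, 0.3]]
intent = mean_vector(intent_images)

suggested = most_missing_channels(drawing, intent, k=2)
next_round = fresh_suggestions(drawing, intent, already_shown={0})
```

The indices that come back are what would be handed to the neuron visualizer and then wrapped in a Tracery.js prompt. Filtering out already-shown channels is only one possible novelty check; random sampling among the top candidates would be another.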

This piece takes new machine learning interpretability technology, applies the idea of comparing high-level abstraction vectors, and frames it as a conversation with a machine. It proposes an interaction with machines as partners instead of ‘auto’-bots that do everything for us, make all our decisions, free us from work, and control our fate.